Hi All,

Maybe I chose a difficult competition after completing Part 1 of the Fast AI course, but I am going to stick to Jeremy’s suggestion to focus on one project, deliver it, and write a blog post about it! So, I am on the case…

Here is the link to the Understanding Clouds from Satellite Images competition.

I haven’t reached the modelling part yet, as I want to make sure I can visualise and understand the data before I apply the standard Fast AI approaches. But I am having a few issues with showing the data after masking.

In Lesson 3 (camvid), Jeremy showed that a mask can be passed via get_y_fn. However, the advantage there was that each mask file already had all the codes consolidated (one code per pixel). In this competition the masks overlap, which means that either an extension to the Fast AI library is necessary or we consolidate the masks ourselves.

Posts like this one from experts describe how people have extended the Fast AI codebase… I am still not 100% there with what they have done, so I wanted to run a model first using my second option: consolidating the masks ourselves.

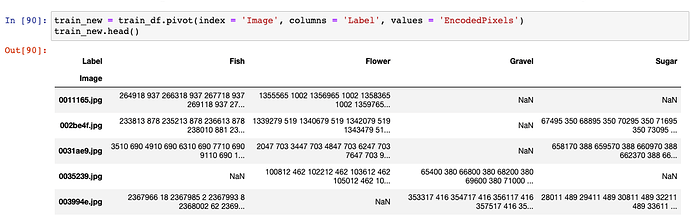

Based on suggestions from the forums, I have pivoted the input data into a dataframe with the image name as the index and the 4 labels as columns, where each cell holds the RLE string for that label.
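For reference, the pivot can be sketched roughly as follows. This is a minimal sketch that assumes the competition's train.csv layout, with an `Image_Label` column (image name and label joined by an underscore) and an `EncodedPixels` column; the tiny inline frame and its RLE strings are made up for illustration.

```python
import pandas as pd

# Hypothetical rows mimicking the assumed train.csv layout.
train = pd.DataFrame({
    "Image_Label": ["a.jpg_Fish", "a.jpg_Flower", "a.jpg_Gravel", "a.jpg_Sugar",
                    "b.jpg_Fish", "b.jpg_Flower", "b.jpg_Gravel", "b.jpg_Sugar"],
    "EncodedPixels": ["1 3 10 2", None, "5 4", None,
                      None, "2 2", None, "7 1"],
})

# Split "image.jpg_Label" on the last underscore into image name and label.
train[["image", "label"]] = train["Image_Label"].str.rsplit("_", n=1, expand=True)

# One row per image, one column per label, RLE string (or a missing value) as content.
train_new = train.pivot(index="image", columns="label", values="EncodedPixels")
```

Cells without a mask stay missing, which is why a later `isinstance(rle, str)` check is enough to skip them.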

I used the following function to generate and consolidate multiple masks into a single one:

    def generate_save_masks_new():
        #Traverse through train_new dataframe
        for index_image, image_row in train_new.iterrows():
            #print(train_new.loc[index_image,:])
            rles = train_new.loc[index_image, :]
            shape = open_image(train_path/index_image).shape[-2:]
            final_mask = torch.zeros((1, *shape))
            for k, rle in enumerate(rles):
                if isinstance(rle, str):
                    mask = open_mask_rle(rle, shape).px.permute(0, 2, 1)
                    final_mask += (2**k)*mask
            Image(final_mask).save(mask_new_path/index_image)

(Source of inspiration is here.) Thanks @florobax
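To make the `2**k` consolidation concrete, here is a minimal sketch in plain Python of how overlapping labels combine into one value per pixel and how that value can be decoded again. The label names and their order are assumptions (the column order of the pivoted dataframe); `encode` and `decode` are hypothetical helpers, not Fast AI functions.

```python
from itertools import product

LABELS = ["Fish", "Flower", "Gravel", "Sugar"]  # assumed column order

def encode(present):
    """Combine the labels present at a pixel into one integer (bit k = label k)."""
    return sum(2 ** k for k, name in enumerate(LABELS) if name in present)

def decode(value):
    """Recover the list of labels from a consolidated pixel value."""
    return [name for k, name in enumerate(LABELS) if value & (1 << k)]

# Every combination of the 4 labels maps to a distinct value.
all_values = sorted(
    encode({name for name, on in zip(LABELS, bits) if on})
    for bits in product([0, 1], repeat=len(LABELS))
)
```

With four labels the consolidated mask can therefore hold 16 distinct values, from 0 (background) up to 15 (all four labels overlapping), not only the powers of two.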

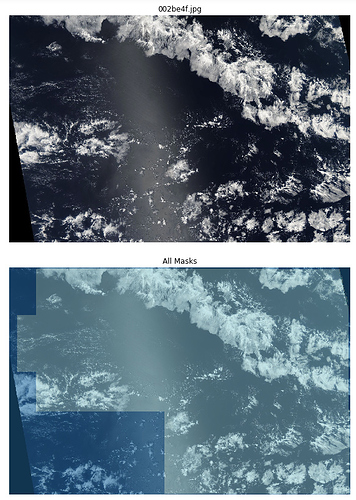

Once I run the above, I have files saved on my server which are basically masks. If I open one of these, here is the output:

Question 1: How can I show differently coloured regions for the different mask values in the show function? I can use cmap, but how do I base it on the different labels?
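One possible direction, sketched outside of Fast AI with NumPy only: look up each mask value in a palette so that every value gets its own colour. The palette, the `colourise` helper and the toy mask are all hypothetical; a real palette would need one row per value that can occur in the saved masks.

```python
import numpy as np

# Hypothetical palette: row i is the RGB colour for mask value i (0 = background).
palette = np.array([
    [0, 0, 0],      # 0: background
    [255, 0, 0],    # 1
    [0, 255, 0],    # 2
    [0, 0, 255],    # 3
], dtype=np.uint8)

def colourise(mask, palette):
    """Map an (H, W) integer mask to an (H, W, 3) RGB image via palette lookup."""
    mask = np.asarray(mask)
    if mask.min() < 0 or mask.max() >= len(palette):
        raise ValueError("mask contains a value with no palette entry")
    return palette[mask]

# Toy mask standing in for one of the saved mask files.
mask = np.array([[0, 1, 1],
                 [2, 2, 3]])
rgb = colourise(mask, palette)
```

The resulting `rgb` array can then be displayed with any image viewer that accepts an (H, W, 3) array, for example matplotlib's `imshow`.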

Next I built get_y_fn as follows:

    get_y_fn = lambda x: mask_new_path/x.name

which returns the Posix path of the consolidated mask image corresponding to the training image.

Next I tried to create my databunch:

    size = size//2
    bs = 16

    src = (SegmentationItemList.from_folder(train_path)
           .split_by_rand_pct(0.2)
           .label_from_func(get_y_fn, classes=[1,2,4,8,16]))

    data = (src.transform(get_transforms(), size=size, tfm_y=True)
            .databunch(bs=bs)
            .normalize(imagenet_stats))

    data.show_batch(2, figsize=(10,7))

And the last line throws an error: cuda runtime error (710) : device-side assert triggered.

I have set the CUDA_LAUNCH_BLOCKING flag to 1, and the traceback points at show_batch.
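For anyone reproducing this, one way to set the flag from inside a notebook is sketched below. It assumes the cell runs before anything has initialised CUDA, so it should come before the torch and fastai imports.

```python
import os

# Make CUDA kernel launches synchronous so the traceback points at the failing call.
# Assumption: nothing has touched the GPU yet in this process.
os.environ["CUDA_LAUNCH_BLOCKING"] = "1"
```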

Googling this error, I realised there could be a problem with my classes. I changed them to 0,1,2,3,4, to 0,2,4,6,8 and to a few other combinations, but the same error is thrown every time.

Question 2: How can I see various classes in my mask across all images?
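A minimal sketch of one way to check this with NumPy: count every pixel value across a collection of masks and flag the values that could not be used as an index into the classes list. The sketch assumes, as far as I understand segmentation targets, that mask values must lie in `range(len(classes))`. The helper names and the two toy masks are hypothetical; in practice each mask would be loaded from a file under mask_new_path.

```python
import numpy as np

def value_counts(masks):
    """Count how often each pixel value occurs across a collection of masks."""
    counts = {}
    for mask in masks:
        values, n = np.unique(np.asarray(mask), return_counts=True)
        for v, c in zip(values.tolist(), n.tolist()):
            counts[v] = counts.get(v, 0) + c
    return counts

def out_of_range(counts, n_classes):
    """Values that cannot serve as an index into a list of n_classes classes."""
    return sorted(v for v in counts if not 0 <= v < n_classes)

# Two tiny made-up masks standing in for the saved mask files.
masks = [np.array([[0, 1], [1, 4]]), np.array([[0, 0], [8, 1]])]
counts = value_counts(masks)
bad = out_of_range(counts, n_classes=5)
```

If `bad` is not empty, the masks contain values that a classes list of that length cannot represent, whatever the entries of the list are.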

I would appreciate it if someone could guide me, as I have been at this for some time and am clueless about how to move forward.

Regards,

Dev.