I just stumbled across the Captum model interpretability library, and it looks really interesting. I don’t have any experience with these interpretability algorithms. Has anyone tried it out already?

Certainly looks interesting! And shouldn’t be too hard to get working with the library

Here is some discussion on a similar topic (SHAP) by @muellerzr

Check here:

Thanks @init_27. Do note, however, that I only ported over the tabular functionality from SHAP; as for images, IMO fastai has better ones.

I’m playing with it right now, will need to read up on some of the algorithms though. Think this might be a valuable callback to add, especially with the captum insights visualizer.

Look into how they ported in Neptune. The long-term plan is to have separate sub-modules we can install and use, and an interpretability submodule with all of them combined would be fantastic! (SHAP, Captum, etc.)

That’s great! Please let us know how the experiments go & if we can help with any part.

Captum has been on my to-try list for a long while.

We’re very keen to see a Captum callback!

Wow, the field of machine learning interpretability is fascinating. I’m quite overwhelmed by all the information, to be honest.

I’ve read these articles from distill.pub:

The Building Blocks of Interpretability

Feature Visualization

Visualizing the Impact of Feature Attribution Baselines

I also went through all the tutorials on the Captum GitHub, and the library seems quite flexible and implements many of the published “Feature Attribution” algorithms (i.e. which parts of the input to a model/layer cause the biggest change in its output).
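To make “feature attribution” a bit more concrete, here is a minimal hand-rolled sketch of integrated gradients in plain PyTorch. It is not Captum's implementation (Captum provides this algorithm as `captum.attr.IntegratedGradients`); the function name `integrated_gradients`, the toy model, and the all-zeros baseline are assumptions made purely for illustration.

```python
import torch
import torch.nn as nn

def integrated_gradients(model, x, baseline, target, steps=50):
    """Midpoint Riemann-sum approximation of integrated gradients (illustrative sketch)."""
    # Interpolation coefficients at the midpoints of `steps` equal slices of [0, 1]
    alphas = (torch.arange(steps, dtype=x.dtype) + 0.5) / steps
    total_grad = torch.zeros_like(x)
    for alpha in alphas:
        # Point on the straight line from the baseline to the actual input
        point = (baseline + alpha * (x - baseline)).detach().requires_grad_(True)
        score = model(point)[:, target].sum()
        grad, = torch.autograd.grad(score, point)
        total_grad += grad
    # Average gradient along the path, scaled by the input difference
    return (x - baseline) * total_grad / steps

torch.manual_seed(0)
# Hypothetical toy model standing in for a real classifier
toy_model = nn.Sequential(nn.Linear(4, 8), nn.Tanh(), nn.Linear(8, 2))
x = torch.randn(1, 4)
baseline = torch.zeros_like(x)  # assumption: an all-zeros baseline

attributions = integrated_gradients(toy_model, x, baseline, target=1)

# Completeness property: attributions should sum (approximately) to
# the difference between the output at x and the output at the baseline.
gap = (toy_model(x)[0, 1] - toy_model(baseline)[0, 1]).item()
print(attributions, attributions.sum().item(), gap)
```

The choice of baseline matters a lot in practice, which is what the “Visualizing the Impact of Feature Attribution Baselines” article above is about.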

Then there is the other important technique mentioned in these articles, “Feature Visualization”, i.e. what input maximizes/minimizes the activation of a specific layer/neuron etc. (DeepDream is an example of this).
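As a rough sketch of the feature visualization idea, the snippet below runs gradient ascent on the input itself so that one output unit of a toy network becomes as large as possible. The helper name `maximize_activation`, the toy model, and the hyperparameters are all assumptions for illustration; real image results usually need extra regularization and transformations, as the Feature Visualization article discusses.

```python
import torch
import torch.nn as nn

def maximize_activation(model, unit, start, steps=100, lr=0.1):
    """Gradient ascent on the input to maximize one output unit (illustrative sketch)."""
    x = start.clone().detach().requires_grad_(True)
    optimizer = torch.optim.Adam([x], lr=lr)  # only the input is optimized
    for _ in range(steps):
        optimizer.zero_grad()
        activation = model(x)[:, unit].mean()
        # Minimizing the negative activation is the same as maximizing the activation.
        # Note: backward() also fills the model's parameter grads, which are simply unused here.
        (-activation).backward()
        optimizer.step()
    return x.detach()

torch.manual_seed(0)
# Hypothetical toy model standing in for a real network
toy_model = nn.Sequential(nn.Linear(4, 8), nn.Tanh(), nn.Linear(8, 2))
start = 0.01 * torch.randn(1, 4)  # small random starting input

with torch.no_grad():
    before = toy_model(start)[0, 0].item()

optimized = maximize_activation(toy_model, unit=0, start=start)

with torch.no_grad():
    after = toy_model(optimized)[0, 0].item()

print(before, after)
```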

I think the challenge here is not writing a callback that exposes these functionalities, but rather selecting sensible and robust defaults/algorithms, as there are so many choices.

I’ll continue reading up on the literature for a bit, and then start experimenting on Imagenette, I think. If anyone comes across interesting blog posts/papers/libraries, please share them here.

There’s also the TensorFlow Lucid library/notebooks, which contain code for the distill articles and more techniques for model visualization and interpretation, as well as a lot more recommended reading, which I’ll definitely check out tomorrow.

Thanks a lot for the article list. I am new to fastai/pytorch but I am super interested in interpretability. Would love to follow what you find as you dig deeper. Hopefully I will be able to get up to speed and try it out myself in due time during the course.

Here is LIME for interpretability. Just sharing in case this helps someone.

Couldn’t find a “saliency maps” implementation using fastai.
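In case it helps, a basic gradient saliency map only needs plain PyTorch: take the gradient of the target class score with respect to the input pixels and keep its magnitude. The sketch below uses a hypothetical toy CNN and a random image; the function name `saliency_map` is made up here, and with fastai you would presumably pass `learn.model` and a normalized image tensor instead (that part is an assumption, not something tested in this thread).

```python
import torch
import torch.nn as nn

def saliency_map(model, image, target):
    """Vanilla gradient saliency for a single (C, H, W) image (illustrative sketch)."""
    model.eval()  # note: this switches the model to eval mode
    image = image.clone().detach().requires_grad_(True)
    score = model(image.unsqueeze(0))[0, target]  # add a batch dimension
    grad, = torch.autograd.grad(score, image)
    # Per-pixel saliency: largest absolute gradient across the colour channels
    return grad.abs().max(dim=0).values

torch.manual_seed(0)
# Hypothetical toy CNN standing in for a trained classifier
toy_cnn = nn.Sequential(
    nn.Conv2d(3, 4, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.AdaptiveAvgPool2d(1),
    nn.Flatten(),
    nn.Linear(4, 2),
)
image = torch.rand(3, 16, 16)  # random stand-in for a real image

smap = saliency_map(toy_cnn, image, target=0)
print(smap.shape)
```

The resulting 2D tensor can be shown as a heatmap over the image, e.g. with matplotlib's `imshow`.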

Now added to the unofficial fastai extensions repository

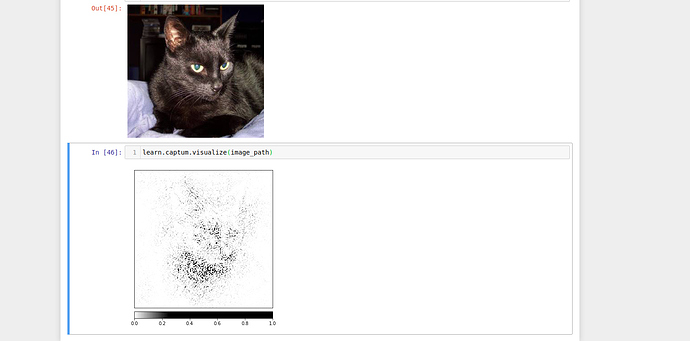

I just tried to create a callback. Not being that experienced with the code, it took some time. I have created one which just uses integrated gradients. It gave good results in interpreting why the model classified an item as Cat or Dog. I know Jeremy or Sylvain will have to spend time refactoring it.

Submitting a PR for this.

Thanks for creating this

I think we should do some more experimenting before proposing it for merging into fastai. I’m reading through the Lucid notebooks just now, and will try to implement some of the functionalities in PyTorch/fastai.

Then together with Captum I think we can create a nice explainability addon.

I’ll share my progress tonight and also create a public git repo if you are interested in collaborating on this (mind, I don’t know much about “model interpretability” yet)

Yes. I am interested. Count me in. I also don’t know much. Just trying it out.

What timezone are you in? Could we discuss this over Zoom if possible? I am in the IST timezone.

I am in the UK, so GMT (5 and a half hours behind you I think).

When do you have time?

I guess your morning time might be a good time to connect. Anytime before 2PM GMT is fine for me…

That sounds great. I have a submission due tomorrow, but Friday or Saturday would be good for me

Saliency maps using FlashTorch