Is .fastai automatically created in the home directory? If it is, is there an option to relocated that? Because, in the system where I work, we have very limited home space and most of the data/models are stored in an external mount.

There’s a confit file where you can redirect those folders, fear not

Thank you very much!  I’m guessing details of how to set these up will be in docs (now or soon).

I’m guessing details of how to set these up will be in docs (now or soon).

Yes it is indeed documented:

Is there a backwards pretrained URLs.WT103 model available yet?

I don’t think so. @sgugger said he’ll work on it in the near future.

Does it mean that we do Multitask Learning when we use n_labels > 1?

Let’s say, my dataset has the following columns (no headers):

- 10 columns which correspond to 10 different classes to which we can classify each document (each label is 0 or 1 so that we could use Sigmoid to predict each), ex.: sad, funny, touching, offensive, well_written, disapproving, interesting, insightful, entertaining, provocative

- 1 column with the full text of the document at the end.

The problem is that each document can incorporate many labels at the same time, which is why Softmax would be a bad choice. I could, of course, train 10 classifiers with Sigmoid output layer separately, but Multitask Learning would be a better option. How could I adapt RNNLearner to reflect this Multitask Problem?

Also, is the following definition of TextDataset and TextClasDataBunch correct for my case?

-

train_ds = TextDataset.from_csv(folder=path, name="train", n_labels = 10) -

data_clas = TextClasDataBunch.from_csv(path=path, train = "train", valid="valid", test="test", vocab = data_lm.train_ds.vocab, bs=32, n_labels = 10)

Thanks a lot in advance!

If you pass multiple labels, the RNNLearner will be adjusted in consequence. As you pointed out it will use sigmoid instead of softmax, but you normally don’t have to do anything.

Thanks a lot! So it is Multitask learning then, correct? because for each label (column) out of all labels defined by n_labels RNNLearner.classifier(data_clas) learns 1 sigmoid classifier, but it is not trained separately, but for all 10 mini-classifiers at once. I am asking because you sad earlier here that we would need to build a custom model for it.

I am also a little confused about the classes argument. If I always define my labels (per column) in a binary fashion, what should I pass to this argument?

- For example:

classes = ['no', 'yes']? - or rather: classes = [sad, funny, touching, offensive, well_written, disapproving, interesting, insightful, entertaining, provocative]

- or can I skip this

classesargument entirely?

Sorry for so many questions at once.

I run through the docs periodically as I git pull commits from the repo. Currently, I ran the IMDB example from the docs. The LM part ran very smoothly but I got an error when I ran the classifier part. In particular, when I called the fit_one_cycle method after creating the RNNLearner.classifier and loading the encoder via load_encoder, I got the following error:

learn.fit_one_cycle(1, 1e-2)

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-25-3ea49add0339> in <module>

----> 1 learn.fit_one_cycle(1, 1e-2)

~/fastai/fastai/train.py in fit_one_cycle(learn, cyc_len, max_lr, moms, div_factor, pct_start, wd, callbacks, **kwargs)

17 callbacks.append(OneCycleScheduler(learn, max_lr, moms=moms, div_factor=div_factor,

18 pct_start=pct_start, **kwargs))

---> 19 learn.fit(cyc_len, max_lr, wd=wd, callbacks=callbacks)

20

21 def lr_find(learn:Learner, start_lr:Floats=1e-7, end_lr:Floats=10, num_it:int=100, stop_div:bool=True, **kwargs:Any):

~/fastai/fastai/basic_train.py in fit(self, epochs, lr, wd, callbacks)

160 callbacks = [cb(self) for cb in self.callback_fns] + listify(callbacks)

161 fit(epochs, self.model, self.loss_func, opt=self.opt, data=self.data, metrics=self.metrics,

--> 162 callbacks=self.callbacks+callbacks)

163

164 def create_opt(self, lr:Floats, wd:Floats=0.)->None:

~/fastai/fastai/basic_train.py in fit(epochs, model, loss_func, opt, data, callbacks, metrics)

92 except Exception as e:

93 exception = e

---> 94 raise e

95 finally: cb_handler.on_train_end(exception)

96

~/fastai/fastai/basic_train.py in fit(epochs, model, loss_func, opt, data, callbacks, metrics)

82 for xb,yb in progress_bar(data.train_dl, parent=pbar):

83 xb, yb = cb_handler.on_batch_begin(xb, yb)

---> 84 loss = loss_batch(model, xb, yb, loss_func, opt, cb_handler)

85 if cb_handler.on_batch_end(loss): break

86

~/fastai/fastai/basic_train.py in loss_batch(model, xb, yb, loss_func, opt, cb_handler)

16 if not is_listy(xb): xb = [xb]

17 if not is_listy(yb): yb = [yb]

---> 18 out = model(*xb)

19 out = cb_handler.on_loss_begin(out)

20

/net/vaosl01/opt/NFS/sw/anaconda3/envs/mer-su0/lib/python3.7/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

475 result = self._slow_forward(*input, **kwargs)

476 else:

--> 477 result = self.forward(*input, **kwargs)

478 for hook in self._forward_hooks.values():

479 hook_result = hook(self, input, result)

TypeError: forward() takes 2 positional arguments but 927 were given

Please note that I just followed the docs directly without any modifications.

If you pass n_labels = 10, the model should have a last linear layer with 10 outputs and loss function that is binary cross entropy with logits (so sigmoid + cross entropy). I say should because it’s untested.

The classes should be the names of each of your label, so the second solution you offered, but you can skip it entirely.

If AWD-LSTM is the basic Langugae Model in fastai_v1, is there an equivalent for sequence tagging/entity recognition in fastai_v1?

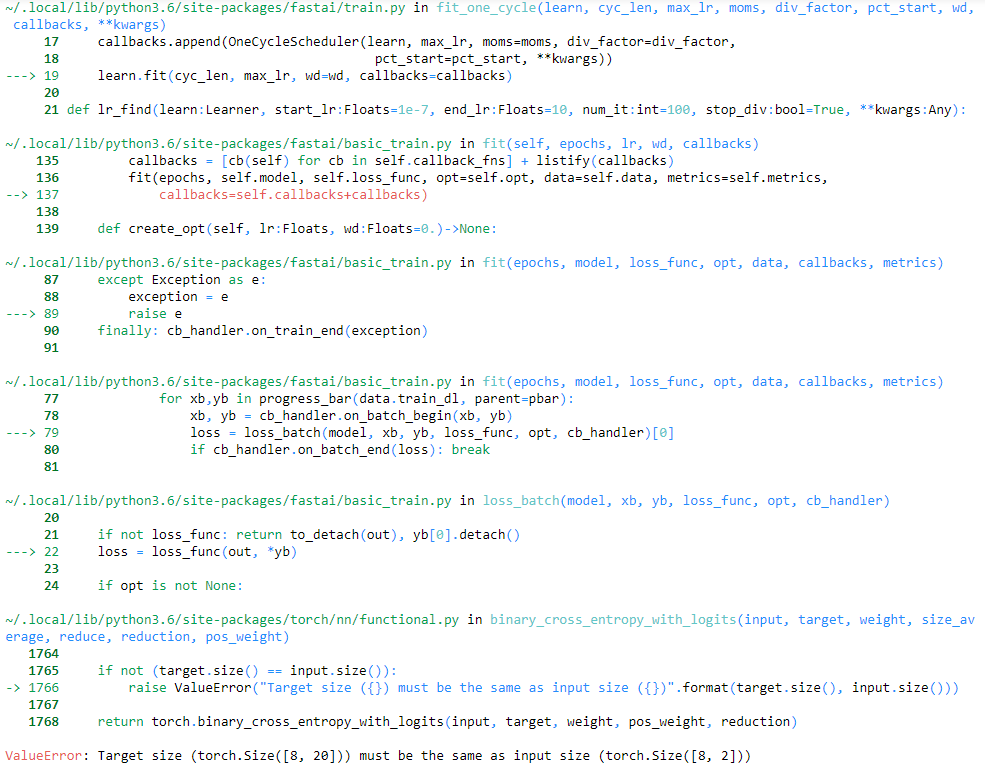

Thank you very much sgugger! I tested this on my dataset (in fact, I have 20 labels, not 10).

I was able to train LM without problems, but when I train RNNLearner.classifier I get the following error:

-

ValueError: Target size (torch.Size([8, 20])) must be the same as input size (torch.Size([8, 2]))when I set the batch size to 16. -

ValueError: Target size (torch.Size([16, 20])) must be the same as input size (torch.Size([16, 2]))when I set the batch size to 32.

I ran this twice:

- With dummy label column set to

[0]*len(df): link to my GitHub file - Without any dummy column: link to my GitHub file

Am I doing something wrong? And which version is better - 1 or 2? It looks like it would work only if there are just 2 outputs in the last linear layer for the classifier, cause now it cannot match input with the size of 2 and output (target) of the classifier with the size of 20.

Here is also a screenshot:

Btw., I use fastai version 1.0.14 (in new 1.0.15

TextDataset doesn’t even have the method from_csv())

I would appreciate any advice!

It’s fixed now. Someone had changed the way DataBunch handles collate_fn so it broke down this part.

@annanana: this was fixed in 1.0.15 - RNNLearner.classifier should work with a multilabel dataset

https://github.com/fastai/fastai/blob/master/CHANGES.md#1015-2018-10-28

This was purposefully done - to reduce redundancy b/w from_csv() and from_df() - you should be using a TextDataBunch here (and access the whatever parts of the dataset you need from the data_bunch)

Please note a lot of changes in master in NLP, all detailed here.

Code for creating TextLMDataBunch that was working before gives an error now:

data_lm = TextLMDataBunch.from_df(PATH, train_df=train, valid_df=valid, max_vocab=60_091, min_freq=2,\

text_cols=['name', 'item_description'], label_cols=['labels'])

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<timed exec> in <module>

~/fastai/fastai/text/data.py in from_df(cls, path, train_df, valid_df, test_df, tokenizer, vocab, classes, text_cols, label_cols, label_delim, **kwargs)

341 "Create a `TextDataBunch` from DataFrames."

342 dfs = [train_df, valid_df] if test_df is None else [train_df, valid_df, test_df]

--> 343 src = TextSplitData(path, *[TextLabelList.from_df(path, df, text_cols, label_cols, label_delim) for df in dfs])

344 return cls.create_from_split_ds(src.datasets(classes=classes), **kwargs)

345

~/fastai/fastai/text/data.py in <listcomp>(.0)

341 "Create a `TextDataBunch` from DataFrames."

342 dfs = [train_df, valid_df] if test_df is None else [train_df, valid_df, test_df]

--> 343 src = TextSplitData(path, *[TextLabelList.from_df(path, df, text_cols, label_cols, label_delim) for df in dfs])

344 return cls.create_from_split_ds(src.datasets(classes=classes), **kwargs)

345

~/fastai/fastai/data_block.py in from_df(cls, path, df, input_cols, label_cols, label_delim)

134 """

135 inputs, labels = _extract_input_labels(df, input_cols, label_cols, label_delim=label_delim)

--> 136 return cls.from_lists(path, inputs, labels)

137

138 @classmethod

~/fastai/fastai/data_block.py in from_lists(cls, path, inputs, labels)

157 "Create a `LabelDataset` in `path` with `inputs` and `labels`."

158 inputs,labels = np.array(inputs),np.array(labels)

--> 159 return cls(np.concatenate([inputs[:,None], labels[:,None]], 1), path)

160

161 def split_by_list(self, train, valid):

ValueError: all the input arrays must have same number of dimensions

Also after the tokenization process I use data.save passing in a folder name so that it creates it there (other than tmp). Now after this process, when I start a new session, how do I get a TextLMDataBunch object without the tokenization process running again. It seems to run because I have the tokens stored in a folder other than tmp I think. Essentially, I would just like to get an object and then use data.load passing in the folder name to load the tokens and vocab etc.

Hello to everyone. Im looking for some advices. How should I organize my data and load it to TextClasDataBunch in case of multi (21) label probability classification (so, as result of classification I want to recieve list with length equal to 21)? Now I have a csv file contains one column with input text and 21 columns with label data - one float number for each column. Tried to create TextClasDataBunch for multilabel classification and got next error:

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<ipython-input-47-a3e7a9fb19b4> in <module>()

1

----> 2 data_clas = TextClasDataBunch.from_csv(path=r'drive/Colab Notebooks', csv_name='plot_plus_emo_coord.csv', vocab=new_data_lm.train_ds.vocab, bs=32, header=None, text_cols=0, label_cols=[i for i in range(1, 22)])

3 # data_clas.show_batch()

4 # data_clas.

5

/usr/local/lib/python3.6/dist-packages/fastai/text/data.py in from_csv(cls, path, csv_name, valid_pct, test, tokenizer, vocab, classes, header, text_cols, label_cols, label_delim, **kwargs)

355 test_df = None if test is None else pd.read_csv(Path(path)/test, header=header)

356 return cls.from_df(path, train_df, valid_df, test_df, tokenizer, vocab, classes, text_cols,

--> 357 label_cols, label_delim, **kwargs)

358

359 @classmethod

/usr/local/lib/python3.6/dist-packages/fastai/text/data.py in from_df(cls, path, train_df, valid_df, test_df, tokenizer, vocab, classes, text_cols, label_cols, label_delim, **kwargs)

341 "Create a `TextDataBunch` from DataFrames."

342 dfs = [train_df, valid_df] if test_df is None else [train_df, valid_df, test_df]

--> 343 src = TextSplitData(path, *[TextLabelList.from_df(path, df, text_cols, label_cols, label_delim) for df in dfs])

344 return cls.create_from_split_ds(src.datasets(classes=classes), **kwargs)

345

/usr/local/lib/python3.6/dist-packages/fastai/text/data.py in <listcomp>(.0)

341 "Create a `TextDataBunch` from DataFrames."

342 dfs = [train_df, valid_df] if test_df is None else [train_df, valid_df, test_df]

--> 343 src = TextSplitData(path, *[TextLabelList.from_df(path, df, text_cols, label_cols, label_delim) for df in dfs])

344 return cls.create_from_split_ds(src.datasets(classes=classes), **kwargs)

345

/usr/local/lib/python3.6/dist-packages/fastai/data_block.py in from_df(cls, path, df, input_cols, label_cols, label_delim)

134 """

135 inputs, labels = _extract_input_labels(df, input_cols, label_cols, label_delim=label_delim)

--> 136 return cls.from_lists(path, inputs, labels)

137

138 @classmethod

/usr/local/lib/python3.6/dist-packages/fastai/data_block.py in from_lists(cls, path, inputs, labels)

157 "Create a `LabelDataset` in `path` with `inputs` and `labels`."

158 inputs,labels = np.array(inputs),np.array(labels)

--> 159 return cls(np.concatenate([inputs[:,None], labels[:,None]], 1), path)

160

161 def split_by_list(self, train, valid):

ValueError: all the input arrays must have same number of dimensionsI have a similar problem. When I try using TextLMDataBunch I get the error NameError: name 'TextLMDataBunch' is not defined. I have uninstalled and installed fastai v1 but i still get the same error. I am working in collab

How do you install and import needed libs to collab?