Natural Language to SQL

I’m interested in creating a model for natural language (English) to SQL translation.

Motivation:

Imagine being able to take any SQL database, or a CSV file (which you can load into SQLite to make it queryable), ask it questions in plain English, and get useful answers back in the form of query results.
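
To make the CSV case concrete, here is a minimal sketch using only Python's standard library. The helper names (quote_ident, load_csv), the table name, and the sample data are made up for illustration, and every column is stored as untyped text:

```python
import csv
import io
import sqlite3


def quote_ident(name):
    """Quote a table or column name so spaces and odd characters are safe."""
    return '"{}"'.format(name.replace('"', '""'))


def load_csv(conn, table, csv_file):
    """Copy the rows of an open CSV file into a new SQLite table."""
    reader = csv.reader(csv_file)
    header = next(reader)  # first row is assumed to hold the column names
    columns = ", ".join(quote_ident(name) for name in header)
    placeholders = ", ".join("?" for _ in header)
    conn.execute("CREATE TABLE {} ({})".format(quote_ident(table), columns))
    conn.executemany(
        "INSERT INTO {} VALUES ({})".format(quote_ident(table), placeholders),
        reader,
    )
    conn.commit()


# Hypothetical sample data standing in for a real CSV file.
sample = io.StringIO("name,city,age\nAda,London,36\nGrace,New York,45\n")
conn = sqlite3.connect(":memory:")
load_csv(conn, "people", sample)

# "Who lives in London?" written by hand as SQL; the model's job would be
# to produce this query from the English question.
rows = conn.execute("SELECT name FROM people WHERE city = 'London'").fetchall()
print(rows)
```

Once the data is in SQLite, the rest of the problem is the same for a CSV as for any other database.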

Problem:

Writing a useful query requires familiarity with the database schema, in addition to experience with writing SQL (it’s a different way of thinking).

How the problem can be constrained:

- The vocab (and thus output) will only ever be SQL keywords and unique members of the schema (column names, table names, views, database names, etc.).

- The input can be constrained to only ever be a question.

- The solution can be tested for syntactic validity automatically (you can run the query and see whether it executes without error).

- The problem can first require exact column / table names in the input, and then be expanded to look for similar names using a combination of WordNet and fuzzy search.

- There is a lot of depth to the problem, and even solving subsets can be very useful. For example: first only outputting select _ from _, then allowing where clauses, then allowing aggregations, then allowing basic functions such as sorting, then allowing joins and nesting, etc.
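
A few of the constraints above can be sketched with Python's standard library. This is only a rough illustration: the function names are hypothetical, the keyword list is a tiny subset, difflib stands in for fuzzy search, and WordNet synonym lookup is not covered at all.

```python
import difflib
import sqlite3

# A small illustrative subset of SQL keywords, not a complete list.
SQL_KEYWORDS = ["SELECT", "FROM", "WHERE", "AND", "OR", "COUNT", "ORDER", "BY"]


def schema_names(conn):
    """Return (tables, columns) found in a SQLite database."""
    tables = [row[0] for row in conn.execute(
        "SELECT name FROM sqlite_master WHERE type IN ('table', 'view')")]
    columns = []
    for table in tables:
        quoted = '"{}"'.format(table.replace('"', '""'))
        # PRAGMA table_info yields one row per column; index 1 is its name.
        for row in conn.execute("PRAGMA table_info({})".format(quoted)):
            columns.append(row[1])
    return tables, columns


def is_valid(conn, query):
    """True if SQLite can compile the query against this schema."""
    try:
        # EXPLAIN compiles the statement without running it.
        conn.execute("EXPLAIN " + query)
        return True
    except sqlite3.Error:
        return False


def resolve(word, names, cutoff=0.7):
    """Map a word from the question to the closest schema name, or None."""
    lowered = {name.lower(): name for name in names}
    match = difflib.get_close_matches(
        word.lower(), list(lowered), n=1, cutoff=cutoff)
    return lowered[match[0]] if match else None


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE people (name, city, age)")

tables, columns = schema_names(conn)
vocab = SQL_KEYWORDS + tables + columns  # the constrained output vocabulary

print(vocab)
print(is_valid(conn, "SELECT name FROM people"))
print(is_valid(conn, "SELECT nope FROM people"))
print(resolve("Ages", columns))
```

The validity check only shows that a query compiles against the schema, not that it answers the question, so it works as a cheap filter on model output rather than as a measure of correctness.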

There really isn’t a very good existing model:

The papers I’ve skimmed really aren’t that impressive. They basically just combine a bidirectional encoder, teacher forcing, and attention, with word2vec and GloVe embeddings. We know how to do that.

I think the biggest limiting factor is the quality of the dataset. WikiSQL is a great step in the right direction, and I believe it will be the dataset to use, in addition to WordNet.

Any interest?

Let me know if anyone is interested in working on this problem!

Resources

Datasets (GitHub Repos):

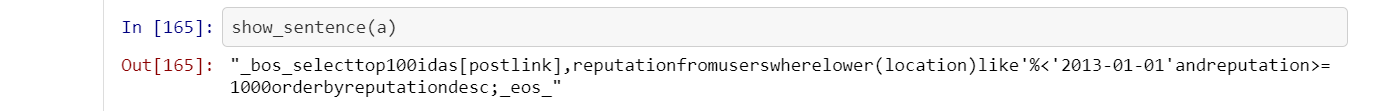

WikiSQL: English to SQL + Schema

SENLIDB: English to SQL

Papers / Models:

Neural Translation

Seq2SQL -> Review (it was rejected)

SQLNet -> PyTorch Implementation