Hi all! I decided to make this post as there is not much information into this here on the forums (or really anywhere) and I wanted to share what I had found, in hopes it may help improve your tabular projects.

While exploring various tabular-related problems I began to wonder what the right hidden layer size really was. In the ADULT’s notebook, Jeremy uses [200,100] without much explanation as to why, it just works. In hoping to find a more equation-esq answer, I found this stackoverflow post: https://stats.stackexchange.com/questions/181/how-to-choose-the-number-of-hidden-layers-and-nodes-in-a-feedforward-neural-netw

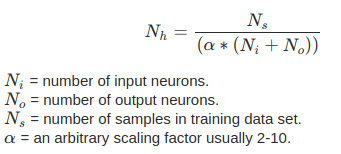

Within it is found this formula:

As the guide suggests, Ni is your amount of input variables, No is the output amount, Ns is our training set size, and a is an alpha value. From here, I usually pass in to layers an array of this output divided by 2, splitting the total hidden amount into two separate layers. Here is my function for doing so:

def calcHiddenLayer(data, alpha, numHiddenLayers):

tempData = data.train_ds

i, o = len(tempData.x.classes), len(tempData.y.classes)

io = i+o

return [(len(data.train_ds)//(alpha*(io)))//numHiddenLayers]*numHiddenLayers

For example, having an alpha value of 3 on the ADULT’s dataset, I achieved an accuracy between 84.5-85% within 5 epochs. If anyone has questions on this please do not hesitate, I had found success with this method and wanted to share it with others. This is absolutely not a be all tell all answer to what the best hidden-layer sizes actually are, but this is what I have found within my research.

If you wish to see the notebook, it is found here:

Thank you for reading!

Zach

Edit: I simplified the above function to only need to pass in the databunch, the alpha, and the number of layers

knowing how to calculate hidden layers has always been a dark art to me. Now I can at least bring a bit more clarity to the subject.

knowing how to calculate hidden layers has always been a dark art to me. Now I can at least bring a bit more clarity to the subject.