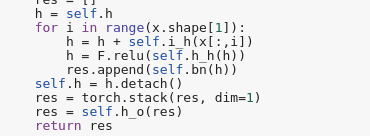

I found in NLP lectures that we are using x[:, i] as input instead of x[i] when we want to predict the next number, though, numbers are arranged in x[i] order. Why are we doing so?

Hi @rachel,

I was going through the chapter on seq2seq translation.

I wanted to request you to upload the final ‘questions_easy.csv’ (which is used to creating the final databunch) to the S3 datalake where fastai has hosted all the other datasets.

The problem is creating the datasets uses a lot of resources. I have tried running it on Colab and a couple of other machines but wasn’t able to. It would be tremendously helpful if the ready dataset is hosted somewhere.

Thanks in advance.

Thank you for the wonderful course as well!

Regards

A post was split to a new topic: Remote NLP Study Group Saturdays at 8 AM PST, starting 12/14/2019

@chatuur

Hi i downloaded a sentiment analysis dataset, a movie review dataset.

The structure of dataset is like this:

1st column: Movie ID

2nd column: movie review (text in english)

3rd column: ratings (numbers in decimal from 0 to 5)

so to predict the rating this has to be a tabluar model but for the model to interpret what the review means, another language model has to created and has to be used with the tabular data.

How do i do that ?

Thanks,

Hi @AjayStark, have a look at @quan.tran’s helpful post & github on mixed tabular & text, it worked well for me:

Hii, Thank you. I’ll Look into it

Hi @AjayStark ,

If the task is just sentiment analysis that can be done using only the text_classifier.

The example below is an example of if you have both text as well as tabular data.

For sentiment analysis there are two possible ways you can achieve this:

(a) Classification:

You can treat each rating as a separate category. So the categories you will have are 0,1,2,3,4,5. And you can train a simple classifier. Comparing this to the example from Jeremy’s course. In the course, there were two classes: pos and neg. You have 6 classes here.

(b) Regression:

Instead of treating the ratings as separate classes you can treat them as a continuum. This is covered in bits and pieces/ inspired from the fastai.collab module. First, let’s see what’s happening in the collab module. The concept of ratings can be adopted directly. If the ratings are between 0-5 we first normalize them to 0-1. The main difference comes here, instead of training using the CrossEntropy loss which is used for classification tasks, the MSE loss function is used. The same can be done here.

You can check out the implementation of the collab module that you need to look at here. Checkout how the y_range is being used for the normalization.

So you take a text_classifer module, normalize the ratings. One point to add here is Jeremy in his lectures mentions from practical experience when using ratings between 0-5 normalize them between 0-5.5 because it’s a bit tricky for the model to predict values at the extremities. Then use the MSELoss to train the module.

Hope this helps.

EDIT: I’ve never experimented with sentiment analysis. Thus I cannot say which approach will work better. In my opinion having been through Jeremy’s course I think MSE loss should work better. However expeirments should be relied upon rather than intuition.

Notice of Serendipity If You Want To Learn NLP!

Notice of Serendipity If You Want To Learn NLP!

Here’s a great opportunity if you plan to participate in the upcoming TWiML AI x Fastai Study Group for the course A Code-first Introduction to Natural Language Processing.

This Saturday, we will begin a three-week introduction to NLP from Lesson 12 of Deep Learning From the Foundations. Though not a required prerequisite, for the Fastai NLP course, these three sessions should provide a great introduction to and overview of NLP!

Time: 8:30 AM Pacific Time on Saturdays Nov 23rd, Nov 30th, and Dec 7th.

Place: In this Zoom chatroom.

Stay tuned on this channel for an announcement the details of this Saturday’s meeting!

The TWiML AI x Fastai NLP Study Group will meet Saturdays, beginning Dec. 14th, at 8:00 AM, a week after completion of the 3-part mini-review.

Issue: Preemptible GCP instances

Hi,

I’m a complete beginner to ML ( and programming, sort of), and am doing this course.

I am using GCP free credits, preemtible instance as per the instructions in the fastai documentation.

When I try to run train the IMDB full set after loading the wikitext model, the instances keeps

getting shut down at various stages of the training.

What happens to my model / data when I restart?

Is it possible to restart the training from where it was left off? I have been through this cycle about four times, and I am getting sisyphus nightmares.

Besides deleting this instance and starting a non-premtible one, is there a safe way to do this?

Thank you

Zero

The Deep Learning From the Foundations Study Group

will meet Saturday Nov 23rd, at 8:30 AM Pacific Time

Topic: Lesson 12, Text Data Preprocessing

Suggested Homework/preparation:

-

Watch the Lesson 12 video from 1:15:10 to 1:45:17

-

Familiarize with the notebook

12_text.ipynb

Join the Zoom Meeting when it’s time

To join via phone

Dial US: +1 669 900 6833 or +1 646 876 9923

Meeting ID: 832 034 584

The current meetup schedule is here

Sign up at Sam Charrington’s TWiML & AI x fast.ai Slack Group to receive meetup announcements via email

Hi @zerochi,

If you’re talking about Colab then there’s no way to retain the instance.

But what you can do is connect the colab notebook to your drive and save databunches/models which can then be reused.

Stackoverflow answer is here

Essentially it’s this:

from google.colab import drive drive.mount('/content/drive',force_remount=True)

And just another tip, change the runtime instacne to GPU. It’s not GPU by default.

Runtime → Change runtime type

Second, if you somehow manage to crash the notebook due to excessive consumption of RAM Colab automatically suggest upgrading your RAM to 25GB.

Yes, Colab is made for short experiments any long standing process that you leave on and close the notebook will eventually be stopped.

There are a couple of ‘tricks’ JS script to click on the Connect button which apparently hepls in keeoing it connected. But I can’t find it right now.

Regards

Trying to reproduce the NLP transfer learning from nn-imdb-more.ipynb on Google Colab.

After creating data_lm with my own data and checking the length of vocab.itos and vocab.stoi I’m getting 18744 and 73037 which is ok.

But after data_lm.save('lm_databunch') and data_lm = load_data(path, 'lm_databunch', bs=bs) and checking again vocab.itos and vocab.stoi – now I’m getting 18744 and 18742.

How is this possible? Or is this my mistake?

Thank you. I am not running this on colab, but on google cloud hosting. on google cloud I created a preemptible compute engine. I run jupyter notebooks on this compute engine.

My current understanding is that the data is gone, because when training is interrupted, that’s what happens.

Regards

ero

Hi @zerochi. Save your model every epoch (or whenever you like) during training with:

SaveModelCallback

Like this at least you don’t lose too much when your instance gets preempted/interrupted.

Thank you! this fixes my issue.

How can I use this Vietnamese notebook course to create next word predictor.

I have the .pth model and the vocab as .pkl in Serbian language created.

Now, I don’t plan to learn any more:

learn_lm = language_model_learner(data_lm,

AWD_LSTM,

pretrained_fnames=lm_fns,

drop_mult=1.0)

I would like to predict. Is there any notebook that shows how to do that?

I guess it is here. The predict method looks good.

During our discussion of the paper

The Curious Case of Neural Network Degeneration in connection with Lesson #14, we wondered

"How does the temperature parameter affect the distribution of word probabilities?"

I made a Jupyter notebook in which I empirically answer the above question and also discuss the decoding strategies mentioned in the paper.

Hello @pierreguillou , I think you can save your money for small tasks by using google colab workspace

target[:,-1])

: is all the ‘vertical’ rows (each row has bptt tokens)

-1 is the last token of each row

So for the whole batch (bs rows), the last word is the target. Think that when working with Tensors, most probably there is always a batch dimension (even if the batch has only one element).

Later in the lesson the model is meant to predict all the words expect the first one (with 1 > predict 2, with 1 and 2 > predict 3, …) but in this moment we were taking:

input : words 1…n-1

target: word n