
This is a forum wiki thread, so you all can edit this post to add/change/organize info to help make it better! To edit, click on the little pencil icon at the bottom of this post. Here’s a pic of what to look for:


General resource

Lesson resources

- Lesson video

- Lesson notes from @hiromi

- Lecture Notes from @timlee

- Annotated notebook (thanks to @amritv)

- Kaggle Kernel for lesson 2

- Skeleton notebook (fastai’s markdown with some code blocks removed to encourage coding along)

Video timelines for Lesson 2

- 00:01:01 Lesson 1 review, image classifier, PATH structure for training, learning rate, what are the four columns of numbers in “A Jupyter Widget”

- 00:04:45 What is a Learning Rate (LR), LR Finder, mini-batch, ‘learn.sched.plot_lr()’ & ‘learn.sched.plot()’, ADAM optimizer intro

- 00:15:00 How to improve your model with more data, avoid overfitting, use different data augmentation ‘aug_tfms=’

- 00:18:30 More questions on using Learning Rate Finder

- 00:24:10 Back to Data Augmentation (DA), ‘tfms=’ and ‘precompute=True’, visual examples of layer detection and activation in networks pre-trained on ImageNet. Difference between your own computer or AWS, and Crestle.

- 00:29:10 Why use ‘learn.precompute=False’ for Data Augmentation, impact on Accuracy / Train Loss / Validation Loss

- 00:30:15 Why use ‘cycle_len=1’, learning rate annealing, cosine annealing, Stochastic Gradient Descent (SGD) with Restart approach, Ensemble; “Jeremy’s superpower”

- 00:40:35 Save your model weights with ‘learn.save()’ & ‘learn.load()’, the folders ‘tmp’ & ‘models’

- 00:42:45 Question on training a model “from scratch”

- 00:43:45 Fine-tuning and differential learning rate, ‘learn.unfreeze()’, ‘lr=np.array()’, ‘learn.fit(lr, 3, cycle_len=1, cycle_mult=2)’

- 00:55:30 Advanced questions: “why do smoother surfaces correlate to more generalized networks?” and more.

- 01:05:30 “Is the Fast.ai library used in this course, on top of PyTorch, open-source?” and why Fast.ai switched from Keras+TensorFlow to PyTorch, creating a high-level library on top.

PAUSE

- 01:11:45 Classification matrix ‘plot_confusion_matrix()’

- 01:13:45 Easy 8 steps to train a world-class image classifier

- 01:16:30 New demo with Dog_Breeds_Identification competition on Kaggle, download/import data from Kaggle with ‘kaggle-cli’, using CSV files with Pandas. ‘pd.read_csv()’, ‘df.pivot_table()’, ‘val_idxs = get_cv_idxs()’

- 01:29:15 Dog_Breeds initial model, image_size = 64, CUDA Out Of Memory (OOM) error

- 01:32:45 Undocumented Pro-Tip from Jeremy: train on a small size, then use ‘learn.set_data()’ with a larger data set (like 299 over 224 pixels)

- 01:36:15 Using Test Time Augmentation (‘learn.TTA()’) again

- 01:39:30 Question about the difference between ‘precompute=True’ and ‘unfreeze’.

- 01:48:10 How to improve a model/notebook on Dog_Breeds: increase the image size and use a better architecture. ResneXt (with an X) compared to Resnet. Warning for GPU users: the X version can use 2-4 times the memory, so you need to reduce Batch_Size to avoid an OOM error

- 01:53:00 Quick test on Amazon Satellite imagery competition on Kaggle, with multi-labels

- 01:56:30 Back to your hardware deep learning setup: Crestle vs Paperspace, and AWS, which gave approx $200,000 of computing credits to Fast.ai Part1 V2. More tips on setting up your AWS system as a Fast.ai student, Amazon Machine Image (AMI), ‘p2.xlarge’, ‘aws key pair’, ‘ssh-keygen’, ‘id_rsa.pub’, ‘import key pair’, ‘git pull’, ‘conda env update’, and how to shut down your $0.90 an hour instance with ‘Instance State => Stop’
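For anyone puzzling over the ‘learn.fit(lr, 3, cycle_len=1, cycle_mult=2)’ call from the 00:43:45 entry: here is a rough, library-free sketch of what cosine annealing with restarts looks like under those settings. It is not the fastai implementation. The function names `sgdr_schedule` and `total_epochs`, the `batches_per_epoch` value, and the base learning rate of 0.01 are all made up for illustration. The assumption is that each cycle starts back at the base learning rate, decays along a half cosine, and that each cycle is `cycle_mult` times longer than the previous one.

```python
import math


def sgdr_schedule(base_lr, n_cycles, cycle_len=1, cycle_mult=1, batches_per_epoch=4):
    """Return one learning rate per mini-batch for cosine annealing with restarts.

    Illustrative only: the names and the batches_per_epoch default are assumptions,
    not part of the fastai API.
    """
    lrs = []
    epochs_in_cycle = cycle_len
    for _ in range(n_cycles):
        n_batches = epochs_in_cycle * batches_per_epoch
        for i in range(n_batches):
            # Half-cosine decay from base_lr toward 0 over the cycle
            lrs.append(base_lr * 0.5 * (1 + math.cos(math.pi * i / n_batches)))
        # The next cycle restarts at base_lr and lasts cycle_mult times longer
        epochs_in_cycle *= cycle_mult
    return lrs


def total_epochs(n_cycles, cycle_len=1, cycle_mult=1):
    """Total number of epochs trained across all cycles."""
    return sum(cycle_len * cycle_mult ** k for k in range(n_cycles))


schedule = sgdr_schedule(0.01, n_cycles=3, cycle_len=1, cycle_mult=2)
print(total_epochs(3, cycle_len=1, cycle_mult=2))  # cycles of 1 + 2 + 4 epochs
print(len(schedule))                               # one entry per mini-batch
```

With 3 cycles, cycle_len=1 and cycle_mult=2, the cycles last 1, 2 and 4 epochs, so the call trains for 7 epochs in total, and the learning rate jumps back up to the base value at the start of each cycle.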

AWS:

AWS fastami GPU Image Setup - detailed write-up

You can search for the AMI by name: fastai-part1v2-p2. You must choose one of the regions below (use the region selector at the top right of the AWS console). Choose the region closest to where you are working from. The AMI IDs are:

- Oregon: ami-8c4288f4

- Sydney: ami-39ec055b

- Mumbai: ami-c53975aa

- N. Virginia: ami-c6ac1cbc

- Ireland: ami-b93c9ec0

The fastai repo is available in your home directory, in the fastai folder. The dogscats dataset is already there for you, and the data folder is linked to ~/data.

Accessing the repo

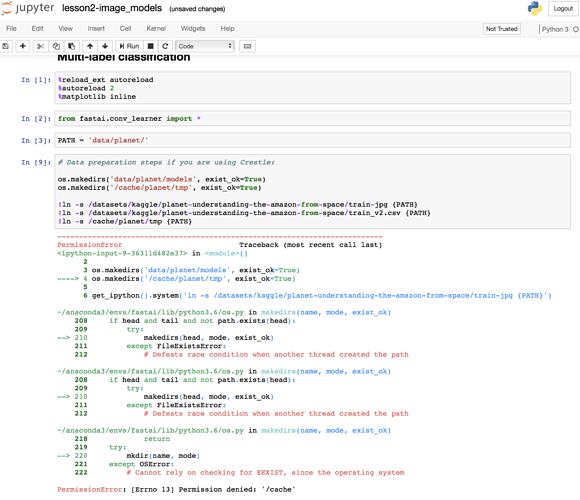

Crestle

If you created your Crestle account in the last week, you’ve already got the current repo in the data/fastai2/ folder.

If you created a Crestle account early (e.g. at the workshop) then the latest fastai repo wasn’t included in your account, so you’ll need to grab it (if you haven’t already) by doing:

git clone https://github.com/fastai/fastai.git

Updating the repo

Regardless of whether you’re using Crestle, AWS, or something else, you need to update the repo to make sure you have the latest code. From the repo folder, type:

git pull
