Beginner here. I'm running the example code from the chapter 1 intro, and I'm confused about why running it on my Mac (with a Jupyter or VS Code kernel) gives an accuracy of 0.00000 and noticeably different training results than running the exact same code on Kaggle. Here's the code and screenshots of the differences:

    from fastai.tabular.all import *

    path = untar_data(URLs.ADULT_SAMPLE)

    dls = TabularDataLoaders.from_csv(path/'adult.csv', path=path, y_names="salary",
        cat_names = ['workclass', 'education', 'marital-status', 'occupation',
                     'relationship', 'race'],
        cont_names = ['age', 'fnlwgt', 'education-num'],
        procs = [Categorify, FillMissing, Normalize])

    learn = tabular_learner(dls, metrics=accuracy)
    learn.fit_one_cycle(3)

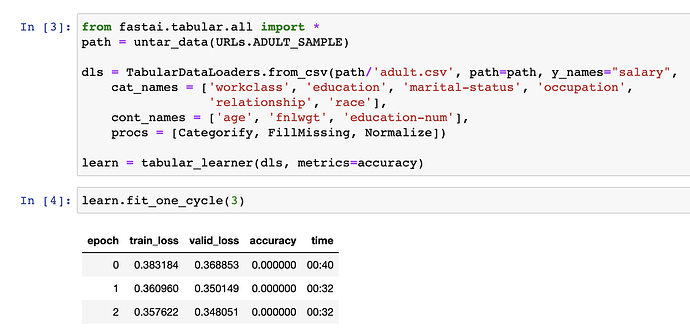

Local Jupyter

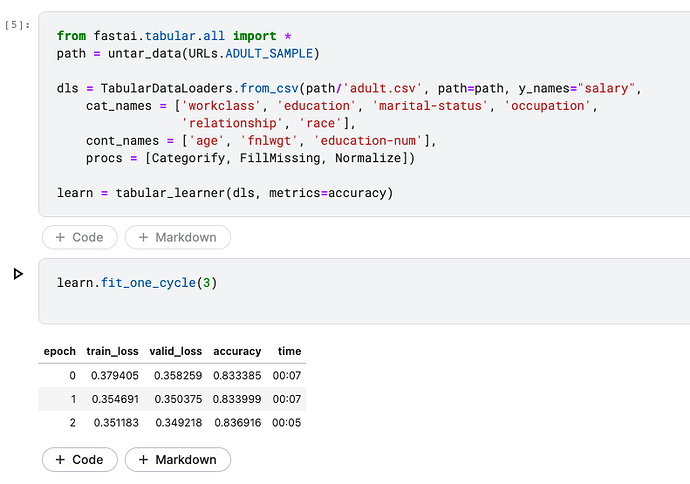

Kaggle

As you can see, the accuracy is 0.00 for every epoch when I train locally, while on Kaggle it is not.

What am I missing?
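
In case this comes down to a version mismatch between the two environments, here is a small sketch I can run both locally and on Kaggle to compare what is installed. It only uses the Python standard library. The package list is my own guess at what might be relevant (it is not from the book), and `installed_versions` and `environment_report` are just helper names I made up for this post.

```python
from importlib import metadata
import platform
import sys

# Assumption: these are the packages most likely to matter here.
# The list is a guess on my part, not something taken from the book.
PACKAGES = ["fastai", "fastcore", "torch", "pandas", "numpy"]


def installed_versions(packages=PACKAGES):
    """Return {package: version} for this environment, or 'not installed'."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = "not installed"
    return versions


def environment_report():
    """Collect the Python version, platform string, and package versions."""
    report = {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
    }
    report.update(installed_versions())
    return report


if __name__ == "__main__":
    # Print one "name: version" line per entry so the two outputs can be diffed.
    for key, value in environment_report().items():
        print(f"{key}: {value}")
```

I can paste the output from both environments if that would help narrow things down.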