I am currently working through part 1 of the FastAI deep learning course, and I tried to replicate the DogsBreed Classifier on FastAI v1.

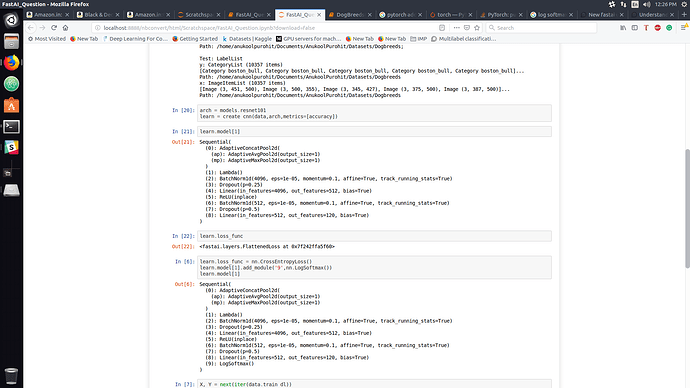

The first thing I noticed is that the function create_cnn doesn’t automatically add the final softmax layer needed for multiclass classification. Second, the default loss function is FlatLoss.

So I edited the model to add an extra log softmax layer and set CE Loss as the loss function.

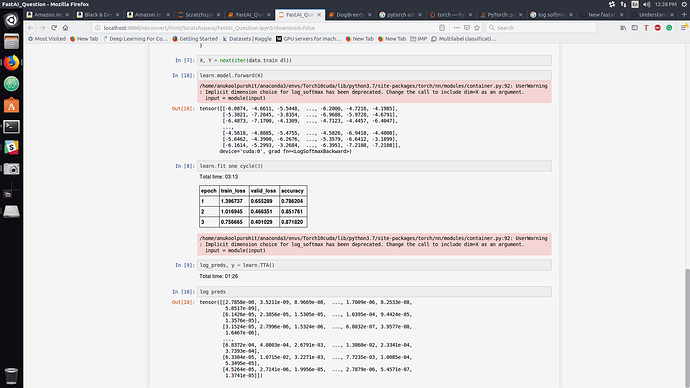
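To make clear what this combination computes, here is a minimal sketch in plain Python (no fastai or PyTorch involved; `log_softmax` and `cross_entropy` are helper names made up for illustration). It assumes the CE loss applies a log softmax internally, as PyTorch's `nn.CrossEntropyLoss` does, so with the extra layer the log softmax effectively runs twice.

```python
import math

def log_softmax(logits):
    # Numerically stable log softmax: x_i - log(sum_j exp(x_j)).
    m = max(logits)
    lse = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - lse for x in logits]

def cross_entropy(logits, target):
    # Cross entropy for one sample: negative log softmax at the target index.
    return -log_softmax(logits)[target]

logits = [2.0, -1.0, 0.5]   # made-up raw scores for 3 classes
target = 0

loss_raw = cross_entropy(logits, target)                  # CE on raw logits
loss_extra = cross_entropy(log_softmax(logits), target)   # CE after an extra log softmax layer

print(loss_raw, loss_extra)
```

Under that assumption the two losses come out the same, because the exponentials of a log softmax output already sum to 1, so applying log softmax a second time leaves the values unchanged.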

After this change, the raw output from the model is indeed negative, as expected, but the log_preds I get from TTA are positive.
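As a sanity check on the math, here is a small sketch in plain Python (again not fastai code; the helper names and input values are made up). It shows that log softmax values can never be positive, while running a softmax over them gives values between 0 and 1. Whether TTA actually applies such an activation to the model output is only my guess, not something I have confirmed.

```python
import math

def log_softmax(logits):
    # Numerically stable log softmax: x_i - log(sum_j exp(x_j)).
    m = max(logits)
    lse = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - lse for x in logits]

def softmax(logits):
    # Softmax computed as exp(log softmax).
    return [math.exp(v) for v in log_softmax(logits)]

# What a model ending in a log softmax layer would output (made-up scores).
model_out = log_softmax([3.2, -0.7, 1.1, 0.0])

# Hypothetical: what I would see if the prediction step ran a softmax on top.
after_softmax = softmax(model_out)

print(model_out)       # every entry is <= 0
print(after_softmax)   # every entry is in (0, 1] and they sum to 1
```

So positive values cannot be log softmax outputs; they would be consistent with probabilities, i.e. some extra activation being applied between the model and what TTA returns.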

How can the output of LogSoftmax be positive? Has the way TTA works changed in FastAI v1? I may be asking a real noob question, but I would be thankful if someone could point me in the right direction.