I can’t find any explanation of that.

It is the percentage of the total number of epochs during which the learning rate rises in one cycle.

Sorry, I'm still confused, because I thought one cycle in the new API only runs for one epoch. How does the percentage of the total number of epochs work? Can you give an example, say for learn.fit_one_cycle(10, slice(1e-4,1e-3,1e-2), pct_start=0.05)?

OK, strictly speaking the correct answer is the percentage of iterations, so the LR can both increase and decrease within the same epoch. In your example, if you have, say, 100 iterations per epoch, then the LR will rise for half an epoch (0.05 * (10 * 100) = 50 iterations) and then slowly decrease.
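The arithmetic can be checked with a few lines of plain Python. This is only a sketch: the 100 iterations per epoch is the made-up figure from the example, and `rising_iterations` is a hypothetical helper, not a fastai function.

```python
def rising_iterations(epochs, iters_per_epoch, pct_start):
    """Iterations during which the LR rises (hypothetical helper, not fastai API)."""
    total_iters = epochs * iters_per_epoch
    # round() avoids surprises from floating-point products such as 1000 * 0.05
    return round(total_iters * pct_start)


iters_per_epoch = 100  # assumed value, taken from the example above
n_up = rising_iterations(epochs=10, iters_per_epoch=iters_per_epoch, pct_start=0.05)
print(n_up)                    # number of iterations with a rising LR
print(n_up / iters_per_epoch)  # the same span expressed in epochs
```

With these numbers the rising phase covers 50 iterations, i.e. half of the first epoch, and the remaining 950 iterations are spent decreasing.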

Thanks a lot~~~

Thanks for this explanation … so essentially, it is the percentage of overall iterations where the LR is increasing, correct?

So, given the default of 0.3, it means that your LR is going up for 30% of your iterations and then decreasing over the last 70%.

Is that a correct summary of what is happening?

Yes, I think that’s correct.

You can verify that by changing its value and checking the plot:

learn.recorder.plot_lr()

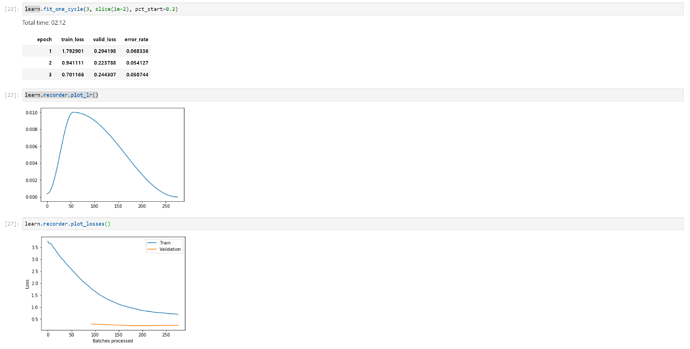

For example if pct_start = 0.2

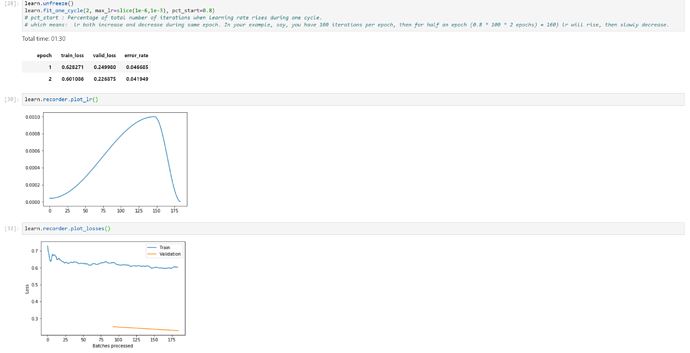

And if you change it to pct_start = 0.8

Just one question.

Suppose for some layer my initial learning rate is lr1 and after an epoch it has changed to lr2. Will the initial learning rate for the next epoch for that layer be lr1 or lr2? Will the two snippets below give the same or different results?

learn.fit_one_cycle(2, slice(lr1))

learn.fit_one_cycle(1, slice(lr1))

learn.fit_one_cycle(1, slice(lr1))

Thanks! Well explained!

In the code example it seems you are only passing lr1 (and not lr2, which was mentioned)?

But the two snippets would give different results anyway, because the first line calls fit_one_cycle once for a longer duration (one long cycle over two epochs), while the last two lines run a full, shorter cycle twice. (If you plot the learning rate you’ll see the difference.)
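To see the difference without training anything, here is a rough sketch in plain Python. It is a simplified one-cycle schedule (cosine ramp up, then cosine decay), not fastai's actual implementation; `one_cycle_lrs`, the `div` ratio, and the 100 iterations per epoch are all assumptions made for illustration.

```python
import math


def one_cycle_lrs(total_iters, lr_max, pct_start=0.3, div=25.0):
    """Simplified one-cycle schedule (illustrative only, not fastai's code)."""
    n_up = round(total_iters * pct_start)  # iterations with a rising LR
    lr_min = lr_max / div                  # assumed starting and final LR
    lrs = []
    for i in range(total_iters):
        if i < n_up:   # rising phase: lr_min -> lr_max
            pos, start, end = i / n_up, lr_min, lr_max
        else:          # falling phase: lr_max -> lr_min
            pos, start, end = (i - n_up) / (total_iters - n_up), lr_max, lr_min
        # cosine interpolation from start (pos=0) to end (pos=1)
        lrs.append(end + (start - end) / 2 * (1 + math.cos(math.pi * pos)))
    return lrs


iters_per_epoch = 100  # assumed
lr1 = 1e-3             # assumed peak learning rate

# One call over 2 epochs: a single long cycle.
single = one_cycle_lrs(2 * iters_per_epoch, lr1)
# Two calls over 1 epoch each: two short cycles back to back.
double = one_cycle_lrs(iters_per_epoch, lr1) + one_cycle_lrs(iters_per_epoch, lr1)

print(single.index(max(single)))  # where the single long cycle peaks
print(double.index(max(double)))  # where the first short cycle peaks
print(single[100], double[100])   # LR at the start of the second epoch
```

In this sketch the second short cycle starts again from the low learning rate, whereas the long cycle is still part-way through its decay at the same point, so the two schedules differ even though the total number of iterations is the same.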

Thanks. By the way, lr2 refers to the learning rate for that layer after one epoch is completed, so there is no need to pass it in the code.