I am a novice in NLP. I need to extract features from a sentence, and I have noticed there are several ways to get these features from an LSTM or RNN:

1. Take the last output of the network.

2. Take the last hidden state of the network.

3. Take the output at the last real token, before the padding starts.

Since the sentences have variable lengths, these features differ from each other. Methods 1 and 2 may have been computed over some padding input. Which one is the best way to extract a sentence’s features?

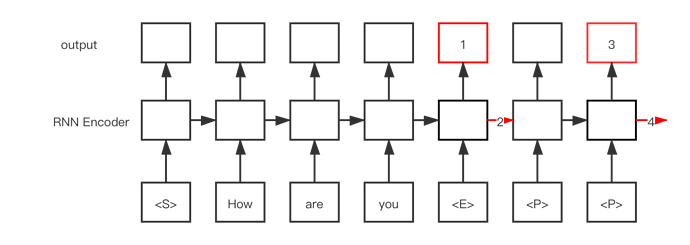
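To make the difference between the three methods concrete, here is a minimal NumPy sketch that runs a zero-padded batch of two sentences through a hand-written single-layer LSTM and extracts all three features. It is an illustration, not any library's implementation: the helper name `lstm_forward`, the random weights, the sizes, and the right-side zero-padding are all assumptions.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def lstm_forward(x, W, U, b):
    """Hypothetical single-layer LSTM over a padded batch.

    x: (batch, time, input_dim), W: (input_dim, 4*H), U: (H, 4*H), b: (4*H,)
    Returns outputs (batch, time, H) and the final hidden state (batch, H),
    both computed over EVERY time step, padding included.
    """
    batch, steps, _ = x.shape
    H = U.shape[0]
    h = np.zeros((batch, H))
    c = np.zeros((batch, H))
    outputs = np.zeros((batch, steps, H))
    for t in range(steps):
        z = x[:, t] @ W + h @ U + b
        i, f, g, o = np.split(z, 4, axis=1)  # input, forget, candidate, output gates
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h = sigmoid(o) * np.tanh(c)
        outputs[:, t] = h
    return outputs, h


rng = np.random.default_rng(0)
input_dim, H = 3, 4
W = rng.normal(size=(input_dim, 4 * H))
U = rng.normal(size=(H, 4 * H))
b = np.zeros(4 * H)

lengths = np.array([5, 2])              # true sentence lengths
x = rng.normal(size=(2, 5, input_dim))  # embedded tokens
x[1, 2:] = 0.0                          # zero-padding after the 2nd sentence ends

outputs, h_last = lstm_forward(x, W, U, b)

feat1 = outputs[:, -1]                                 # method 1: last output
feat2 = h_last                                         # method 2: last hidden state
feat3 = outputs[np.arange(len(lengths)), lengths - 1]  # method 3: output at last real token
```

For a single-layer, unidirectional LSTM the output at each step is the hidden state, so in this sketch methods 1 and 2 give identical vectors, and for the short sentence both have been fed the padding. Method 3 gathers the output at index `length - 1`, so it ignores the padding and matches method 1 only for the sentence that fills the whole batch width. In PyTorch, packing the batch with `torch.nn.utils.rnn.pack_padded_sequence` before calling `nn.LSTM` makes the returned `h_n` hold each sentence's state at its last real token, which gives the method 3 result without the manual gather.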

In a seq2seq model like AWD-LSTM, you have two parts: the encoder and the decoder.

It usually looks something like this: Encoder -> hidden state (passed to the Decoder as h0) -> Decoder

You usually want the last hidden state of the encoder, since it summarizes the sequence you fed into the model.
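The Encoder -> hidden state -> Decoder flow can be sketched in a few lines of NumPy. This is a minimal illustration under several assumptions: a plain tanh RNN stands in for the LSTM (so there is no cell state to hand over), the source sentence is unpadded, and the helper `rnn`, the weights, and all sizes are hypothetical. The encoder's last hidden state serves both as the sentence feature and as the decoder's initial state `h0`.

```python
import numpy as np


def rnn(x, W, U, h0):
    """Hypothetical plain tanh RNN (stand-in for an LSTM).

    x: (time, input_dim) for ONE unpadded sequence; h0: (H,) initial state.
    Returns all hidden states, shape (time, H).
    """
    h = h0
    states = []
    for x_t in x:
        h = np.tanh(x_t @ W + h @ U)
        states.append(h)
    return np.stack(states)


rng = np.random.default_rng(1)
input_dim, H = 3, 4
enc_W, enc_U = rng.normal(size=(input_dim, H)), rng.normal(size=(H, H))
dec_W, dec_U = rng.normal(size=(input_dim, H)), rng.normal(size=(H, H))

source = rng.normal(size=(6, input_dim))     # embedded source sentence (no padding)
target_in = rng.normal(size=(4, input_dim))  # decoder inputs, e.g. shifted target embeddings

# Encoder: start from zeros and read the whole source sentence.
enc_states = rnn(source, enc_W, enc_U, h0=np.zeros(H))
sentence_feature = enc_states[-1]  # last hidden state = summary of the sentence

# Decoder: its initial state h0 is the encoder's last hidden state.
dec_states = rnn(target_in, dec_W, dec_U, h0=sentence_feature)
```

With an LSTM, the encoder's final hidden state and cell state are typically both handed to the decoder. If the source batch is padded, take the state at each sentence's last real token rather than at the final padded step.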

Thank you! Let me check my understanding with an example. In the picture, we usually use tensor No. 4 as our encoder features. Am I right?

Yes, exactly.

It doesn’t have to be an LSTM; it could be an autoencoder or any architecture of your choice.