My parameter initialization only works if I set `requires_grad_(False)`:

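```python
import torch

# STEP 1: initialize parameters
def init_params(size, variance=1.0):
    # note: multiplying randn output by this factor scales the standard deviation, not the variance
    return (torch.randn(size) * variance).requires_grad_(False)
```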

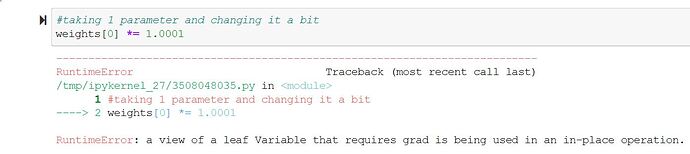

The screenshot above shows the error I get when I leave it as plain `requires_grad_()` (i.e. `requires_grad=True`).

Could anyone shed some light on this?
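The screenshot isn't reproduced as text, so what follows is only a sketch under an assumption: that the error is one of the two `RuntimeError`s PyTorch commonly raises once parameters require gradients. One comes from updating a leaf tensor in place outside `torch.no_grad()`. The other comes from calling `.numpy()` on it. Note that `requires_grad_()` with no argument is the same as `requires_grad_(True)`. The toy squared loss, the learning rate, and the helper names `try_inplace_update`, `sgd_step`, and `to_numpy` are hypothetical and used only for illustration.

```python
import torch

def init_params(size, variance=1.0):
    # requires_grad_() with no argument sets requires_grad=True
    return (torch.randn(size) * variance).requires_grad_()

def try_inplace_update(params, lr=0.1):
    """Return the error message if an in-place update outside no_grad fails (hypothetical helper)."""
    try:
        # in-place subtraction on a leaf that requires grad, with autograd recording
        params -= lr * torch.ones_like(params)
    except RuntimeError as e:
        return str(e)
    return None

def sgd_step(params, lr=0.1):
    """One gradient step on a toy loss sum(params**2) (assumed for illustration)."""
    loss = (params ** 2).sum()
    loss.backward()
    with torch.no_grad():
        # in-place updates of leaf tensors are allowed while autograd is disabled
        params -= lr * params.grad
    params.grad.zero_()  # reset gradients so they don't accumulate across steps
    return loss.item()

def to_numpy(t):
    # .numpy() raises on a tensor that requires grad; detach it first
    return t.detach().numpy()

params = init_params(3)
print(params.requires_grad)          # True
print(try_inplace_update(params))    # message from the failed in-place update
sgd_step(params)
print(to_numpy(params))
```

Setting `requires_grad_(False)` makes both errors go away only because nothing is tracked any more; `loss.backward()` would then fail instead, since no tensor in the graph requires gradients, so the `torch.no_grad()` / `.detach()` pattern is usually what you want.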