In the code of lesson7-superres-gan.ipynb, we have:

switcher = partial(AdaptiveGANSwitcher, critic_thresh=0.65)

learn = GANLearner.from_learners(learn_gen, learn_crit, weights_gen=(1.,50.), show_img=False, switcher=switcher,
                                 opt_func=partial(optim.Adam, betas=(0.,0.99)), wd=wd)

learn.callback_fns.append(partial(GANDiscriminativeLR, mult_lr=5.))

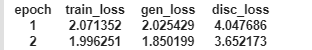

In the example notebook, the training output has the columns train_loss, gen_loss and disc_loss:

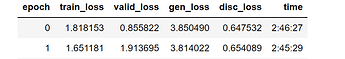

For some reason, in mine (different dataset) it also outputs valid_loss.

I’m also curious how I can control what info it outputs, but my main question is: what do training loss and validation loss mean in a GAN? As far as I understand it, gen_loss is the generator’s loss, which measures how well it’s fooling the discriminator, and disc_loss is the discriminator’s loss, which measures how well it can tell a real image from a generated one.
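To make that understanding concrete, here is a minimal sketch in plain Python of what I think the two losses measure. This is not fastai's actual code: the function names `bce`, `disc_loss` and `gen_loss` are just illustrative, and I'm assuming a simple binary cross-entropy objective where the discriminator outputs a probability that an image is real.

```python
import math

def bce(pred, target):
    """Binary cross-entropy for one probability `pred` against a 0/1 `target`."""
    eps = 1e-7
    pred = min(max(pred, eps), 1 - eps)  # clamp to avoid log(0)
    return -(target * math.log(pred) + (1 - target) * math.log(1 - pred))

def disc_loss(real_pred, fake_pred):
    """Discriminator loss (assumed form): wants real -> 1 and fake -> 0."""
    return 0.5 * (bce(real_pred, 1.0) + bce(fake_pred, 0.0))

def gen_loss(fake_pred):
    """Generator loss (assumed form): wants the discriminator to say 1 for a fake."""
    return bce(fake_pred, 1.0)

# Discriminator is confident and correct: low disc_loss, high gen_loss.
print(disc_loss(real_pred=0.9, fake_pred=0.1), gen_loss(fake_pred=0.1))
# Discriminator is fooled by the fake: gen_loss drops.
print(gen_loss(fake_pred=0.9))
```

If this picture is right, the two numbers pull against each other, so neither one alone tells me whether the generated images are getting better.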

What are train_loss and valid_loss? I know the names stand for ‘training loss’ and ‘validation loss’, but which loss function do they measure, and which part of the model is being measured?