Hi,

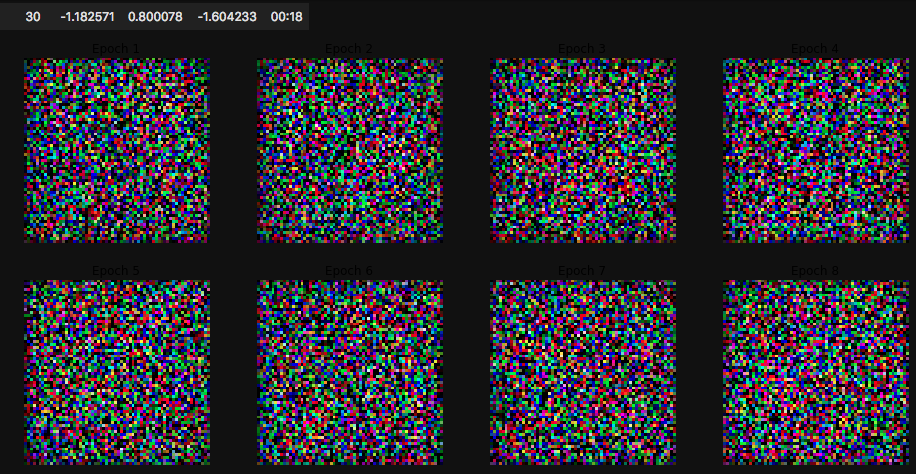

I am running a small experiment with the WGAN example from lesson 7, using a dataset I created myself. With the code snippet below, the generated outputs look the same at every epoch: just noise.

    def get_data(bs, size):
        return (GANItemList.from_folder(path, noise_sz=100)
                .no_split()
                .label_from_func(noop)
                .transform(tfms=[[crop_pad(size=size, row_pct=(0,1), col_pct=(0,1))], []], size=size, tfm_y=True)
                .databunch(bs=bs)
                .normalize(stats = [torch.tensor([0.5,0.5,0.5]), torch.tensor([0.5,0.5,0.5])], do_x=False, do_y=True))

    data = get_data(4, 64)

    generator = basic_generator(in_size=64, n_channels=3, n_extra_layers=1)
    critic = basic_critic(in_size=64, n_channels=3, n_extra_layers=1)

    learn = GANLearner.wgan(data, generator, critic, switch_eval=False, opt_func = partial(optim.Adam, betas = (0.,0.99)), wd=0.)

    learn.fit(30,2e-4)
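
For context, this is how I understand the normalization stats in the snippet above: a mean of 0.5 and a standard deviation of 0.5 per channel map target pixel values from [0, 1] to [-1, 1], which I believe matches the tanh range of the generator output. Below is a minimal plain-Python sketch of that arithmetic. The helper names `normalize` and `denormalize` are made up for illustration and are not fastai functions.

```python
def normalize(pixel, mean=0.5, std=0.5):
    """Map a pixel value in [0, 1] to the range [-1, 1]."""
    return (pixel - mean) / std


def denormalize(value, mean=0.5, std=0.5):
    """Inverse mapping: bring a value in [-1, 1] back to [0, 1]."""
    return value * std + mean


if __name__ == "__main__":
    # Round-trip a few pixel intensities through both mappings.
    for p in (0.0, 0.25, 0.5, 1.0):
        n = normalize(p)
        print(p, "->", n, "->", denormalize(n))
```

If that reading is right, generated images need the inverse mapping before they are displayed.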

Output:

|epoch|train_loss|gen_loss|disc_loss|time|
|---|---|---|---|---|
|1|-0.490096|0.378111|-0.666829|00:13|
|2|-0.835214|0.540004|-1.113979|00:15|
|3|-1.000573|0.620919|-1.334438|00:17|
|...|...|...|...|...|
|27|-1.182753|0.798620|-1.604142|00:17|
|28|-1.182750|0.799152|-1.604292|00:19|
|29|-1.182985|0.799610|-1.604540|00:18|
|30|-1.182571|0.800078|-1.604233|00:18|
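
To make the columns easier to read, here is my understanding of what the two losses measure in this WGAN setup. As far as I can tell, the critic loss is the mean critic score on real images minus the mean score on generated images, and the generator loss is the mean critic score on generated images. The sketch below uses plain Python; the function names and the example scores are hypothetical and only illustrate the arithmetic.

```python
def mean(values):
    """Arithmetic mean of a non-empty list of floats."""
    return sum(values) / len(values)


def critic_loss(real_scores, fake_scores):
    """Assumed WGAN critic loss: mean score on reals minus mean score on fakes."""
    return mean(real_scores) - mean(fake_scores)


def generator_loss(fake_scores):
    """Assumed generator loss: mean critic score on generated images."""
    return mean(fake_scores)


if __name__ == "__main__":
    # Made-up critic scores, chosen to resemble the last rows of the table.
    real_scores = [-0.9, -0.7]
    fake_scores = [0.7, 0.9]
    print("critic loss:", critic_loss(real_scores, fake_scores))
    print("generator loss:", generator_loss(fake_scores))
```

Under that reading, a gen_loss near 0.80 together with a disc_loss near -1.60 would correspond to critic scores of roughly 0.8 on generated images and -0.8 on real ones, keeping in mind that the reported values are averaged over the epoch.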

What could be causing this noise? Do I need to tweak some parameters, or create a bigger dataset?

Any help would be greatly appreciated.