Hi all,

I’m experimenting with fine-tuning language models on a specific domain with further text classifications task, specifically with the Transformer architecture.

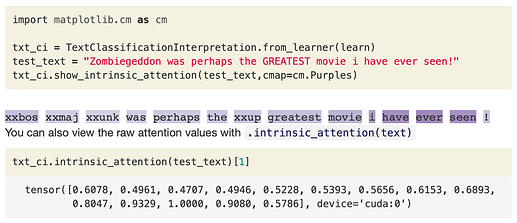

How to output a nice visualisation of the attention with Transformer, similar to:

In docs it said: Provides an interpretation of classification based on input sensitivity. This was designed for AWD-LSTM only for the moment, because Transformer already has its own attentional model.

Thanks!