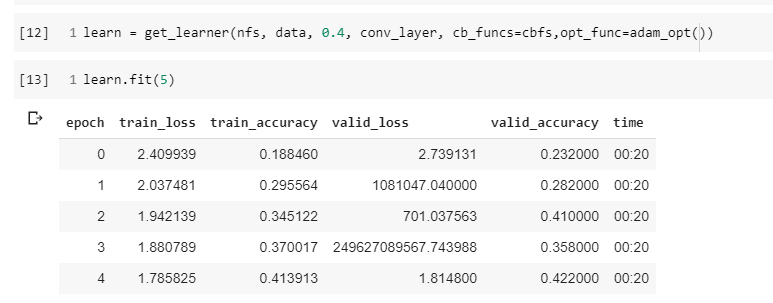

Hi! While playing around with the lesson notebooks, I noticed that using Adam leads to very unstable training and high losses in notebook 09c; see e.g. the image above.

As far as I can tell, things work fine in notebook 09 (where Adam is first introduced) and also in 09b.

The only change I made in 09c before running the notebook was to switch the optimizer to Adam in the last few lines, i.e. to change this:

```python
learn = get_learner(nfs, data, 0.4, conv_layer, cb_funcs=cbfs)
```

into this:

```python
learn = get_learner(nfs, data, 0.4, conv_layer, cb_funcs=cbfs, opt_func=adam_opt())
```
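One possible lead, which I haven't verified against the notebook: the learning rate passed to `get_learner` here is still 0.4, the value used with plain SGD. With bias correction, Adam's very first update moves each parameter by roughly `lr` in the direction of the gradient's sign, whatever the gradient's magnitude. The minimal sketch below uses plain Python to compare a single SGD step with a first Adam step. The helper names and the beta/eps defaults are my own assumptions, not the notebook's code.

```python
import math

def adam_first_step(grad, lr, beta1=0.9, beta2=0.99, eps=1e-5):
    """Size of the very first Adam update for one scalar parameter.

    Standard Adam rule with bias correction; the beta/eps defaults are
    assumptions and may differ from the values used in the notebooks.
    """
    m = (1 - beta1) * grad        # first-moment (average gradient) after one step
    v = (1 - beta2) * grad ** 2   # second-moment (average squared gradient) after one step
    m_hat = m / (1 - beta1)       # bias correction at step count 1
    v_hat = v / (1 - beta2)
    return lr * m_hat / (math.sqrt(v_hat) + eps)

def sgd_step(grad, lr):
    """Size of a plain SGD update for the same parameter."""
    return lr * grad

# Compare update sizes at lr=0.4 across very different gradient scales
for g in (1e-3, 1e-1, 10.0):
    print(f"grad={g:g}  sgd={sgd_step(g, 0.4):.4g}  adam={adam_first_step(g, 0.4):.4g}")
```

The SGD step scales with the gradient, but the Adam step stays close to 0.4 in every case. A value of 0.4 is therefore a very large per-parameter move for Adam, even if it is reasonable for SGD.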

Has anyone experienced the same issue?