Hi all,

I’ve added a tutorial showing how to use a transformers pretrained model in fastai, using the mid-level API. Hope you find it useful!

This is really cool! I was actually using their library for NLP and am happy to know I can now benefit from fastai functions.

By the way, they have the AutoModels functions which I believe are used to call the right class based on model name (gpt2, bert, distilbert…).

Would it make sense to have a transformer_learner and special datablock to accomplish all you did in the tutorial (call right transforms, add the required callback…)?

Yes, ultimately, probably in a fastai extension since it would require a new dependency. I haven’t played around with the transformers library enough to be sure this approach will work for every tuple model / problem type however.

Based on your tutorial, I am trying to use it to create a DataLoader for MaskRCNN:

    class MaskRCNN(dict):
        @classmethod
        def create(cls, dictionary):
            return cls(dict({x:dictionary[x] for x in dictionary.keys()}))

        def show(self, ctx=None, **kwargs):
            dictionary = self
            boxes = dictionary["boxes"]
            labels = dictionary["labels"]
            masks = dictionary["masks"]
            result = masks
            return show_image(result, ctx=ctx, **kwargs)

    def MaskRCNNBlock():
        return TransformBlock(type_tfms=MaskRCNN.create, batch_tfms=IntToFloatTensor)

    def get_bbox(o):
        label_path = get_y_fn(o)
        mask=PILMask.create(label_path)
        pos = np.where(mask)
        xmin = np.min(pos[1])
        xmax = np.max(pos[1])
        ymin = np.min(pos[0])
        ymax = np.max(pos[0])
        return TensorBBox.create([xmin, ymin, xmax, ymax])

    def get_bbox_label(o):
        return TensorCategory([1])

    def get_mask(o):
        label_path = get_y_fn(o)
        mask=PILMask.create(label_path)
        mask=image2tensor(mask)
        return TensorMask(mask)

    def get_dict(o):
        return {"boxes": get_bbox(o), "labels": get_bbox_label(o),"masks": get_mask(o)}

    getters = [lambda o: o, get_dict]

    maskrccnnDataBlock = DataBlock(
        blocks=(ImageBlock, MaskRCNNBlock),
        get_items=partial(get_image_files,folders=[manual_name]),
        getters=getters,
        splitter=RandomSplitter(valid_pct=0.1,seed=2020),
        item_tfms=Resize((size,size)),
        batch_tfms=Normalize.from_stats(*imagenet_stats)
    )

    maskrccnnDataBlock.summary(path_images)

    dls = maskrccnnDataBlock.dataloaders(path_images,bs=bs)

    dls.show_batch()

However, show_batch is not working because the mask is not resized to the same size as the image.

You need to implement transforms for your MaskRCNN type, since it is not a fastai type and the built-in transforms do not know how to handle it.
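To illustrate what such a transform has to do, here is a minimal sketch in plain Python, not fastai code. It assumes a target dict with a single box in `[xmin, ymin, xmax, ymax]` pixel coordinates and a mask stored as a list of rows; the helper names `resize_mask_nearest` and `resize_target` are hypothetical. The point is only that a resize must update the mask and the box together, so that they stay aligned with the resized image.

```python
def resize_mask_nearest(mask, new_h, new_w):
    """Nearest-neighbour resize of a 2D mask given as a list of rows."""
    old_h, old_w = len(mask), len(mask[0])
    return [
        [mask[r * old_h // new_h][c * old_w // new_w] for c in range(new_w)]
        for r in range(new_h)
    ]


def resize_target(target, new_h, new_w):
    """Resize the mask and scale the box by the same factors (hypothetical helper)."""
    mask = target["masks"]
    old_h, old_w = len(mask), len(mask[0])
    sx, sy = new_w / old_w, new_h / old_h
    xmin, ymin, xmax, ymax = target["boxes"]
    return {
        "boxes": [xmin * sx, ymin * sy, xmax * sx, ymax * sy],
        "labels": target["labels"],  # labels do not depend on image size
        "masks": resize_mask_nearest(mask, new_h, new_w),
    }


# Toy 2x2 target resized to 4x4.
target = {"boxes": [1, 0, 1, 1], "labels": [1], "masks": [[0, 1], [0, 1]]}
resized = resize_target(target, 4, 4)
print(resized["boxes"])
print(resized["masks"])
```

In a real pipeline the same logic would live inside a transform that dispatches on the custom type, using tensor operations instead of nested lists.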

Is it possible to make the mask behave like a MaskBlock? In that case, the transforms would be applied, right?

Sorry, I'm still confused about how this forum works. Where can I find the tutorial?

Here you go!:

http://dev.fast.ai/tutorial.transformers

I decided to go without them.

I tried to use this Dataloader with a Learner. I am getting a very strange error:

    Traceback (most recent call last):
      File "/home/david/anaconda3/envs/seg/lib/python3.7/multiprocessing/queues.py", line 236, in _feed
        obj = _ForkingPickler.dumps(obj)
      File "/home/david/anaconda3/envs/seg/lib/python3.7/multiprocessing/reduction.py", line 51, in dumps
        cls(buf, protocol).dump(obj)
    _pickle.PicklingError: Can't pickle <class '__main__.MaskRCNN'>: it's not the same object as __main__.MaskRCNN

You need to restart your notebook. This error comes from multiprocessing, so setting num_workers=0 when prototyping will get rid of it.

If restarting is not enough, you need to put MaskRCNN in a separate module that you import.
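For anyone wondering where the "not the same object" message comes from, here is a small sketch that reproduces it with the standard library only. It assumes the cause is a class definition being re-executed while objects of the old class are still around, which is what typically happens when a notebook cell is re-run. The module name `fake_notebook` is a hypothetical stand-in for the notebook's `__main__` namespace.

```python
import pickle
import sys
import types

# Hypothetical stand-in for a notebook namespace (normally __main__).
notebook = types.ModuleType("fake_notebook")
sys.modules["fake_notebook"] = notebook

CELL = "class MaskRCNN(dict):\n    pass\n"

exec(CELL, notebook.__dict__)  # first run of the cell
stale_obj = notebook.MaskRCNN(boxes=[0, 0, 4, 4])

exec(CELL, notebook.__dict__)  # re-running the cell rebinds the name to a new class
fresh_obj = notebook.MaskRCNN(boxes=[0, 0, 4, 4])


def try_pickle(obj):
    """Return 'ok' if obj can be pickled, otherwise the PicklingError text."""
    try:
        pickle.dumps(obj)
        return "ok"
    except pickle.PicklingError as err:
        return f"PicklingError: {err}"


stale_result = try_pickle(stale_obj)  # instance of the old class object
fresh_result = try_pickle(fresh_obj)  # instance of the current class object
print(stale_result)
print(fresh_result)
```

Pickle stores classes by module and name, so an object whose class no longer matches the class currently bound to that name cannot be serialized. Worker processes receive their data through pickle, which is why the error only shows up with num_workers greater than 0, and why a class imported from a separate module (defined once) avoids it.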

Setting num_workers=0 solved it. Thank you.

Is putting the class in a separate module meant to solve the multiprocessing problem?

In addition, I don’t know how to override the code where the input to the metrics is set. I would like to use only the mask returned by the MaskRCNN model for computing the metrics.

Yes indeed.

For the metrics, you need to write your own functions that delegate the mask part to the metric function you want.
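As a rough sketch of what "delegating" can look like: a wrapper takes an ordinary metric and returns a new function that pulls the masks out of the predictions and targets before calling it. This assumes that predictions and targets are both lists of dicts with a `"masks"` key (modelled on the dicts used in this thread) and that the metric is called as `metric(preds, targets)`. The names `dice` and `on_masks` are hypothetical, and the toy Dice score works on flat lists rather than tensors.

```python
def dice(pred_mask, true_mask):
    """Toy Dice score on flat lists of 0/1 values."""
    inter = sum(p * t for p, t in zip(pred_mask, true_mask))
    total = sum(pred_mask) + sum(true_mask)
    return 2 * inter / total if total else 1.0


def on_masks(metric, key="masks"):
    """Wrap `metric` so it only sees the mask entry of each prediction/target dict."""
    def wrapped(preds, targets):
        scores = [metric(p[key], t[key]) for p, t in zip(preds, targets)]
        return sum(scores) / len(scores)  # average over the batch
    wrapped.__name__ = f"{metric.__name__}_on_masks"
    return wrapped


mask_dice = on_masks(dice)

preds = [{"boxes": [0, 0, 1, 1], "labels": [1], "masks": [1, 1, 0, 0]}]
targets = [{"boxes": [0, 0, 1, 1], "labels": [1], "masks": [1, 0, 0, 0]}]
score = mask_dice(preds, targets)
print(mask_dice.__name__, score)
```

The wrapped function could then be passed in the metrics list instead of the original metric, so nothing inside the Learner needs to be overridden.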

My English is not very good; I don’t understand what you mean by writing functions that delegate.

Is the list of metrics that you pass to the Learner executed in a callback?

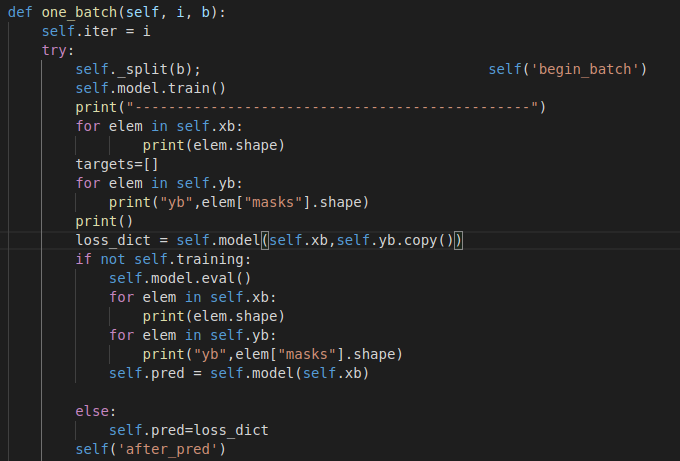

It’s difficult for me to find the right place to make those changes. Editing one_batch with a Learner subclass was pretty easy!

The Learner was working with that DataLoader.

learn.lr_find() got up to 10% and then threw a new error related to the DataLoader. It looks like it is trying to collate the images into a single batch tensor. However, I don’t want it to do that, since this is already done in the first layer of the model.

This is the error:

    ---------------------------------------------------------------------------
    RuntimeError                              Traceback (most recent call last)
    <ipython-input-28-d81c6bd29d71> in <module>
    ----> 1 learn.lr_find()

    ~/anaconda3/envs/seg/lib/python3.7/site-packages/fastai2/callback/schedule.py in lr_find(self, start_lr, end_lr, num_it, stop_div, show_plot, suggestions)
        226 n_epoch = num_it//len(self.dls.train) + 1
        227 cb=LRFinder(start_lr=start_lr, end_lr=end_lr, num_it=num_it, stop_div=stop_div)
    --> 228 with self.no_logging(): self.fit(n_epoch, cbs=cb)
        229 if show_plot: self.recorder.plot_lr_find()
        230 if suggestions:

    ~/anaconda3/envs/seg/lib/python3.7/site-packages/fastcore/utils.py in _f(*args, **kwargs)
        428 init_args.update(log)
        429 setattr(inst, 'init_args', init_args)
    --> 430 return inst if to_return else f(*args, **kwargs)
        431 return _f
        432

    ~/Documents/seg/seg/models/archs/mask_rcnn.py in fit(self, n_epoch, lr, wd, cbs, reset_opt)
        114 try:
        115 self.epoch=epoch; self('begin_epoch')
    --> 116 self._do_epoch_train()
        117 self._do_epoch_validate()
        118 except CancelEpochException: self('after_cancel_epoch')

    ~/Documents/seg/seg/models/archs/mask_rcnn.py in _do_epoch_train(self)
         89 try:
         90 self.dl = self.dls.train; self('begin_train')
    ---> 91 self.all_batches()
         92 except CancelTrainException: self('after_cancel_train')
         93 finally: self('after_train')

    ~/Documents/seg/seg/models/archs/mask_rcnn.py in all_batches(self)
         60 def all_batches(self):
         61 self.n_iter = len(self.dl)
    ---> 62 for o in enumerate(self.dl): self.one_batch(*o)
         63
         64 def one_batch(self, i, b):

    ~/anaconda3/envs/seg/lib/python3.7/site-packages/fastai2/data/load.py in __iter__(self)
         96 self.randomize()
         97 self.before_iter()
    ---> 98 for b in _loaders[self.fake_l.num_workers==0](self.fake_l):
         99 if self.device is not None: b = to_device(b, self.device)
        100 yield self.after_batch(b)

    ~/anaconda3/envs/seg/lib/python3.7/site-packages/torch/utils/data/dataloader.py in __next__(self)
        343
        344 def __next__(self):
    --> 345 data = self._next_data()
        346 self._num_yielded += 1
        347 if self._dataset_kind == _DatasetKind.Iterable and \

    ~/anaconda3/envs/seg/lib/python3.7/site-packages/torch/utils/data/dataloader.py in _next_data(self)
        951 if len(self._task_info[self._rcvd_idx]) == 2:
        952 data = self._task_info.pop(self._rcvd_idx)[1]
    --> 953 return self._process_data(data)
        954
        955 assert not self._shutdown and self._tasks_outstanding > 0

    ~/anaconda3/envs/seg/lib/python3.7/site-packages/torch/utils/data/dataloader.py in _process_data(self, data)
        994 self._try_put_index()
        995 if isinstance(data, ExceptionWrapper):
    --> 996 data.reraise()
        997 return data
        998

    ~/anaconda3/envs/seg/lib/python3.7/site-packages/torch/_utils.py in reraise(self)
        393 # (https://bugs.python.org/issue2651), so we work around it.
        394 msg = KeyErrorMessage(msg)
    --> 395 raise self.exc_type(msg)

    RuntimeError: Caught RuntimeError in DataLoader worker process 10.
    Original Traceback (most recent call last):
      File "/home/david/anaconda3/envs/seg/lib/python3.7/site-packages/torch/utils/data/_utils/worker.py", line 178, in _worker_loop
        data = fetcher.fetch(index)
      File "/home/david/anaconda3/envs/seg/lib/python3.7/site-packages/torch/utils/data/_utils/fetch.py", line 34, in fetch
        data = next(self.dataset_iter)
      File "/home/david/anaconda3/envs/seg/lib/python3.7/site-packages/fastai2/data/load.py", line 107, in create_batches
        yield from map(self.do_batch, self.chunkify(res))
      File "/home/david/anaconda3/envs/seg/lib/python3.7/site-packages/fastai2/data/load.py", line 128, in do_batch
        def do_batch(self, b): return self.retain(self.create_batch(self.before_batch(b)), b)
      File "/home/david/anaconda3/envs/seg/lib/python3.7/site-packages/fastai2/data/load.py", line 127, in create_batch
        def create_batch(self, b): return (fa_collate,fa_convert)[self.prebatched](b)
      File "/home/david/anaconda3/envs/seg/lib/python3.7/site-packages/fastai2/data/load.py", line 46, in fa_collate
        else type(t[0])([fa_collate(s) for s in zip(*t)]) if isinstance(b, Sequence)
      File "/home/david/anaconda3/envs/seg/lib/python3.7/site-packages/fastai2/data/load.py", line 46, in <listcomp>
        else type(t[0])([fa_collate(s) for s in zip(*t)]) if isinstance(b, Sequence)
      File "/home/david/anaconda3/envs/seg/lib/python3.7/site-packages/fastai2/data/load.py", line 45, in fa_collate
        return (default_collate(t) if isinstance(b, _collate_types)
      File "/home/david/anaconda3/envs/seg/lib/python3.7/site-packages/torch/utils/data/_utils/collate.py", line 55, in default_collate
        return torch.stack(batch, 0, out=out)
    RuntimeError: stack expects each tensor to be equal size, but got [3, 966, 1296] at entry 0 and [3, 1004, 1002] at entry 1
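The last line of the traceback shows the default collate function trying to stack images of different sizes into one tensor. One common way around this for detection-style models that accept a list of images is a collate function that only regroups the samples and never stacks them. The sketch below uses plain Python placeholders instead of tensors; `collate_as_lists` is a hypothetical name, and how it gets wired into a fastai DataLoader is left open here.

```python
def collate_as_lists(samples):
    """Regroup [(image, target), ...] into (images, targets) without stacking."""
    images, targets = zip(*samples)
    return list(images), list(targets)


# Placeholders standing in for two image tensors of different sizes.
samples = [
    ("image with shape [3, 966, 1296]", {"labels": [1]}),
    ("image with shape [3, 1004, 1002]", {"labels": [1]}),
]
images, targets = collate_as_lists(samples)
print(len(images), len(targets))
```

With a plain PyTorch `DataLoader`, a function like this can be passed as the `collate_fn` argument. The model then has to take care of resizing and batching internally.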

Very cool. Where can I find the tutorial, please?

Thank you, David.

I created a subclass of TfmdDL where create_batch just returns b.

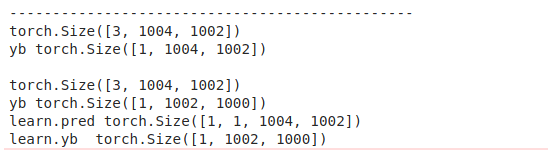

However, when validation runs during training, I found something very strange:

It looks like yb holds different values after it is passed to the model. As a result, my metric functions fail because the predicted masks do not have matching shapes.

[ EDIT - 07/01/2020 ] I deleted the content of this post as it was redundant with this one :

fastai v2 and Transformers | Problems not solved with DDP

Hi,

Is it possible to replace the LSTM layer with the transformer model for video action recognition? I am currently working on medical image (3d as a video) binary classification.

Currently, I found Thomas’ implementations nice (ConvLSTM - CNN + LSTM; his transformer model is not that good as he admits), but I wanted to explore whether someone has an alternative, since my test error is still too large!

Thanks.

Sam