I am having issues while loading the learner for my new data loader. So here’s the code story:

I trained my RetinaNet model on the MIDOG dataset (here), an image dataset with 3 class labels: 0 - background, 1 - hard negative, 2 - hard positive. That training is done, and now I want to continue training the same model on my own dataset, which has images and annotations in the same format (the image format changed, but that's not an issue as openslide loads it perfectly). The difference is that my own dataset has only 2 classes: 0 - background and 1 - hard positive, so when I try to load the model from its state_dict it throws an error.

I saved this model using torch.save as follows:

torch.save(learn.model.state_dict(),PATH)

When I try to load this model for new data it shows an error:

learn.model.load_state_dict(torch.load(PATH))

Error:

/usr/local/lib/python3.7/dist-packages/torch/nn/modules/module.py in load_state_dict(self, state_dict, strict)

1496 if len(error_msgs) > 0:

1497 raise RuntimeError('Error(s) in loading state_dict for {}:\n\t{}'.format(

-> 1498 self.__class__.__name__, "\n\t".join(error_msgs)))

1499 return _incompatibleKeys(missing_keys, unexpected_keys)

1500

RuntimeError: Error(s) in loading state_dict for RetinaNet:

size mismatch for classifier.3.weight: copying a param with shape torch.Size([3, 128, 3, 3]) from checkpoint, the shape in current model is torch.Size([2, 128, 3, 3]).

size mismatch for classifier.3.bias: copying a param with shape torch.Size([3]) from chec
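One common way around a size mismatch like this is to copy only the tensors whose names and shapes agree with the new model, and let the mismatched head (here `classifier.3`) keep its fresh initialization. Below is a minimal sketch of that idea on a toy module rather than the real RetinaNet. `TinyDetector` and `load_matching_weights` are hypothetical names made up for illustration; they are not part of fastai or object_detection_fastai.

```python
import torch
import torch.nn as nn


class TinyDetector(nn.Module):
    """Toy stand-in for RetinaNet: a shared body plus a per-class classifier head."""

    def __init__(self, n_classes: int):
        super().__init__()
        self.body = nn.Conv2d(3, 8, kernel_size=3, padding=1)
        self.classifier = nn.Conv2d(8, n_classes, kernel_size=3, padding=1)

    def forward(self, x):
        return self.classifier(torch.relu(self.body(x)))


def load_matching_weights(model: nn.Module, checkpoint: dict) -> list:
    """Load only the checkpoint tensors whose name and shape match `model`.

    Returns the names of the checkpoint entries that were skipped.
    """
    current = model.state_dict()
    compatible = {k: v for k, v in checkpoint.items()
                  if k in current and v.shape == current[k].shape}
    skipped = [k for k in checkpoint if k not in compatible]
    # strict=False tolerates the keys that were deliberately left out.
    model.load_state_dict(compatible, strict=False)
    return skipped


old_model = TinyDetector(n_classes=3)  # stands in for the 3-output checkpoint
new_model = TinyDetector(n_classes=2)  # stands in for the 2-output model
skipped = load_matching_weights(new_model, old_model.state_dict())
print(skipped)  # names of the head tensors that could not be copied
```

With a fastai learner, the same helper would presumably be called on `learn.model` with `torch.load(PATH)` as the checkpoint, in place of the plain `load_state_dict` call. The skipped head starts from random weights and has to be trained on the new data.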

I also tried a different approach: saving the whole model with torch.save(learner.model,PATH) and then loading it as follows:

learn.model=torch.load('PATH') #map_location=torch.device('cpu') without GPU

Now it loads without error, but when I then try to run learn.fit it throws an IndexError:

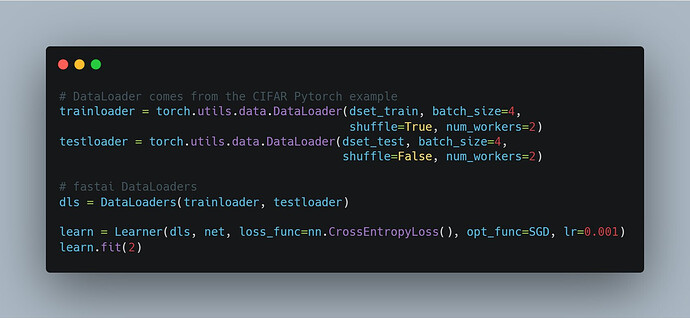

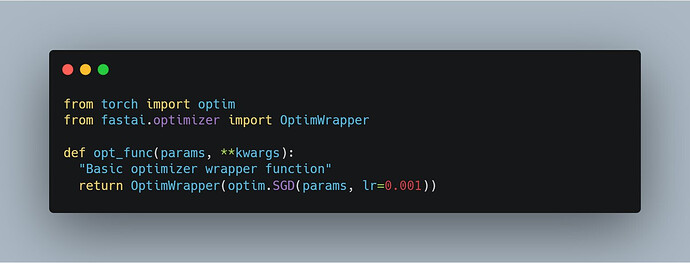

THE CODE

max_learning_rate = 1e-3

cyc_len = 50

batch_size=16

learn.fit_one_cycle(cyc_len, max_learning_rate,callbacks=[SaveModelCallback(learn, monitor='train_loss',name='best_train')])

ERROR:

epoch train_loss valid_loss pascal_voc_metric BBloss focal_loss AP-Mitosis time

0.00% [0/3 00:00<?]

---------------------------------------------------------------------------

IndexError Traceback (most recent call last)

<ipython-input-29-eb47d817d7bd> in <module>

4 print("\n Starting Training with n=",cyc_len,"epochs with batch_size=",batch_size,"\n")

5 learn.fit_one_cycle(cyc_len, max_learning_rate,callbacks=[SaveModelCallback(learn, monitor='train_loss',

----> 6 name='best_train_loss_bs64_GC_1500')])

7 frames

/usr/local/lib/python3.7/dist-packages/fastai/train.py in fit_one_cycle(learn, cyc_len, max_lr, moms, div_factor, pct_start, final_div, wd, callbacks, tot_epochs, start_epoch)

21 callbacks.append(OneCycleScheduler(learn, max_lr, moms=moms, div_factor=div_factor, pct_start=pct_start,

22 final_div=final_div, tot_epochs=tot_epochs, start_epoch=start_epoch))

---> 23 learn.fit(cyc_len, max_lr, wd=wd, callbacks=callbacks)

24

25 def fit_fc(learn:Learner, tot_epochs:int=1, lr:float=defaults.lr, moms:Tuple[float,float]=(0.95,0.85), start_pct:float=0.72,

/usr/local/lib/python3.7/dist-packages/fastai/basic_train.py in fit(self, epochs, lr, wd, callbacks)

198 else: self.opt.lr,self.opt.wd = lr,wd

199 callbacks = [cb(self) for cb in self.callback_fns + listify(defaults.extra_callback_fns)] + listify(callbacks)

--> 200 fit(epochs, self, metrics=self.metrics, callbacks=self.callbacks+callbacks)

201

202 def create_opt(self, lr:Floats, wd:Floats=0.)->None:

/usr/local/lib/python3.7/dist-packages/fastai/basic_train.py in fit(epochs, learn, callbacks, metrics)

104 if not cb_handler.skip_validate and not learn.data.empty_val:

105 val_loss = validate(learn.model, learn.data.valid_dl, loss_func=learn.loss_func,

--> 106 cb_handler=cb_handler, pbar=pbar)

107 else: val_loss=None

108 if cb_handler.on_epoch_end(val_loss): break

/usr/local/lib/python3.7/dist-packages/fastai/basic_train.py in validate(model, dl, loss_func, cb_handler, pbar, average, n_batch)

61 if not is_listy(yb): yb = [yb]

62 nums.append(first_el(yb).shape[0])

---> 63 if cb_handler and cb_handler.on_batch_end(val_losses[-1]): break

64 if n_batch and (len(nums)>=n_batch): break

65 nums = np.array(nums, dtype=np.float32)

/usr/local/lib/python3.7/dist-packages/fastai/callback.py in on_batch_end(self, loss)

306 "Handle end of processing one batch with `loss`."

307 self.state_dict['last_loss'] = loss

--> 308 self('batch_end', call_mets = not self.state_dict['train'])

309 if self.state_dict['train']:

310 self.state_dict['iteration'] += 1

/usr/local/lib/python3.7/dist-packages/fastai/callback.py in __call__(self, cb_name, call_mets, **kwargs)

248 "Call through to all of the `CallbakHandler` functions."

249 if call_mets:

--> 250 for met in self.metrics: self._call_and_update(met, cb_name, **kwargs)

251 for cb in self.callbacks: self._call_and_update(cb, cb_name, **kwargs)

252

/usr/local/lib/python3.7/dist-packages/fastai/callback.py in _call_and_update(self, cb, cb_name, **kwargs)

239 def _call_and_update(self, cb, cb_name, **kwargs)->None:

240 "Call `cb_name` on `cb` and update the inner state."

--> 241 new = ifnone(getattr(cb, f'on_{cb_name}')(**self.state_dict, **kwargs), dict())

242 for k,v in new.items():

243 if k not in self.state_dict:

/usr/local/lib/python3.7/dist-packages/object_detection_fastai/callbacks/callbacks.py in on_batch_end(self, last_output, last_target, **kwargs)

153 num_boxes = len(bbox_gt) * 3

154 for box, cla, scor in list(zip(bbox_pred, preds, scores))[:num_boxes]:

--> 155 temp = BoundingBox(imageName=str(self.imageCounter), classid=self.metric_names_original[cla], x=box[0], y=box[1],

156 w=box[2], h=box[3], typeCoordinates=CoordinatesType.Absolute, classConfidence=scor,

157 bbType=BBType.Detected, format=BBFormat.XYWH, imgSize=(self.size, self.size))

IndexError: list index out of range
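The last frame indexes `self.metric_names_original` with the predicted class id. One plausible reading (an assumption on my part, not checked against the library source) is that the model loaded with torch.load still predicts class ids for the old label set, while the metric was built with the names of the new, smaller label set. The toy below only reproduces that failure mode in plain Python; the name list and class ids are made up for illustration.

```python
# Hypothetical values: the metric knows one foreground class name,
# while the old classifier head can still emit class id 1.
metric_names = ["Mitosis"]
predicted_class_ids = [0, 1]


def lookup(names, class_id):
    """Mimic the `names[class_id]` lookup, returning None for out-of-range ids."""
    try:
        return names[class_id]
    except IndexError:
        return None


results = [lookup(metric_names, c) for c in predicted_class_ids]
print(results)  # the second id has no matching name
```

If that reading is right, the head of the loaded model and the class list of the new data bunch need to agree before the validation metric can run.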

NOTE: The new dataset is much smaller than the one the model was originally trained on (about 100 images versus about 3k before), so I have also kept the batch size small (16).

Please guide me on how I can continue training my already trained model on my new dataset. Should I save the image data bunch and try reloading it in the older notebook where the MIDOG model was trained, to see if it can be loaded there? Or is there a way to load the learner so that I can start training?

INFERENCE WORKS FINE ON THE CURRENT MODEL WITH THE NEW DATASET

Any kind of resource: notebook, or code snippet, would be beneficial.

Thanks for this wonderful forum.

Harshit