Hi guys,

I’ve been trying to use mixed precision training on the planet dataset. Everything runs fine, but the loss and accuracy I’m getting are quite different from what I get without mixed precision:

    #load trained model
    #acc_02 is a new partial function that calls accuracy_thresh with the parameter thresh=0.2
    #learn = cnn_learner(data, arch, metrics=[acc_02, f_score]).to_fp16() #mixed precision
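For anyone following along, here is a minimal sketch of how a metric like `acc_02` can be built with `functools.partial`. Note that `accuracy_thresh_py` is a hypothetical pure-Python stand-in, not fastai's `accuracy_thresh`; it only assumes the metric applies a sigmoid to the raw outputs and compares them against a threshold. The logits and labels are made up for illustration.

```python
import math
from functools import partial


def accuracy_thresh_py(y_pred, y_true, thresh=0.5, sigmoid=True):
    """Hypothetical stand-in: fraction of labels predicted correctly at `thresh`."""
    correct = total = 0
    for pred_row, true_row in zip(y_pred, y_true):
        for p, t in zip(pred_row, true_row):
            if sigmoid:
                # Map the raw logit to a probability in (0, 1).
                p = 1.0 / (1.0 + math.exp(-p))
            correct += int((p > thresh) == bool(t))
            total += 1
    return correct / total


# Same metric with the threshold fixed at 0.2, as described in the comment above.
acc_02 = partial(accuracy_thresh_py, thresh=0.2)

# Made-up logits and multi-label targets (2 samples, 3 labels each).
logits = [[2.0, -1.0, -3.0], [-0.5, 0.3, -2.0]]
labels = [[1, 1, 0], [1, 1, 0]]

print("thresh=0.2:", acc_02(logits, labels))
print("thresh=0.5:", accuracy_thresh_py(logits, labels))
```

The lower threshold counts weakly positive outputs as hits, so the two calls can report different accuracies on the same predictions.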

#We use the LR Finder to pick a good learning rate

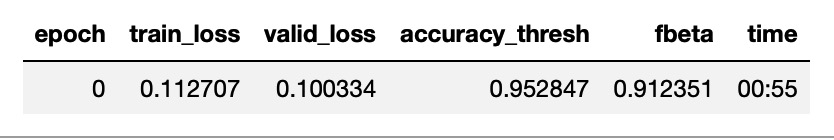

Results without mixed precision

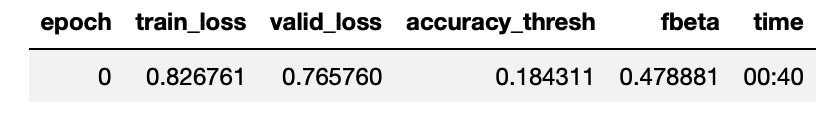

Results with mixed precision

Has anyone come across a similar issue?
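As general background (not a diagnosis of the results above), one reason fp16 and fp32 runs can diverge is that very small values underflow to zero in half precision. Mixed precision setups commonly work around this with loss scaling. The sketch below uses only the standard library's half-precision packing. The gradient value and the scale factor are illustrative assumptions, not numbers from this thread.

```python
import struct


def to_half(x):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("e", struct.pack("e", x))[0]


tiny_grad = 1e-8     # illustrative gradient magnitude (assumption)
loss_scale = 1024.0  # illustrative loss-scale factor (assumption)

# Stored directly in fp16, the value is too small and rounds to zero.
unscaled = to_half(tiny_grad)

# Scaled up before the fp16 round trip, then scaled back down in full precision.
scaled = to_half(tiny_grad * loss_scale) / loss_scale

print("without scaling:", unscaled)
print("with scaling:   ", scaled)
```

If the scaling step is missing or the scale is badly chosen, small updates can be lost, which is one of several things worth ruling out when the two modes disagree.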

ptrampert

March 26, 2019, 8:12am

Have you also tried different Notebooks?

I tested fp16 for the dog breeds and camvid and there was no real difference. Maybe you can try these as well?

Hi Patrick,

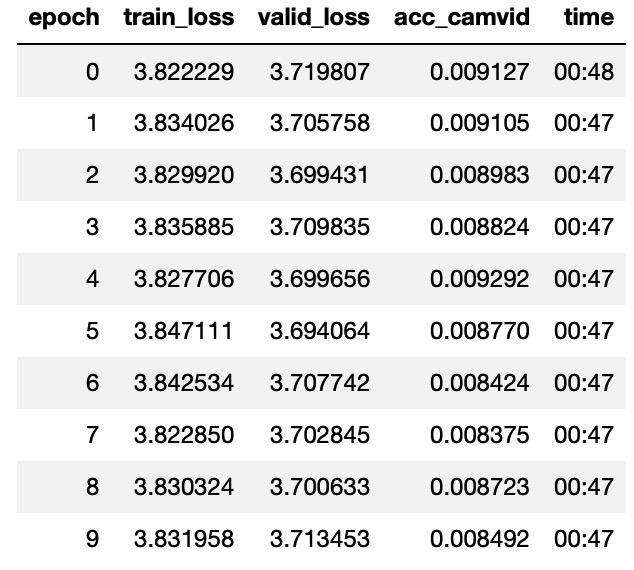

I’ve just tested fp16 on the camvid notebook and the fp16 results are totally different:

With fp16

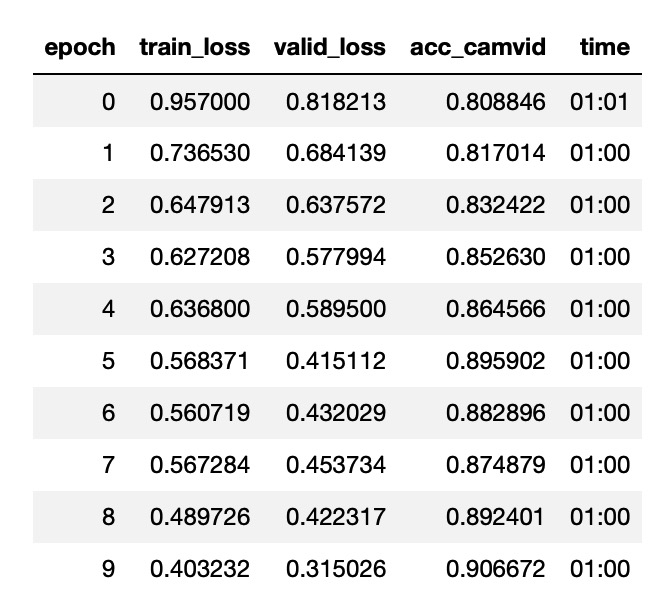

Without fp16

Here is the output of show_install, hope someone can help…

=== Software ===

python : 3.6.8

fastai : 1.0.50.post1

fastprogress : 0.1.20

torch : 1.0.1.post2

nvidia driver : 418.43

torch cuda : 10.0.130 / is available

torch cudnn : 7402 / is enabled

=== Hardware ===

nvidia gpus : 1

torch devices : 1

- gpu0 : 7949MB | GeForce RTX 2070

=== Environment ===

platform : Linux-4.18.0-16-generic-x86_64-with-debian-buster-sid

distro : Ubuntu 18.04 bionic

conda env : Unknown

python : /home/hbenitez/anaconda3/envs/fastai/bin/python

sys.path :

/home/hbenitez/anaconda3/envs/fastai/lib/python36.zip

/home/hbenitez/anaconda3/envs/fastai/lib/python3.6

/home/hbenitez/anaconda3/envs/fastai/lib/python3.6/lib-dynload

/home/hbenitez/anaconda3/envs/fastai/lib/python3.6/site-packages

/home/hbenitez/anaconda3/envs/fastai/lib/python3.6/site-packages/IPython/extensions

    Tue Mar 26 21:53:54 2019
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 418.43       Driver Version: 418.43       CUDA Version: 10.1     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |===============================+======================+======================|
    |   0  GeForce RTX 2070    Off  | 00000000:1F:00.0 Off |                  N/A |
    | 17%   36C    P8    23W / 175W |   4509MiB /  7949MiB |      0%      Default |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                       GPU Memory |
    |  GPU       PID   Type   Process name                             Usage      |
    |=============================================================================|
    |    0     18763      C   ...enitez/anaconda3/envs/fastai/bin/python  4499MiB |
    +-----------------------------------------------------------------------------+

ptrampert

March 26, 2019, 12:27pm

Here is my show_install, there are some differences for sure:

=== Software ===

=== Hardware ===

gpu0 : 32480MB | Tesla V100-PCIE-32GB

gpu1 : 32480MB | Tesla V100-PCIE-32GB

=== Environment ===

#21-Ubuntu SMP Tue Apr 24 06:16:15 UTC 2018

I also reran both options:

With to_fp16():

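| epoch | train_loss | valid_loss | acc_camvid | time |
|-------|------------|------------|------------|-------|
| 0 | 0.981239 | 1.032274 | 0.750549 | 01:48 |
| 1 | 0.901010 | 0.648565 | 0.842442 | 01:34 |
| 2 | 0.669481 | 0.477927 | 0.870625 | 01:34 |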

Without:

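| epoch | train_loss | valid_loss | acc_camvid | time |
|-------|------------|------------|------------|-------|
| 0 | 0.939555 | 0.714685 | 0.828325 | 02:25 |
| 1 | 0.811918 | 0.644321 | 0.841052 | 02:13 |
| 2 | 0.656373 | 0.475579 | 0.883946 | 02:14 |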
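To put numbers on the two camvid runs above, here is a small sketch that compares them. The per-epoch values are copied from this post; the helper `to_seconds` and the variable names are my own and only serve the illustration.

```python
def to_seconds(mmss):
    """Convert an 'MM:SS' string into a number of seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)


# (train_loss, valid_loss, acc_camvid, time) per epoch, copied from the runs above.
fp16_run = [
    (0.981239, 1.032274, 0.750549, "01:48"),
    (0.901010, 0.648565, 0.842442, "01:34"),
    (0.669481, 0.477927, 0.870625, "01:34"),
]
fp32_run = [
    (0.939555, 0.714685, 0.828325, "02:25"),
    (0.811918, 0.644321, 0.841052, "02:13"),
    (0.656373, 0.475579, 0.883946, "02:14"),
]

# Total wall-clock time for each run and the resulting speedup.
fp16_total = sum(to_seconds(row[3]) for row in fp16_run)
fp32_total = sum(to_seconds(row[3]) for row in fp32_run)
speedup = fp32_total / fp16_total

# Gaps after the last epoch (fp16 minus fp32).
final_valid_loss_gap = fp16_run[-1][1] - fp32_run[-1][1]
final_acc_gap = fp16_run[-1][2] - fp32_run[-1][2]

print(f"total time: fp16 {fp16_total}s, fp32 {fp32_total}s, speedup {speedup:.2f}x")
print(f"final valid_loss gap: {final_valid_loss_gap:+.6f}")
print(f"final acc_camvid gap: {final_acc_gap:+.6f}")
```

The first epoch differs noticeably between the two runs, but by the last epoch the gaps are small, and a single run per setting does not separate precision effects from ordinary run-to-run variation.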