Hi @tcapelle,

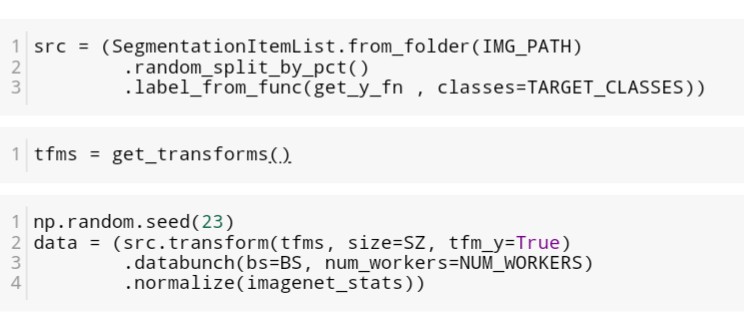

This is what I did:

My classes are `['background', 'person']`.
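To show how a class list like this maps onto mask pixel values, here is a minimal sketch. It is not necessarily the exact code from the image above. It assumes the per-instance masks come from COCO annotations (e.g. via pycocotools' `COCO.annToMask`) and that COCO's "person" category id is 1. The helper `build_label_mask` is hypothetical. Pixel value 0 means `'background'` and 1 means `'person'`, i.e. each pixel stores an index into the class list.

```python
import numpy as np

# Class list from the post: index 0 = background, index 1 = person.
codes = ['background', 'person']

# Assumption: in COCO annotations the "person" category has id 1.
COCO_PERSON_ID = 1

def build_label_mask(instance_masks, category_ids, shape):
    """Hypothetical helper: merge per-instance binary masks into one label mask.

    Pixels covered by any person instance get the index of 'person' in
    `codes`; every other pixel stays 0 ('background').
    """
    label = np.zeros(shape, dtype=np.uint8)
    person_idx = codes.index('person')
    for mask, cat_id in zip(instance_masks, category_ids):
        if cat_id == COCO_PERSON_ID:
            label[mask.astype(bool)] = person_idx
    return label

# Usage: one person instance and one non-person instance on a 4x4 image.
m1 = np.zeros((4, 4), dtype=bool)
m1[0:2, 0:2] = True          # person covering the top-left 2x2 block
m2 = np.zeros((4, 4), dtype=bool)
m2[3, 3] = True              # some other category (id 3), ignored
label = build_label_mask([m1, m2], [1, 3], (4, 4))
```

Any instance that is not a person is simply left as background, which is what a two-class `['background', 'person']` setup implies.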

If you like, you can join the thread I opened on image segmentation: Image Segmentation on COCO dataset - summary, questions and suggestions.

Maybe it can help both of us, and other people too.