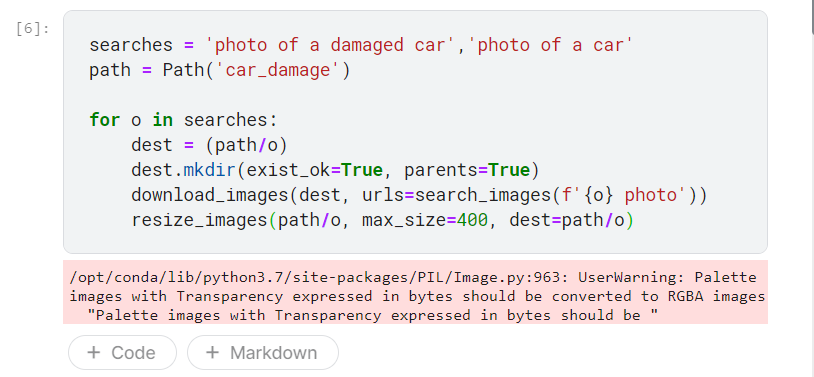

- I understood from your first response that I have to convert the images to RGBA, but I’m not sure how to update my for loop.

From what I understand, the for loop iterates over the search variable and downloads 200 examples of each. Do I change the image to RGBA before the resize part? I dunno…
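For reference, here is a minimal sketch of what "convert to RGBA before the resize" could look like. It assumes the loop downloads into one folder per search term and then resizes that folder. `convert_palette_images` is a hypothetical helper written with plain Pillow; it is not part of fastai, and the exact loop from the screenshot is not reproduced here.

```python
from pathlib import Path
from PIL import Image

def convert_palette_images(folder):
    """Rewrite palette ('P') images that carry transparency as RGBA PNGs, in place.

    Hypothetical helper, not a fastai function. Returns the converted paths.
    """
    converted = []
    for p in sorted(Path(folder).iterdir()):
        if not p.is_file():
            continue
        try:
            with Image.open(p) as im:
                # Only palette images with a transparency entry need converting.
                needs_fix = im.mode == "P" and "transparency" in im.info
                rgba = im.convert("RGBA") if needs_fix else None
        except OSError:
            # Not a readable image (e.g. a broken download): leave it alone.
            continue
        if rgba is not None:
            # Pillow identifies files by content, so PNG data under the
            # original file name still opens normally afterwards.
            rgba.save(p, format="PNG")
            converted.append(p)
    return converted
```

Under that assumption, the call would go inside the for loop, after the download step and before the resize step, once per search-term folder.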

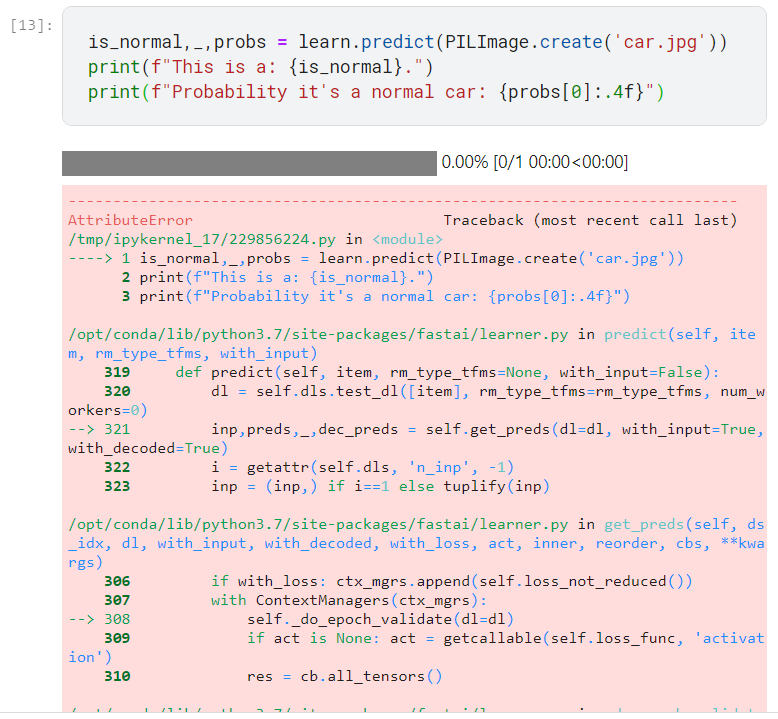

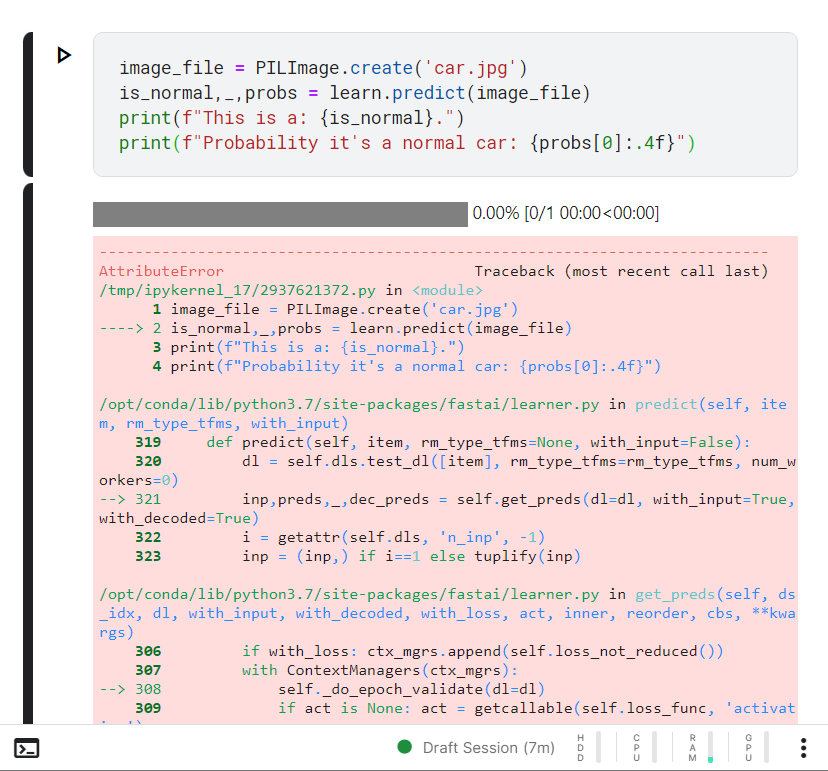

- I’ve tried storing the image in a variable and then passing it to the predict method. It still kinda gives me the same error.

Here’s the full error message:

---------------------------------------------------------------------------

AttributeError Traceback (most recent call last)

/tmp/ipykernel_17/2937621372.py in <module>

1 image_file = PILImage.create('car.jpg')

----> 2 is_normal,_,probs = learn.predict(image_file)

3 print(f"This is a: {is_normal}.")

4 print(f"Probability it's a normal car: {probs[0]:.4f}")

/opt/conda/lib/python3.7/site-packages/fastai/learner.py in predict(self, item, rm_type_tfms, with_input)

319 def predict(self, item, rm_type_tfms=None, with_input=False):

320 dl = self.dls.test_dl([item], rm_type_tfms=rm_type_tfms, num_workers=0)

--> 321 inp,preds,_,dec_preds = self.get_preds(dl=dl, with_input=True, with_decoded=True)

322 i = getattr(self.dls, 'n_inp', -1)

323 inp = (inp,) if i==1 else tuplify(inp)

/opt/conda/lib/python3.7/site-packages/fastai/learner.py in get_preds(self, ds_idx, dl, with_input, with_decoded, with_loss, act, inner, reorder, cbs, **kwargs)

306 if with_loss: ctx_mgrs.append(self.loss_not_reduced())

307 with ContextManagers(ctx_mgrs):

--> 308 self._do_epoch_validate(dl=dl)

309 if act is None: act = getcallable(self.loss_func, 'activation')

310 res = cb.all_tensors()

/opt/conda/lib/python3.7/site-packages/fastai/learner.py in _do_epoch_validate(self, ds_idx, dl)

242 if dl is None: dl = self.dls[ds_idx]

243 self.dl = dl

--> 244 with torch.no_grad(): self._with_events(self.all_batches, 'validate', CancelValidException)

245

246 def _do_epoch(self):

/opt/conda/lib/python3.7/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)

197

198 def _with_events(self, f, event_type, ex, final=noop):

--> 199 try: self(f'before_{event_type}'); f()

200 except ex: self(f'after_cancel_{event_type}')

201 self(f'after_{event_type}'); final()

/opt/conda/lib/python3.7/site-packages/fastai/learner.py in all_batches(self)

203 def all_batches(self):

204 self.n_iter = len(self.dl)

--> 205 for o in enumerate(self.dl): self.one_batch(*o)

206

207 def _backward(self): self.loss_grad.backward()

/opt/conda/lib/python3.7/site-packages/fastai/data/load.py in __iter__(self)

125 self.before_iter()

126 self.__idxs=self.get_idxs() # called in context of main process (not workers/subprocesses)

--> 127 for b in _loaders[self.fake_l.num_workers==0](self.fake_l):

128 # pin_memory causes tuples to be converted to lists, so convert them back to tuples

129 if self.pin_memory and type(b) == list: b = tuple(b)

/opt/conda/lib/python3.7/site-packages/torch/utils/data/dataloader.py in __next__(self)

519 if self._sampler_iter is None:

520 self._reset()

--> 521 data = self._next_data()

522 self._num_yielded += 1

523 if self._dataset_kind == _DatasetKind.Iterable and \

/opt/conda/lib/python3.7/site-packages/torch/utils/data/dataloader.py in _next_data(self)

559 def _next_data(self):

560 index = self._next_index() # may raise StopIteration

--> 561 data = self._dataset_fetcher.fetch(index) # may raise StopIteration

562 if self._pin_memory:

563 data = _utils.pin_memory.pin_memory(data)

/opt/conda/lib/python3.7/site-packages/torch/utils/data/_utils/fetch.py in fetch(self, possibly_batched_index)

32 raise StopIteration

33 else:

---> 34 data = next(self.dataset_iter)

35 return self.collate_fn(data)

36

/opt/conda/lib/python3.7/site-packages/fastai/data/load.py in create_batches(self, samps)

136 if self.dataset is not None: self.it = iter(self.dataset)

137 res = filter(lambda o:o is not None, map(self.do_item, samps))

--> 138 yield from map(self.do_batch, self.chunkify(res))

139

140 def new(self, dataset=None, cls=None, **kwargs):

/opt/conda/lib/python3.7/site-packages/fastcore/basics.py in chunked(it, chunk_sz, drop_last, n_chunks)

228 if not isinstance(it, Iterator): it = iter(it)

229 while True:

--> 230 res = list(itertools.islice(it, chunk_sz))

231 if res and (len(res)==chunk_sz or not drop_last): yield res

232 if len(res)<chunk_sz: return

/opt/conda/lib/python3.7/site-packages/fastai/data/load.py in do_item(self, s)

151 def prebatched(self): return self.bs is None

152 def do_item(self, s):

--> 153 try: return self.after_item(self.create_item(s))

154 except SkipItemException: return None

155 def chunkify(self, b): return b if self.prebatched else chunked(b, self.bs, self.drop_last)

/opt/conda/lib/python3.7/site-packages/fastai/data/load.py in create_item(self, s)

158 def retain(self, res, b): return retain_types(res, b[0] if is_listy(b) else b)

159 def create_item(self, s):

--> 160 if self.indexed: return self.dataset[s or 0]

161 elif s is None: return next(self.it)

162 else: raise IndexError("Cannot index an iterable dataset numerically - must use `None`.")

/opt/conda/lib/python3.7/site-packages/fastai/data/core.py in __getitem__(self, it)

456

457 def __getitem__(self, it):

--> 458 res = tuple([tl[it] for tl in self.tls])

459 return res if is_indexer(it) else list(zip(*res))

460

/opt/conda/lib/python3.7/site-packages/fastai/data/core.py in <listcomp>(.0)

456

457 def __getitem__(self, it):

--> 458 res = tuple([tl[it] for tl in self.tls])

459 return res if is_indexer(it) else list(zip(*res))

460

/opt/conda/lib/python3.7/site-packages/fastai/data/core.py in __getitem__(self, idx)

415 res = super().__getitem__(idx)

416 if self._after_item is None: return res

--> 417 return self._after_item(res) if is_indexer(idx) else res.map(self._after_item)

418

419 # %% ../../nbs/03_data.core.ipynb 53

/opt/conda/lib/python3.7/site-packages/fastai/data/core.py in _after_item(self, o)

375 raise

376 def subset(self, i): return self._new(self._get(self.splits[i]), split_idx=i)

--> 377 def _after_item(self, o): return self.tfms(o)

378 def __repr__(self): return f"{self.__class__.__name__}: {self.items}\ntfms - {self.tfms.fs}"

379 def __iter__(self): return (self[i] for i in range(len(self)))

/opt/conda/lib/python3.7/site-packages/fastcore/transform.py in __call__(self, o)

206 self.fs = self.fs.sorted(key='order')

207

--> 208 def __call__(self, o): return compose_tfms(o, tfms=self.fs, split_idx=self.split_idx)

209 def __repr__(self): return f"Pipeline: {' -> '.join([f.name for f in self.fs if f.name != 'noop'])}"

210 def __getitem__(self,i): return self.fs[i]

/opt/conda/lib/python3.7/site-packages/fastcore/transform.py in compose_tfms(x, tfms, is_enc, reverse, **kwargs)

156 for f in tfms:

157 if not is_enc: f = f.decode

--> 158 x = f(x, **kwargs)

159 return x

160

/opt/conda/lib/python3.7/site-packages/fastcore/transform.py in __call__(self, x, **kwargs)

79 @property

80 def name(self): return getattr(self, '_name', _get_name(self))

---> 81 def __call__(self, x, **kwargs): return self._call('encodes', x, **kwargs)

82 def decode (self, x, **kwargs): return self._call('decodes', x, **kwargs)

83 def __repr__(self): return f'{self.name}:\nencodes: {self.encodes}decodes: {self.decodes}'

/opt/conda/lib/python3.7/site-packages/fastcore/transform.py in _call(self, fn, x, split_idx, **kwargs)

89 def _call(self, fn, x, split_idx=None, **kwargs):

90 if split_idx!=self.split_idx and self.split_idx is not None: return x

---> 91 return self._do_call(getattr(self, fn), x, **kwargs)

92

93 def _do_call(self, f, x, **kwargs):

/opt/conda/lib/python3.7/site-packages/fastcore/transform.py in _do_call(self, f, x, **kwargs)

95 if f is None: return x

96 ret = f.returns(x) if hasattr(f,'returns') else None

---> 97 return retain_type(f(x, **kwargs), x, ret)

98 res = tuple(self._do_call(f, x_, **kwargs) for x_ in x)

99 return retain_type(res, x)

/opt/conda/lib/python3.7/site-packages/fastcore/dispatch.py in __call__(self, *args, **kwargs)

118 elif self.inst is not None: f = MethodType(f, self.inst)

119 elif self.owner is not None: f = MethodType(f, self.owner)

--> 120 return f(*args, **kwargs)

121

122 def __get__(self, inst, owner):

/opt/conda/lib/python3.7/site-packages/fastai/vision/core.py in create(cls, fn, **kwargs)

123 if isinstance(fn,bytes): fn = io.BytesIO(fn)

124 if isinstance(fn,Image.Image) and not isinstance(fn,cls): return cls(fn)

--> 125 return cls(load_image(fn, **merge(cls._open_args, kwargs)))

126

127 def show(self, ctx=None, **kwargs):

/opt/conda/lib/python3.7/site-packages/fastai/vision/core.py in load_image(fn, mode)

96 def load_image(fn, mode=None):

97 "Open and load a `PIL.Image` and convert to `mode`"

---> 98 im = Image.open(fn)

99 im.load()

100 im = im._new(im.im)

/opt/conda/lib/python3.7/site-packages/PIL/Image.py in open(fp, mode, formats)

2919 exclusive_fp = True

2920

-> 2921 prefix = fp.read(16)

2922

2923 preinit()

/opt/conda/lib/python3.7/site-packages/PIL/Image.py in __getattr__(self, name)

539 )

540 return self._category

--> 541 raise AttributeError(name)

542

543 @property

AttributeError: read
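Reading the last frames above: `PILImage.create` falls through to `load_image`, which calls `Image.open(fn)`, and `Image.open` then tries `fp.read(16)` on whatever it was given. That fits `fn` being an already-loaded image object rather than a path or file. The sketch below reproduces only that last step with plain Pillow (no fastai). `try_open` is a hypothetical helper for illustration.

```python
import io
from PIL import Image

def try_open(source):
    """Return the image size if Image.open succeeds, else the exception name."""
    try:
        with Image.open(source) as im:
            return im.size
    except AttributeError as e:
        # Raised when `source` has no .read(), e.g. an in-memory Image object.
        return type(e).__name__

img = Image.new("RGB", (8, 8))

# A file-like object holding encoded image bytes is fine.
buf = io.BytesIO()
img.save(buf, format="PNG")
buf.seek(0)
print(try_open(buf))

# An already-loaded image is neither a path nor a file, so .read() fails.
print(try_open(img))
```

If that reading is right, one thing to try (an assumption, not confirmed in this thread) is passing the file path itself to `learn.predict`, so that the image is only created once inside the pipeline.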

Edit: I was able to fix the second error, thanks to @AllenK.