Hey guys,

I started learning PyTorch recently and am trying to implement a learning rate finder in PyTorch from scratch, but I don't understand how it works. I am confused because the related techniques (CLR, SGDR, CosineAnnealing) all seem interconnected.

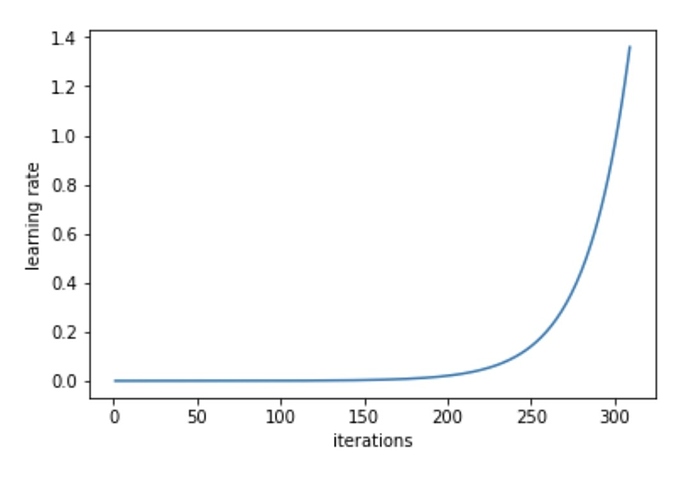

To understand it, I tried looking into the fastai library, but I got lost. The documentation for lr_find() says that it uses the technique described in the CLR paper. As far as I know, CLR uses a triangular learning rate schedule. So why do we get the plot of learning rate vs. iterations shown above when we use lr_find()?
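For reference, here is a minimal sketch of what I understand the triangular policy from the CLR paper to be. The function name and the default values for base_lr, max_lr, and step_size are placeholders I picked for illustration; none of this is taken from fastai.

```python
import math

def triangular_lr(iteration, base_lr=1e-3, max_lr=1e-2, step_size=100):
    """Triangular cyclical learning rate, as I read it from the CLR paper.

    step_size is the number of iterations in half a cycle (hypothetical default).
    """
    # Which cycle we are in (1-based); one full cycle is 2 * step_size iterations.
    cycle = math.floor(1 + iteration / (2 * step_size))
    # Distance from the peak of the current cycle, scaled to [0, 1].
    x = abs(iteration / step_size - 2 * cycle + 1)
    # Linear interpolation between base_lr (x = 1) and max_lr (x = 0).
    return base_lr + (max_lr - base_lr) * max(0.0, 1.0 - x)

# Learning rates for two full cycles; plotting this gives the triangular shape.
lrs = [triangular_lr(i) for i in range(400)]
```

With these placeholder values the rate climbs linearly from base_lr to max_lr over 100 iterations, falls back over the next 100, and then repeats.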

If it uses CLR, then the plot should look like the one in the paper (a triangular plot). Also, lr_find() has two parameters, start_lr and end_lr, which have default values of 1e-5 and 10 respectively. But in the graph above, the highest learning rate (on the y-axis) is 1.4. Why?

I think I am confused because all of this is so interconnected. Can anyone clear up my confusion about where exactly CLR, SGDR, and CosineAnnealing are used? And how does lr_find() use CLR to find the optimal learning rate?

Thanks