I’m trying to build some intuition about learning rates, weight decay, and training/validation losses. I trained a U-Net to segment aerial images into classes such as building, tree, car, etc.

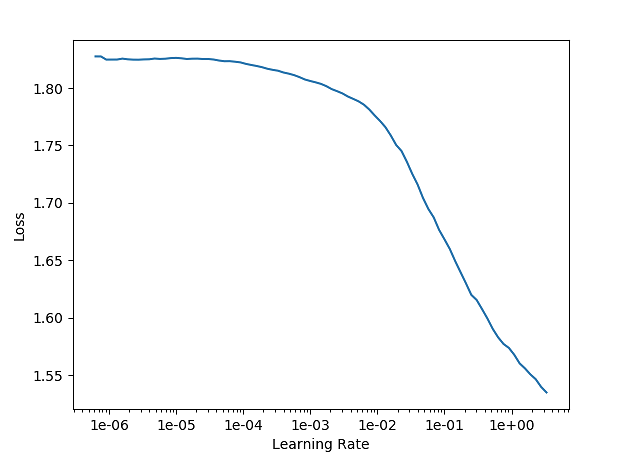

The learning rate finder plot looks like this:

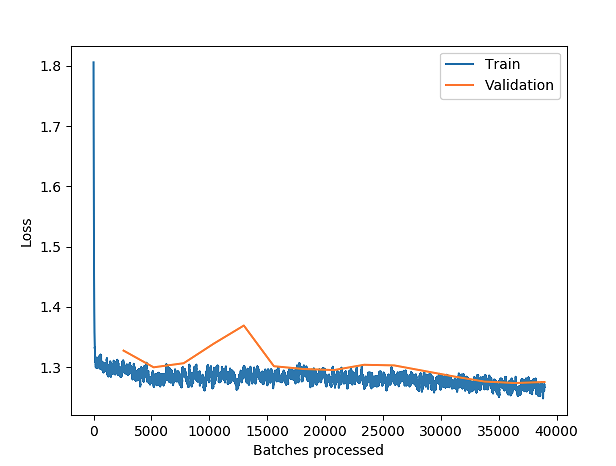
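To build intuition for what that plot measures, here is a toy sketch of a learning rate range test, which is roughly what an LR finder does: raise the learning rate geometrically on every step, record the loss, and stop once the loss blows up. The "model" is a single weight with loss 0.5·w² (an assumption chosen so the maths stays transparent), and the start/end rates, step count and stop factor are illustrative values.

```python
import math


def lr_range_test(start_lr=1e-7, end_lr=10.0, num_steps=100, stop_factor=4.0):
    """Toy LR range test on loss(w) = 0.5 * w**2, starting from w = 1.

    Returns the learning rates tried and the loss recorded before each step.
    """
    w = 1.0
    # Multiply the LR by a constant factor each step (geometric sweep).
    mult = (end_lr / start_lr) ** (1.0 / (num_steps - 1))
    lr = start_lr
    lrs, losses = [], []
    best = math.inf
    for _ in range(num_steps):
        loss = 0.5 * w * w
        lrs.append(lr)
        losses.append(loss)
        best = min(best, loss)
        # Stop once the loss has blown up relative to the best seen so far.
        if loss > stop_factor * best:
            break
        grad = w  # derivative of 0.5 * w**2
        w -= lr * grad
        lr *= mult
    return lrs, losses


lrs, losses = lr_range_test()
print(f"stopped after {len(lrs)} steps, at lr = {lrs[-1]:.2f}")
```

For this toy loss each step multiplies w by (1 - lr), so the loss shrinks while lr < 2 and grows once lr > 2, and the sweep stops shortly after crossing that threshold. A real network has different curvature per layer and per batch, which is why a common rule of thumb is to pick a rate somewhat below where the curve bottoms out rather than at the minimum itself.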

Based on that, I set the learning rate to 1e-1 and the weight decay to wd=1e-2, then trained for 15 epochs (batch size 16) with `fit_one_cycle(15, 1e-1)`. The resulting training losses are:

This seems unusual. Could the weight decay be too aggressive, forcing the model to be too “simple”? Could the learning rate be so high that the loss just oscillates? Or am I missing something else?
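One way to judge whether wd=1e-2 is aggressive at lr=1e-1 is to look at the decay term in isolation. Assuming the optimizer applies decoupled (AdamW-style) weight decay, each step multiplies every decayed weight by (1 - lr·wd) in addition to the gradient update. The sketch below computes how far that factor alone would shrink a weight over one epoch at a constant learning rate. The 3,250 steps per epoch is an assumption (52,000 chips / batch size 16, ignoring any validation split), and in real training the gradient pushes back, so this shows the strength of the pull, not where the weights end up.

```python
def decay_only_shrink(lr: float, wd: float, steps: int) -> float:
    """Scale factor applied to a weight by decoupled weight decay alone,
    i.e. (1 - lr * wd) ** steps, with the learning rate held constant."""
    return (1.0 - lr * wd) ** steps


steps_per_epoch = 52_000 // 16  # assumes every chip is a training sample

for lr in (1e-1, 1e-2, 1e-3):
    factor = decay_only_shrink(lr, wd=1e-2, steps=steps_per_epoch)
    print(f"lr={lr:g}, wd=1e-2: weights scaled by {factor:.3f} per epoch from decay alone")
```

Because the per-step decrement is the product lr·wd, the pull at lr=1e-1 is a hundred times stronger per step than at lr=1e-3 with the same wd. Under a one-cycle schedule the rate is only near its peak for part of training, so the real effect is smaller than the constant-rate figure.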

My dataset consists of 52,000 aerial image chips, each 256x256 pixels at 10 cm GSD.
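With 52,000 chips at batch size 16, 15 epochs comes to about 48,750 optimizer steps (assuming every chip is used for training). To see how a peak learning rate of 1e-1 is spread across those steps, here is a minimal sketch of a cosine one-cycle schedule: warm up from max_lr/div to max_lr over the first pct_start of training, then anneal down to a very small rate. The `div`, `final_div` and `pct_start` values are illustrative assumptions, not necessarily the defaults of the library used for this run.

```python
import math


def one_cycle_lr(step, total_steps, max_lr, div=25.0, final_div=1e4, pct_start=0.25):
    """Cosine one-cycle schedule (illustrative parameters).

    Warms up from max_lr / div to max_lr over the first pct_start of training,
    then anneals down to max_lr / (div * final_div) at the last step.
    """
    warmup_steps = int(total_steps * pct_start)
    start_lr = max_lr / div
    end_lr = max_lr / (div * final_div)
    if step < warmup_steps:
        t = step / warmup_steps
        return start_lr + (max_lr - start_lr) * (1 - math.cos(math.pi * t)) / 2
    t = (step - warmup_steps) / max(1, total_steps - warmup_steps - 1)
    return max_lr + (end_lr - max_lr) * (1 - math.cos(math.pi * t)) / 2


steps_per_epoch = 52_000 // 16  # assumes no chips are held out for validation
total_steps = 15 * steps_per_epoch
schedule = [one_cycle_lr(s, total_steps, max_lr=1e-1) for s in range(total_steps)]

# Fraction of training spent at or above half the peak rate.
high = sum(lr >= 0.05 for lr in schedule) / total_steps
print(f"{total_steps} steps, peak lr {max(schedule):g}, {high:.0%} of steps at lr >= 0.05")
```

If the loss curve looks odd, plotting `schedule` against step next to the per-batch training loss is a quick way to check whether the trouble coincides with the high-learning-rate middle of the cycle.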