Hi,

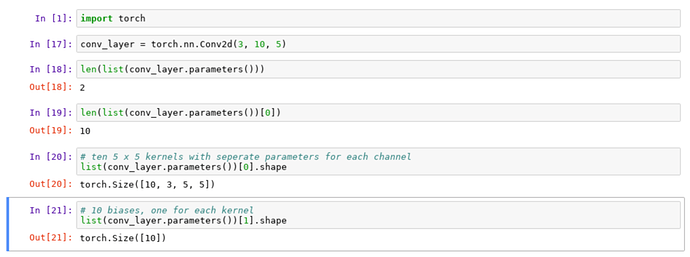

I am currently going through the old Part 1 lectures. In Lesson 4 (video: https://youtu.be/V2h3IOBDvrA?t=252), convolution is explained in a spreadsheet. There, Jeremy explains that for multi-channel input the filter is not a 2D matrix but rather a tensor. But in the VGG code and the Convolution2D function documentation, I see that it takes a normal 2D filter rather than a tensor, even when the image has multiple channels.

Below is my understanding of the convolution flow. I would like to know whether it is correct or whether I am missing something:

A color image (of a cat or a dog) has an input size of 3x224x224. Here 224x224 is the pixel dimension and 3 is the number of channels: red, green, blue.
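
As a small sketch of that layout (the zero-filled array is just a hypothetical stand-in for a real image, and I am assuming the channels come first, as in the 3x224x224 shape above):

```python
import numpy as np

# Hypothetical stand-in for one color image, channels-first: (channels, height, width)
image = np.zeros((3, 224, 224))

# Unpacking along the first axis gives one 224x224 array per channel
red, green, blue = image

print(image.shape, red.shape)
```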

When we pass this image to a convolution layer, `Convolution2D(64, 3, 3, activation='relu')`, we are applying 64 filters, each of size 3x3, on each channel(?). So,

- filter1 runs its 3x3 matrix over the red channel (which is of size 224x224), and we get an output of 224x224 (assuming zero padding).

- Similarly, filter1 is also run on the green & blue channels, giving 2 more outputs of size 224x224 each.

- Is my understanding up to here correct? If so, are the outputs of all 3 channels somehow merged into a single 224x224 convolution? I ask because, according to the Convolution2D documentation, applying 64 filters gives 64 convolutions for each color input image.

- If the above understanding is correct, then the same process is repeated for filter2 to filter64 to get a total of 64 convolutions, which would agree with the documentation.
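
To make the flow above concrete, here is a small NumPy sketch of what I am describing. It is only my guess, not how Keras implements it: the helper `filter_channel`, the random image and filters, and in particular the "merge by summing over channels" step are my own assumptions.

```python
import numpy as np

def filter_channel(channel, kernel):
    """Slide one 2D kernel over one 2D channel, zero-padded so the
    output keeps the input size (no kernel flip, i.e. cross-correlation)."""
    kh, kw = kernel.shape
    h, w = channel.shape
    padded = np.pad(channel, ((kh // 2, kh // 2), (kw // 2, kw // 2)), mode="constant")
    out = np.zeros((h, w))
    # Accumulate one shifted, weighted copy of the image per kernel entry
    for di in range(kh):
        for dj in range(kw):
            out += kernel[di, dj] * padded[di:di + h, dj:dj + w]
    return out

rng = np.random.default_rng(0)
image = rng.random((3, 224, 224))           # hypothetical RGB image, channels-first
filters = rng.standard_normal((64, 3, 3))   # 64 filters, each a plain 3x3 matrix (my reading)

outputs = np.stack([
    # ASSUMPTION: the three per-channel outputs are merged by summing them
    sum(filter_channel(image[c], f) for c in range(3))
    for f in filters
])
activated = np.maximum(outputs, 0)          # relu

print(activated.shape)  # (64, 224, 224)
```

With this reading, each filter gives one 224x224 output and the layer gives 64 of them, which matches the count in the documentation. What I cannot tell from this is whether the same 3x3 weights are really shared across the 3 channels, or whether each channel gets its own 3x3 slice as in the spreadsheet from the lecture.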

Please let me know if this is how convolution works. And if not, what am I missing here?