I’ve been working through the fastai 1.x notebooks in the examples folder of the repo. I’ve been able to run and use all of the notebooks except the ULMFiT one.

When I run

learn.fit_one_cycle(1, 2e-2, moms=(0.8,0.7), wd=0.1)

I encounter the error:

RuntimeError: "bernoulli_scalar_cpu_" not implemented for 'Half'

A Google search for this error returned exactly one result (which is amazing in itself), from the PyTorch GitHub repo: https://github.com/pytorch/pytorch/issues/25946

My interpretation is that bernoulli_ is not implemented for half-precision ('Half') tensors on the CPU in PyTorch, so I’m a bit flummoxed as to how I can work around or resolve this issue. Has anyone encountered this error before? Is it going to be addressed by a fix in the fastai 1.x repo?

Thanks in advance,

Gav

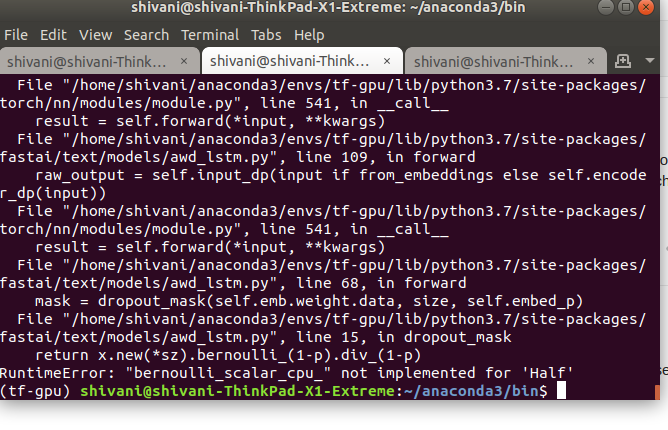

The full error message is below:

RuntimeError Traceback (most recent call last)

in

----> 1 learn.fit_one_cycle(1, 2e-2, moms=(0.8,0.7), wd=0.1)

~/anaconda3/envs/fastai/lib/python3.7/site-packages/fastai/train.py in fit_one_cycle(learn, cyc_len, max_lr, moms, div_factor, pct_start, final_div, wd, callbacks, tot_epochs, start_epoch)

20 callbacks.append(OneCycleScheduler(learn, max_lr, moms=moms, div_factor=div_factor, pct_start=pct_start,

21 final_div=final_div, tot_epochs=tot_epochs, start_epoch=start_epoch))

---> 22 learn.fit(cyc_len, max_lr, wd=wd, callbacks=callbacks)

23

24 def lr_find(learn:Learner, start_lr:Floats=1e-7, end_lr:Floats=10, num_it:int=100, stop_div:bool=True, wd:float=None):

~/anaconda3/envs/fastai/lib/python3.7/site-packages/fastai/basic_train.py in fit(self, epochs, lr, wd, callbacks)

200 callbacks = [cb(self) for cb in self.callback_fns + listify(defaults.extra_callback_fns)] + listify(callbacks)

201 self.cb_fns_registered = True

--> 202 fit(epochs, self, metrics=self.metrics, callbacks=self.callbacks+callbacks)

203

204 def create_opt(self, lr:Floats, wd:Floats=0.)->None:

~/anaconda3/envs/fastai/lib/python3.7/site-packages/fastai/basic_train.py in fit(epochs, learn, callbacks, metrics)

99 for xb,yb in progress_bar(learn.data.train_dl, parent=pbar):

100 xb, yb = cb_handler.on_batch_begin(xb, yb)

--> 101 loss = loss_batch(learn.model, xb, yb, learn.loss_func, learn.opt, cb_handler)

102 if cb_handler.on_batch_end(loss): break

103

~/anaconda3/envs/fastai/lib/python3.7/site-packages/fastai/basic_train.py in loss_batch(model, xb, yb, loss_func, opt, cb_handler)

24 if not is_listy(xb): xb = [xb]

25 if not is_listy(yb): yb = [yb]

---> 26 out = model(*xb)

27 out = cb_handler.on_loss_begin(out)

28

~/anaconda3/envs/fastai/lib/python3.7/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

545 result = self._slow_forward(*input, **kwargs)

546 else:

--> 547 result = self.forward(*input, **kwargs)

548 for hook in self._forward_hooks.values():

549 hook_result = hook(self, input, result)

~/anaconda3/envs/fastai/lib/python3.7/site-packages/torch/nn/modules/container.py in forward(self, input)

90 def forward(self, input):

91 for module in self._modules.values():

---> 92 input = module(input)

93 return input

94

~/anaconda3/envs/fastai/lib/python3.7/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

545 result = self._slow_forward(*input, **kwargs)

546 else:

--> 547 result = self.forward(*input, **kwargs)

548 for hook in self._forward_hooks.values():

549 hook_result = hook(self, input, result)

~/anaconda3/envs/fastai/lib/python3.7/site-packages/fastai/text/models/awd_lstm.py in forward(self, input, from_embeddings)

107 self.bs=bs

108 self.reset()

--> 109 raw_output = self.input_dp(input if from_embeddings else self.encoder_dp(input))

110 new_hidden,raw_outputs,outputs = [],[],[]

111 for l, (rnn,hid_dp) in enumerate(zip(self.rnns, self.hidden_dps)):

~/anaconda3/envs/fastai/lib/python3.7/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

545 result = self._slow_forward(*input, **kwargs)

546 else:

--> 547 result = self.forward(*input, **kwargs)

548 for hook in self._forward_hooks.values():

549 hook_result = hook(self, input, result)

~/anaconda3/envs/fastai/lib/python3.7/site-packages/fastai/text/models/awd_lstm.py in forward(self, words, scale)

66 if self.training and self.embed_p != 0:

67 size = (self.emb.weight.size(0),1)

---> 68 mask = dropout_mask(self.emb.weight.data, size, self.embed_p)

69 masked_embed = self.emb.weight * mask

70 else: masked_embed = self.emb.weight

~/anaconda3/envs/fastai/lib/python3.7/site-packages/fastai/text/models/awd_lstm.py in dropout_mask(x, sz, p)

13 def dropout_mask(x:Tensor, sz:Collection[int], p:float):

14 "Return a dropout mask of the same type as x, size sz, with probability p to cancel an element."

---> 15 return x.new(*sz).bernoulli_(1-p).div_(1-p)

16

17 class RNNDropout(Module):

RuntimeError: "bernoulli_scalar_cpu_" not implemented for 'Half'
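
In case it helps anyone reproduce this, here is a minimal sketch that I think isolates the failing call. It copies the dropout_mask one-liner from the last frame of the traceback; the tensor shape, the 0.5 drop probability, and the half_supported flag are made up for illustration and are not from the notebook. My assumption is that the half-precision CPU path is what fails on the PyTorch build in my environment while the float32 path works. Other builds may accept half tensors here, so the sketch catches the error rather than assuming it.

```python
import torch

def dropout_mask(x, sz, p):
    # Same one-liner as fastai's awd_lstm.dropout_mask shown in the traceback:
    # a mask of the same dtype/device as x, with entries 0 or 1/(1-p).
    return x.new(*sz).bernoulli_(1 - p).div_(1 - p)

# Illustrative stand-in for the embedding weights (float32, on the CPU).
weights = torch.randn(10, 4)

# float32 on CPU: this path is expected to work.
mask = dropout_mask(weights, (10, 1), 0.5)
print(mask.dtype, mask.shape)

# float16 ('Half') on CPU: this is the path I assume triggers the error.
try:
    dropout_mask(weights.half(), (10, 1), 0.5)
    half_supported = True
except RuntimeError as err:
    half_supported = False
    print("RuntimeError:", err)

print("bernoulli_ on CPU Half tensors supported:", half_supported)
```

If the second call fails while the first succeeds, that would suggest the model weights are in half precision while still sitting on the CPU, which is a guess on my part rather than something I have confirmed.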