Maybe “humdrum” is not the right word, but at least it rhymes.

Anyway, given the interest in seq2seq and its inclusion in part 2 of this course, I’m making the following notebook available to all in anticipation of things to come in part 2:

seq2seq with attention via the DataBlock API

The notebook illustrates the following:

- How to use the DataBlock API to prepare your datasets for training

- How to implement “attention” in a custom PyTorch model

- How to examine the results of your seq2seq model

- How to visualize “attention”
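For anyone who wants a feel for the attention and masking pieces before opening the notebook, here is a minimal sketch of additive (Bahdanau-style) attention in PyTorch. It is an illustration under my own assumptions, not the notebook's actual code: the class name `AdditiveAttention`, its dimension arguments, and the convention that `src_mask` is `True` for real tokens are all hypothetical. Padded source positions are filled with `-inf` before the softmax so they receive zero attention weight, and the returned `weights` tensor is what you would plot to visualize attention.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class AdditiveAttention(nn.Module):
    """Bahdanau-style additive attention over encoder outputs (hypothetical sketch)."""

    def __init__(self, enc_dim: int, dec_dim: int, attn_dim: int):
        super().__init__()
        self.enc_proj = nn.Linear(enc_dim, attn_dim, bias=False)
        self.dec_proj = nn.Linear(dec_dim, attn_dim)
        self.v = nn.Linear(attn_dim, 1, bias=False)

    def forward(self, dec_hidden, enc_outputs, src_mask):
        # dec_hidden: (bs, dec_dim); enc_outputs: (bs, src_len, enc_dim)
        # src_mask: (bs, src_len) bool, True where the token is real (assumed convention)
        energy = torch.tanh(self.enc_proj(enc_outputs) + self.dec_proj(dec_hidden).unsqueeze(1))
        scores = self.v(energy).squeeze(-1)                      # (bs, src_len)
        # Padding positions get -inf, so softmax gives them exactly zero weight
        scores = scores.masked_fill(~src_mask, float("-inf"))
        weights = F.softmax(scores, dim=-1)                      # (bs, src_len)
        context = torch.bmm(weights.unsqueeze(1), enc_outputs).squeeze(1)  # (bs, enc_dim)
        return context, weights

# Tiny demo: batch of 2, source length 4, second sequence has only 2 real tokens
torch.manual_seed(0)
attn = AdditiveAttention(enc_dim=8, dec_dim=6, attn_dim=5)
enc_out = torch.randn(2, 4, 8)
dec_h = torch.randn(2, 6)
lengths = torch.tensor([4, 2])
mask = torch.arange(4)[None, :] < lengths[:, None]  # build the mask from sequence lengths
ctx, w = attn(dec_h, enc_out, mask)
```

Each row of `w` sums to 1 over the real tokens only, which is why masking matters: without it, the decoder would spend some of its attention on padding.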

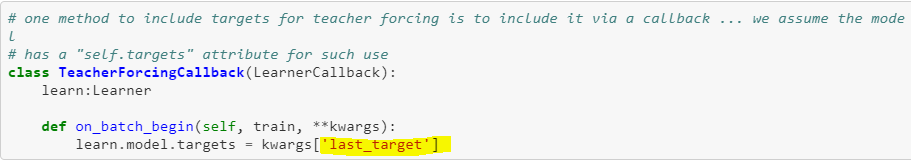

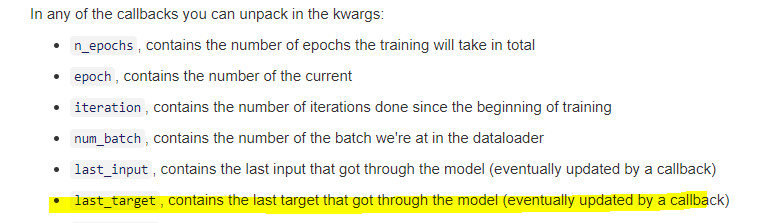

It builds heavily on the 2018 part 2 course and includes a few other tricks I’ve learned along the way. For example, you’ll see masks, PyTorch’s pad_packed_sequence/pack_padded_sequence functions, and a couple of ways to implement “teacher forcing”. You’ll also get a sense of how the DataBlock API can be used for seq2seq tasks in general.
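Here is a rough sketch of the packing and teacher-forcing ideas, assuming a plain GRU encoder/decoder pair rather than reproducing the notebook. `Encoder`, `Decoder`, `pad_idx=1`, and `bos_idx` are hypothetical names chosen for illustration. The encoder packs the padded batch so the RNN skips pad tokens, then unpacks it back into a padded tensor; the decoder shows just one teacher-forcing variant, a per-step coin flip between the ground-truth token and the model's own prediction.

```python
import random
import torch
import torch.nn as nn
from torch.nn.utils.rnn import pack_padded_sequence, pad_packed_sequence

class Encoder(nn.Module):
    def __init__(self, vocab_sz, emb_sz, hid_sz, pad_idx=1):  # pad_idx=1 is an assumption
        super().__init__()
        self.emb = nn.Embedding(vocab_sz, emb_sz, padding_idx=pad_idx)
        self.rnn = nn.GRU(emb_sz, hid_sz, batch_first=True)

    def forward(self, src, lengths):
        # src: (bs, src_len) padded token ids; lengths: true length of each sequence
        packed = pack_padded_sequence(self.emb(src), lengths.cpu(),
                                      batch_first=True, enforce_sorted=False)
        packed_out, hidden = self.rnn(packed)  # the GRU never processes pad tokens
        out, _ = pad_packed_sequence(packed_out, batch_first=True,
                                     total_length=src.size(1))
        return out, hidden  # out: (bs, src_len, hid_sz), zeros at pad positions

class Decoder(nn.Module):
    def __init__(self, vocab_sz, emb_sz, hid_sz):
        super().__init__()
        self.emb = nn.Embedding(vocab_sz, emb_sz)
        self.rnn = nn.GRU(emb_sz, hid_sz, batch_first=True)
        self.out = nn.Linear(hid_sz, vocab_sz)

    def forward(self, hidden, targ, bos_idx, teacher_forcing=0.5):
        bs, targ_len = targ.shape
        dec_inp = torch.full((bs,), bos_idx, dtype=torch.long, device=targ.device)
        logits = []
        for t in range(targ_len):
            out, hidden = self.rnn(self.emb(dec_inp).unsqueeze(1), hidden)
            step_logits = self.out(out.squeeze(1))  # (bs, vocab_sz)
            logits.append(step_logits)
            # Teacher forcing: with probability `teacher_forcing`, feed the true token next;
            # otherwise feed the model's own greedy prediction
            if self.training and random.random() < teacher_forcing:
                dec_inp = targ[:, t]
            else:
                dec_inp = step_logits.argmax(-1)
        return torch.stack(logits, dim=1)  # (bs, targ_len, vocab_sz)

# Tiny demo: second source sequence is padded with the assumed pad_idx of 1
torch.manual_seed(0)
random.seed(0)
enc = Encoder(vocab_sz=20, emb_sz=8, hid_sz=16)
dec = Decoder(vocab_sz=20, emb_sz=8, hid_sz=16)
src = torch.tensor([[5, 6, 7, 8], [9, 10, 1, 1]])
lengths = torch.tensor([4, 2])
enc_out, h = enc(src, lengths)
targ = torch.randint(2, 20, (2, 3))
logits = dec(h, targ, bos_idx=0, teacher_forcing=0.5)
```

Another common variant flips the coin once per batch, or anneals the teacher-forcing probability toward zero over training, instead of deciding at every step; which works better is largely an empirical question.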

If you see something that’s wrong or could be improved, please submit a PR!

I’m also making my work available in hopes that folks out there on the forums can help make it better.

I’m sure there are all kinds of improvements that can be made, and I wouldn’t be surprised if I’m doing a thing or two or ten wrong. And so … I’m gratefully accepting PRs to this repo and would welcome anyone interested in adding notebooks that demonstrate other approaches to, and best practices for, tackling seq2seq problems with PyTorch and fast.ai.