I was wondering if anyone had experimented with test-time augmentation (TTA) for image segmentation? I was working on something last night and it struck me that it’s totally possible to do, for example, flips on your inputs for segmentation, as long as you make sure to flip the predictions back. It could have benefits similar to those you see with classification.
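To make the idea concrete, here is a minimal NumPy sketch of flip-based TTA for segmentation. It is not fastai code: `flip_tta_predict`, `predict_fn` and `toy_model` are hypothetical names, and `predict_fn` is assumed to map a batch of shape (N, H, W) to per-pixel predictions of the same shape.

```python
import numpy as np

def flip_tta_predict(predict_fn, images):
    """Average predictions over the original batch and its left-right flip.

    predict_fn is a hypothetical stand-in for a segmentation model:
    it takes an (N, H, W) array and returns an (N, H, W) array.
    """
    plain = predict_fn(images)
    flipped = predict_fn(images[..., ::-1])  # flip the inputs left-right
    unflipped = flipped[..., ::-1]           # flip the predictions back
    return (plain + unflipped) / 2.0

def toy_model(batch):
    # Toy per-pixel "model": marks pixels brighter than 0.5.
    return (batch > 0.5).astype(np.float32)

images = np.random.RandomState(0).rand(4, 8, 8).astype(np.float32)
averaged = flip_tta_predict(toy_model, images)
```

The key step is the second flip: without it, the flipped prediction would be averaged against a mask that is mirrored relative to the original image.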

Looks like there’s some discussion of TTA in the competition I was working on: https://www.kaggle.com/c/tgs-salt-identification-challenge/discussion/63974#375375

Hi @wdhorton

Have you tried TTA? It’s not entirely clear to me what is being returned from learn.TTA().

- Is only one augmentation applied at a time?

- Are the augmentation parameters stored anywhere so we can reverse the augmentation for an image segmentation challenge?

Thanks,

Mark

Yup it’s possible, and @sgugger has been thinking about this recently. It’s not supported in fastai but you can create your own transforms. (Some things like cropping may be impossible to support.)

Indeed I’d very much like fastai_v1 to be the first library to fully implement TTA with segmentation, points, bounding boxes, etc. Though let’s first finish the basic functionality.

Thanks for the replies, and I appreciate it’s full steam ahead on the dev build. However, I’m more than a little confused as to what learn.TTA() is returning (I’ve browsed the forums but am no clearer). For example, when I get an array tta_preds with dims [5, 400, 128, 128] back, I understand I have 5 predictions for each of 400 images.

However, for each transform returned I’m struggling to decipher what TTA was applied. For what it’s worth, if I could identify the lr flip one that would be great. Annoyingly (unless I’m an idiot, which cannot be ruled out), this doesn’t seem as simple as plotting. Often within the same TTA transform some images are flipped and some are not.

This seems odd; I was expecting/hoping that, say, index [1, :, :, :] of the return from learn.TTA() would always correspond to lr flips.

I hope this makes sense and apologies in advance if I’ve missed something really obvious.

Mark

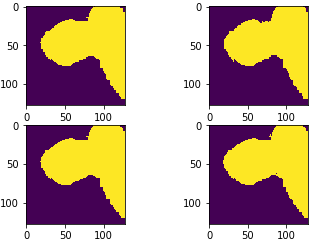

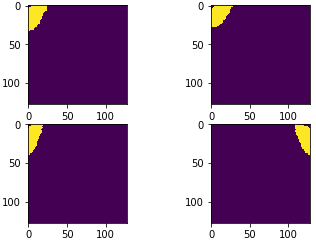

All the same way:

Some flipped:

TTA is always random in the current fastai version. I don’t think it’s possible to do what you want without rewriting that bit from scratch, plus writing new transform code.
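For anyone rolling their own, a deterministic version outside learn.TTA() might look like the sketch below. This is an assumption-laden illustration, not fastai’s implementation: `TTA_TRANSFORMS`, `deterministic_tta` and `predict_fn` are hypothetical names. Each transform is paired with its inverse and applied in a fixed order, so slice 1 of the result is always the lr flip.

```python
import numpy as np

# Fixed, ordered list of (name, forward, inverse) for arrays shaped (N, H, W).
# Hypothetical helper table, not part of fastai.
TTA_TRANSFORMS = [
    ("identity", lambda x: x, lambda y: y),
    ("lr_flip", lambda x: x[..., ::-1], lambda y: y[..., ::-1]),
    ("ud_flip", lambda x: x[..., ::-1, :], lambda y: y[..., ::-1, :]),
]

def deterministic_tta(predict_fn, images):
    """Return predictions stacked as (n_transforms, N, H, W).

    Every slice is mapped back to the original orientation, and the
    slice order always matches TTA_TRANSFORMS.
    """
    preds = []
    for _name, forward, inverse in TTA_TRANSFORMS:
        preds.append(inverse(predict_fn(forward(images))))
    return np.stack(preds)

# Usage with a toy per-pixel "model" standing in for a real one.
images = np.random.RandomState(0).rand(4, 8, 8)
tta_preds = deterministic_tta(lambda batch: batch * 2.0, images)
mean_pred = tta_preds.mean(axis=0)  # simple average over the transforms
```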

I am using a hacky workaround: swapping in a test dataset whose images are already transformed (horizontal flip). For example:

md.test_dl.dataset = TestFilesFlippedDataset(tst_x,tst_x,tfms[1],PATH)

Thank you for the clarification.

Would be nice if Image.apply_tfms could be reversed, at least for the affine part, i.e. with no cropping. This way we could easily implement TTA in segmentation tasks.
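As a rough illustration of a reversible transform with no cropping, rotations by multiples of 90 degrees can be undone exactly in NumPy. This sketch does not use Image.apply_tfms; `rot90_tta` and `predict_fn` are hypothetical names. Arbitrary-angle affine transforms would need interpolation in both directions, so their reversal would only be approximate.

```python
import numpy as np

def rot90_tta(predict_fn, images, k=1):
    """Rotate (N, H, W) inputs by k * 90 degrees, predict, then rotate back.

    predict_fn is a hypothetical model callable that returns an array
    with the same shape as its input.
    """
    rotated = np.rot90(images, k=k, axes=(-2, -1))
    preds = predict_fn(rotated)
    return np.rot90(preds, k=-k, axes=(-2, -1))  # exact inverse rotation

# Non-square images work too, since the inverse restores the original shape.
images = np.arange(2 * 4 * 6, dtype=np.float64).reshape(2, 4, 6)
restored = rot90_tta(lambda batch: batch + 1.0, images, k=1)
```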

This is an older thread but for those looking to implement TTA with a segmentation model I have just published a blog post going over the topic. Hopefully someone will find it useful.

Improving segmentation model accuracy with Test Time Augmentation

Very nicely done!