Hi everyone!

I’d like to start a discussion here (and maybe find some collaborators!) on the topic of video enhancement / super-resolution, and in particular how transfer learning can be applied there. After searching a bit for papers and code, it feels like not a lot of people have been exploring this direction.

In order to get started on the topic, I made an experiment which I think shows interesting first results.

The idea is that since a video is a small dataset, if we start with a good image enhancement model (in my case fastai lesson 7 model as a basis), and fine-tune it on the images of the video, the model can hopefully learn specific details of the scene when the camera gets closer and then use reintegrate them back when the camera is further away.

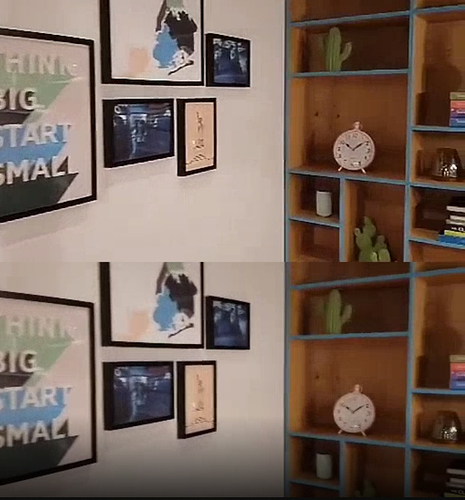

Here is a screenshot of the first results I got (more screenshots and a video can be found on my github repository):

I’m a bit hesitant regarding what to try next, so I’d be glad to have some opinions. In particular:

- Do you think using an RNN would give significantly better results?

- How impactful would it be to use a ResNet50 instead of 34, and use a pre-trained superres model from ImageNet instead of the pets dataset?

- Can overfitting be actually beneficial in this specific case, since the test set IS the training set?

Thanks!

Sebastien