I’ve recently started experimenting with fast.ai, and I was shocked to find that in every experiment I ran, it significantly outperformed my TensorFlow 2.0 models, despite using the same model architectures, optimizers and loss functions.

Because TensorFlow doesn’t provide features such as the 1-cycle policy, weight decay or fast.ai’s data transformations out of the box, I disabled all of them in fast.ai to get a fairer comparison. Still, fast.ai performs better no matter what I do.

Here are the results from an experiment I ran today:

Configuration:

- Dataset: CIFAR-10

- Model: ResNet50 (pretrained on ImageNet)

- Optimizer: Adam (learning rate 0.003)

- Batch size: 64

- No weight decay

- No data transformations

- No 1-cycle policy

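Here is my fast.ai code:

```python
from fastai.vision import *  # fastai v1 API: ImageDataBunch, cnn_learner, models, accuracy

# data_path: folder containing "train" and "test" subfolders
# ds_tfms=None: no data augmentation; size=64 is the target image size
data = ImageDataBunch.from_folder(data_path, train="train", valid="test",
                                  ds_tfms=None, size=64, num_workers=0).normalize(imagenet_stats)

# true_wd=False: don't use decoupled (AdamW-style) weight decay
learn = cnn_learner(data, models.resnet50, metrics=[accuracy], true_wd=False)

learn.fit(3)
```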

And here is my TensorFlow model:

```python
import tensorflow as tf
from tensorflow import keras

nr_classes = 10  # CIFAR-10

base_model = keras.applications.resnet_v2.ResNet50V2(weights="imagenet", include_top=False)

# Freeze the pretrained backbone so only the new head is trained
for layer in base_model.layers:
    layer.trainable = False

# Concatenated average + max pooling, as in fastai's head
avg = keras.layers.GlobalAveragePooling2D()(base_model.output)
mx = keras.layers.GlobalMaxPooling2D()(base_model.output)
out = keras.layers.Concatenate()([avg, mx])

out = keras.layers.BatchNormalization()(out)
out = keras.layers.Dropout(0.25)(out)
out = keras.layers.Dense(512, activation="relu")(out)
out = keras.layers.BatchNormalization()(out)
out = keras.layers.Dropout(0.25)(out)
out = keras.layers.Dense(nr_classes, activation="softmax")(out)

model = keras.models.Model(inputs=base_model.input, outputs=out)

# `lr` is deprecated in tf.keras optimizers; `learning_rate` is the supported name
optimizer = keras.optimizers.Adam(learning_rate=0.003)
model.compile(loss="sparse_categorical_crossentropy", optimizer=optimizer, metrics=["accuracy"])
```
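The model is built without a fixed input size, and the post resizes the 32x32 CIFAR-10 images to 224x224 before training. The input pipeline isn’t shown, so here is a minimal `tf.data` sketch of that step. It assumes pixels are also scaled with `keras.applications.resnet_v2.preprocess_input` (which maps [0, 255] to [-1, 1]); whether the notebook does this is not stated in the post. `IMG_SIZE`, `preprocess` and `make_dataset` are hypothetical names.

```python
import tensorflow as tf
from tensorflow import keras

IMG_SIZE = 224   # target size used in the post (ImageNet pretraining resolution)
BATCH_SIZE = 64  # batch size from the configuration above


def preprocess(image, label):
    # Upsample the 32x32 image to IMG_SIZE x IMG_SIZE (bilinear by default)
    image = tf.image.resize(tf.cast(image, tf.float32), (IMG_SIZE, IMG_SIZE))
    # Assumption: scale pixels the way ResNet50V2 expects, from [0, 255] to [-1, 1]
    image = keras.applications.resnet_v2.preprocess_input(image)
    return image, label


def make_dataset(images, labels, training):
    ds = tf.data.Dataset.from_tensor_slices((images, labels))
    if training:
        ds = ds.shuffle(10_000)  # shuffle only the training split
    ds = ds.map(preprocess, num_parallel_calls=tf.data.AUTOTUNE)
    return ds.batch(BATCH_SIZE).prefetch(tf.data.AUTOTUNE)


# Usage (downloads CIFAR-10 on first call):
# (x_train, y_train), (x_test, y_test) = keras.datasets.cifar10.load_data()
# train_ds = make_dataset(x_train, y_train, training=True)
# test_ds = make_dataset(x_test, y_test, training=False)
# model.fit(train_ds, validation_data=test_ds, epochs=3)
```

Doing the resize inside `map` keeps the 32x32 arrays in memory and only materializes 224x224 tensors one batch at a time.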

I designed the output layers added after the frozen CNN layers to be identical to the ones fast.ai uses (I wasn’t sure about fast.ai’s dropout rate, so I used 0.25).
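To compare the two heads layer by layer, here is a minimal PyTorch sketch with the same sequence as the Keras head above: concatenated max/average pooling, then BN, dropout, a 512-unit ReLU layer, BN, dropout and the output layer. `ConcatPool` and `make_head` are hypothetical names written for this illustration, not fastai’s source. The dropout probability `p` is a parameter because its value is exactly what is uncertain here.

```python
import torch
from torch import nn


class ConcatPool(nn.Module):
    """Concatenate global max and average pooling (what fastai's AdaptiveConcatPool2d does)."""

    def __init__(self):
        super().__init__()
        self.max_pool = nn.AdaptiveMaxPool2d(1)
        self.avg_pool = nn.AdaptiveAvgPool2d(1)

    def forward(self, x):
        # (N, C, H, W) -> (N, 2C, 1, 1)
        return torch.cat([self.max_pool(x), self.avg_pool(x)], dim=1)


def make_head(n_features: int, n_classes: int, p: float = 0.25, hidden: int = 512) -> nn.Sequential:
    # Same order as the Keras head: pool -> BN -> Dropout -> Dense(ReLU) -> BN -> Dropout -> Dense
    return nn.Sequential(
        ConcatPool(),
        nn.Flatten(),
        nn.BatchNorm1d(2 * n_features),
        nn.Dropout(p),
        nn.Linear(2 * n_features, hidden),
        nn.ReLU(inplace=True),
        nn.BatchNorm1d(hidden),
        nn.Dropout(p),
        nn.Linear(hidden, n_classes),  # logits; nn.CrossEntropyLoss applies softmax itself
    )


head = make_head(2048, 10, p=0.0)  # 2048 = channels of ResNet50's last feature map
print(head)
```

Printing the head lists each `Dropout` with its probability, so it can be checked directly against what `learn.summary()` reports for the fastai model.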

Results (training only the output layers, without calling `unfreeze()`):

- fast.ai: 85% after one epoch, 89% after 3 epochs

- TensorFlow: 81% after one epoch, 80% after 3 epochs

Questions:

- Why are the results in TensorFlow so much worse? Is it just that fast.ai’s pretrained ResNet50 weights are better, or am I missing some of the magic that fast.ai uses?

- In TensorFlow, I resized the images from 32x32 to 224x224, the image size used to pretrain ResNet50 on ImageNet. When I trained on the 32x32 images directly, I got really bad results (~10% accuracy, which is chance level for 10 classes). In fast.ai, I don’t perform any resizing, and it just works. Does fast.ai resize the images by default (I don’t think it does…)? Was the fast.ai ResNet model trained at different image resolutions, or is some other trick being used?
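To put numbers on the input-size question, this sketch computes the spatial size of the final feature map that the pooling head receives. It assumes the standard ResNet-50 layout: a 7x7 stride-2 stem convolution, a 3x3 stride-2 max-pool, and three later stages that each downsample once with a 3x3 stride-2 convolution (overall stride 32). It is back-of-the-envelope arithmetic only; `conv_out` and `resnet_final_map` are hypothetical helpers, and the result doesn’t by itself explain the accuracy difference. For reference, the fastai call above passes `size=64`.

```python
def conv_out(size: int, kernel: int, stride: int, pad: int) -> int:
    """Output length along one spatial axis of a conv or pooling layer."""
    return (size + 2 * pad - kernel) // stride + 1


def resnet_final_map(size: int) -> int:
    # Stem: 7x7 conv, stride 2, padding 3; then 3x3 max-pool, stride 2, padding 1
    size = conv_out(size, kernel=7, stride=2, pad=3)
    size = conv_out(size, kernel=3, stride=2, pad=1)
    # Three later stages, each downsampling once with a 3x3, stride-2 conv (padding 1)
    for _ in range(3):
        size = conv_out(size, kernel=3, stride=2, pad=1)
    return size


for side in (32, 64, 224):
    s = resnet_final_map(side)
    print(f"{side}x{side} input -> {s}x{s} feature map")
# prints:
# 32x32 input -> 1x1 feature map
# 64x64 input -> 2x2 feature map
# 224x224 input -> 7x7 feature map
```

At 32x32, each image reaches the head as a single spatial position per channel, while at 224x224 the head pools over a 7x7 grid.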

Here are the notebooks I used for this experiment:

https://colab.research.google.com/drive/1n8RJf2CmHISRxI5XuWfXGrrTyjNtOi7I

https://colab.research.google.com/drive/11jt_ZBpVN__zYdoNSbw83NU3shpGjS5x

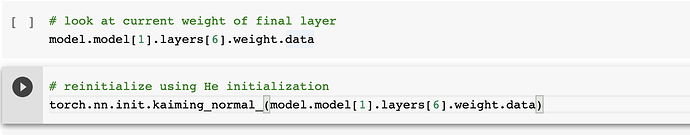

By default, fastai creates a head with a dropout of 0, not the 0.25 you specify for your `Dropout` layers in Keras (`learn.summary()` showed zero as well).
