Hi all,

I am currently looking at language modelling using transformers and have run into several problems.

One of them is that when I use the default Transformer (pretrained=False) with a LanguageLearner, the accuracy does not change regardless of the hyperparameters I train with (I varied batch size, learning rate, and momentum).

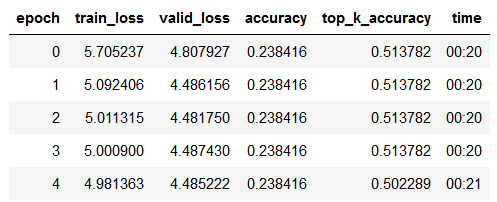

For experimentation I have started training on 200 articles from the German Wikipedia. The loss starts at around 5.7 and goes down to 4.9 over four epochs. The accuracy, however, stays constant at around 0.23.
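To convince myself that a falling loss with flat accuracy is at least possible in principle, here is a minimal sketch in plain Python. It is not my real model or data: the four-token vocabulary, the probabilities, and the helper `evaluate` are all made up for illustration. Both toy "models" always rank token 0 first, so accuracy is identical, but the second one puts more probability on the correct token, so the cross-entropy is lower.

```python
import math

def evaluate(predictions, targets):
    """Return (mean cross-entropy, accuracy) for per-position probability lists."""
    losses = []
    hits = []
    for probs, target in zip(predictions, targets):
        # Cross-entropy for one position: negative log-probability of the true token.
        losses.append(-math.log(probs[target]))
        # Accuracy only looks at which token has the highest probability.
        predicted = max(range(len(probs)), key=probs.__getitem__)
        hits.append(predicted == target)
    return sum(losses) / len(losses), sum(hits) / len(hits)

# Hypothetical toy setup: 4 tokens, token 0 stands in for a very frequent token.
targets = [0, 1, 2, 3]

# "Early" model: almost uniform, slightly favouring token 0 everywhere.
early = [[0.28, 0.24, 0.24, 0.24] for _ in targets]

# "Later" model: still ranks token 0 first everywhere, but the true token
# now gets a larger share of the probability mass.
later = [
    [0.70, 0.10, 0.10, 0.10],
    [0.40, 0.35, 0.15, 0.10],
    [0.40, 0.10, 0.35, 0.15],
    [0.40, 0.15, 0.10, 0.35],
]

early_loss, early_acc = evaluate(early, targets)
later_loss, later_acc = evaluate(later, targets)

print(f"early: loss={early_loss:.3f} acc={early_acc:.2f}")
print(f"later: loss={later_loss:.3f} acc={later_acc:.2f}")
```

If something like this is happening in my run, the model may simply be predicting the same few frequent tokens throughout, but that is only a guess on my part.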

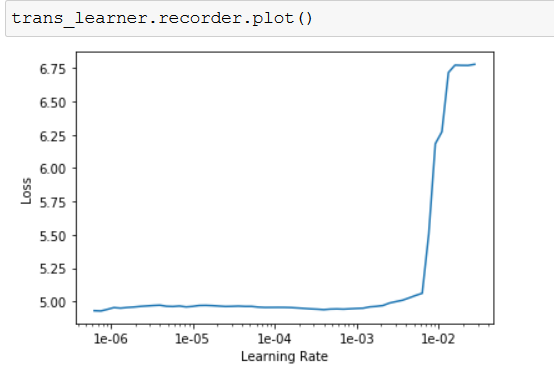

More confusingly, after training for several epochs, the learning-rate finder shows an ascending curve.

This is my data initialization:

sample_trans_data_bunch = (TextList.from_folder(samples_path)
                           .split_by_rand_pct(0.1, 42)
                           .label_for_lm()
                           .databunch(bs=bs, num_workers=2))

This is my model initialization:

metrics = [accuracy, top_k_accuracy]
trans_learner = language_model_learner(sample_trans_data_bunch, Transformer, pretrained=False, metrics=metrics).to_fp16()

These are my training stats:

This is the result of lr-find after doing the above training:

What could I be doing wrong? Have I just not found the right hyperparameters? Also: should I choose a specific tokenizer for transformers?

I’m new to fast.ai and I would really appreciate some advice!

Thanks a lot in advance,

David