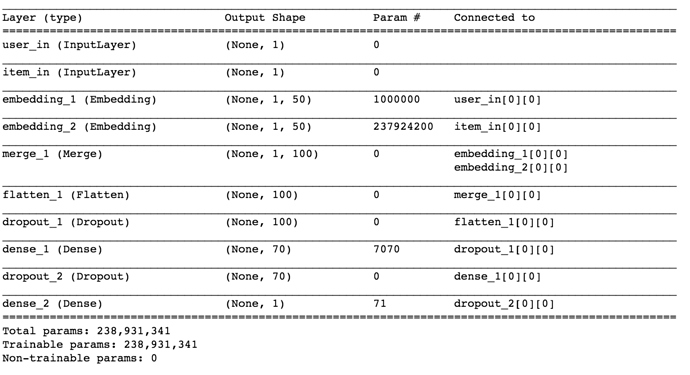

I'm using a network like the one shown above to train my own recommender system.

My hardware is a GTX 1070.

The estimated training time is over 30,000 seconds. Why is it so long? Is that reasonable?

It seems I have found the problem: I changed the batch size from 32 to 256, and the training time became much shorter.
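One rough way to see why the larger batch helps: an epoch takes `ceil(N / batch_size)` optimizer steps, so going from 32 to 256 cuts the step count by about 8×. When each small step is dominated by fixed per-step overhead (Python loop, kernel launches, host-to-GPU transfers) rather than actual compute, which is common with tiny batches on a GPU, wall-clock time drops roughly in proportion. Here is a minimal sketch, assuming a hypothetical dataset size `N = 1_000_000` because the thread doesn't say how many examples there are:

```python
import math


def steps_per_epoch(num_examples: int, batch_size: int) -> int:
    """Number of optimizer steps needed to see every example once."""
    return math.ceil(num_examples / batch_size)


# Hypothetical dataset size; the original post does not state it.
N = 1_000_000

small = steps_per_epoch(N, 32)   # 31250 steps per epoch
large = steps_per_epoch(N, 256)  # 3907 steps per epoch
print(small, large, round(small / large, 2))  # 31250 3907 8.0
```

A larger batch also changes the optimization dynamics, so the learning rate may need retuning to get similar accuracy.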

How short? You can’t just leave us hanging like that!

It depends. When training takes too long, you can try changing the batch size. I train two embeddings, 20000×100 and 4700000×100, with a batch size of 5012, and it takes me about half an hour.
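To get a sense of the model size involved, the sketch below counts the weights in those two embedding tables and their float32 footprint. It assumes each pair means (rows, embedding dimension) and that weights are stored as 4-byte float32. Neither assumption is stated in the thread, and the figure covers weights only, not gradients or optimizer state.

```python
def embedding_params(num_rows: int, dim: int) -> int:
    """Number of weights in a num_rows x dim embedding table."""
    return num_rows * dim


# Assumption: "20000×100" and "4700000×100" mean (rows, embedding_dim).
tables = [(20_000, 100), (4_700_000, 100)]

total = sum(embedding_params(rows, dim) for rows, dim in tables)
bytes_fp32 = total * 4  # assumption: float32, 4 bytes per weight

print(total)                         # 472000000
print(round(bytes_fp32 / 2**30, 2))  # 1.76 (GiB, weights only)
```

Optimizers such as Adam keep extra per-weight state, so actual GPU memory use during training will be several times this figure.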