I’ve got a rather timely project request if anyone happens to have time in the next 24 hours or so - might be suitable for someone like @sgugger who is already familiar with fastai.sgdr.

The project is to refactor and extend the sgdr/clr stuff into a new class called “OptimScheduler” so that you can, in the most general version, pass a list for each param in the constructor, where each list has one entry per “epoch group”. The params are basically everything that you could want to change in an optimizer. This is easiest to explain with examples I think!

For instance say you want:

- 10 epochs of rmsprop, lr linearly increasing from lr=0.1 to lr=1.0, and constant weight decay of 1e-3, and linearly decreasing momentum from 0.95 to 0.85, then:

- 20 epochs of adam, lr decreasing from 0.5 to 0.1 with cosine annealing, and constant weight decay of 1e-4, and betas equal to (0.9,0.99), you’d pass:

[epochs=(10,20), opt_fn=(optim.RMSprop,optim.Adam),

lr=((0.1,1.0), (0.5,0.1)), lr_decay=(decay.linear,decay.cosine),

momentum=((0.95,0.85), 0.9), momentum_decay=(decay.linear, None),

beta2=(None,0.99)]

Note that I’ve mapped beta1 for adam to momentum since that’s really what it is, and it simplifies the API. Then beta2 of course maps to the 2nd param of adam’s betas. Stuff that’s not relevant is just None (e.g. the decay function, if no decay is wanted in that epoch group).
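To make the idea concrete, here's a rough sketch of how per-group `(start, end)` pairs plus a decay function could be expanded into one value per iteration. Everything here is an assumption for illustration: the `linear`/`cosine` helpers, the `expand` function, and the `iters_per_epoch` argument are made-up names, not existing fastai code.

```python
import math

# Hypothetical decay functions: map progress p in [0, 1] to a value
# that moves from `start` (p=0) to `end` (p=1).
def linear(start, end, p):
    return start + (end - start) * p

def cosine(start, end, p):
    return end + (start - end) * (1 + math.cos(math.pi * p)) / 2

def expand(epochs, values, decays, iters_per_epoch):
    """Return one value per iteration across all epoch groups."""
    out = []
    for n_epochs, val, decay in zip(epochs, values, decays):
        n_iter = n_epochs * iters_per_epoch
        if val is None:
            # Param is not relevant for this group's optimizer.
            out.extend([None] * n_iter)
        elif decay is None:
            # No decay: `val` is a single constant.
            out.extend([val] * n_iter)
        else:
            start, end = val
            denom = max(n_iter - 1, 1)  # avoid dividing by zero for 1 iteration
            out.extend(decay(start, end, i / denom) for i in range(n_iter))
    return out

# The lr and momentum lists from the example above, at 100 iterations per epoch.
lr = expand((10, 20), ((0.1, 1.0), (0.5, 0.1)), (linear, cosine), iters_per_epoch=100)
mom = expand((10, 20), ((0.95, 0.85), 0.9), (linear, None), iters_per_epoch=100)
```

The scheduler would then just index into these lists on each batch and write the value into the optimizer's param groups, swapping the optimizer itself whenever the epoch group changes.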

So then with this you should be able to implement SGDR, CLR, and 1cycle all by simply specifying appropriate parameter lists.
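As a sketch of what “appropriate parameter lists” might look like, here are two hypothetical helpers that build the lists for a simplified 1cycle (two linear phases, without the final annihilation phase) and for SGDR (cosine annealing with restarts and growing cycle lengths). The function names, their arguments, and the returned dict layout are all assumptions, not an existing API; CLR would be the same idea with repeated linear up/down pairs.

```python
import math

# Hypothetical decay functions, same convention as elsewhere in this thread:
# p in [0, 1] moves the value from `start` to `end`.
def linear(start, end, p):
    return start + (end - start) * p

def cosine(start, end, p):
    return end + (start - end) * (1 + math.cos(math.pi * p)) / 2

def one_cycle(epochs, lr_max, div=10, moms=(0.95, 0.85)):
    """Simplified 1cycle: lr ramps up then down, momentum does the opposite."""
    half = epochs // 2
    return dict(
        epochs=(half, epochs - half),
        lr=((lr_max / div, lr_max), (lr_max, lr_max / div)),
        lr_decay=(linear, linear),
        momentum=((moms[0], moms[1]), (moms[1], moms[0])),
        momentum_decay=(linear, linear),
    )

def sgdr(n_cycles, lr_max, cycle_len=1, cycle_mult=2):
    """SGDR: each cycle is one epoch group with cosine annealing from lr_max to 0."""
    epochs = tuple(cycle_len * cycle_mult ** i for i in range(n_cycles))
    return dict(
        epochs=epochs,
        lr=((lr_max, 0.0),) * n_cycles,
        lr_decay=(cosine,) * n_cycles,
    )

one_cycle_params = one_cycle(epochs=10, lr_max=1.0)
sgdr_params = sgdr(n_cycles=3, lr_max=0.1)
```

The returned dicts could be splatted straight into the constructor, e.g. `OptimScheduler(**sgdr_params)`, if the final API ends up taking keyword lists like the example above.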

The reason I’m keen to have this ASAP is for imagenet training, where the best approaches tend to suggest using rmsprop with linear annealing for a bit, then nesterov momentum sgd with a stepped decay schedule for the rest. It’s awkward to do this with our current approach, and it would be nice to be able to experiment easily with extensions of this.

An extra step which would be really helpful: for each epoch group, also be able to specify a different ModelData object. That way we can call set_data at the start of each group to gradually increase the size of images being used. Now of course set_data is part of Learner, and we don’t want model.fit to have to know about fastai.learner, so probably the best way to do this is to add an on_epochgroup_start callback to OptimScheduler where we can then do whatever stuff we like.
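Here's a minimal sketch of how that callback could be wired up, so the scheduler only knows about group boundaries and the caller decides what to do at each one. The class body, the `on_epoch_begin` method name, and the `resize` function are placeholders I've made up for illustration; in real use the callback might call something like `learn.set_data(...)` with a ModelData for the new image size.

```python
class OptimScheduler:
    """Hypothetical skeleton: fires a hook whenever a new epoch group starts."""

    def __init__(self, epochs, on_epochgroup_start=None):
        self.epochs = epochs
        self.on_epochgroup_start = on_epochgroup_start
        # Index of the first epoch in each group, e.g. (10, 20) -> [0, 10].
        self.starts = []
        total = 0
        for n in epochs:
            self.starts.append(total)
            total += n
        self.n_epochs = total

    def on_epoch_begin(self, epoch):
        # Call the user hook with the group index if this epoch opens a group.
        if epoch in self.starts and self.on_epochgroup_start is not None:
            self.on_epochgroup_start(self.starts.index(epoch))

sizes = (128, 224)  # assumed image size per epoch group
log = []

def resize(group):
    # Placeholder for swapping in a different ModelData object;
    # here we only record which size would be used.
    log.append((group, sizes[group]))

sched = OptimScheduler(epochs=(10, 20), on_epochgroup_start=resize)
for epoch in range(sched.n_epochs):
    sched.on_epoch_begin(epoch)
```

Keeping the hook as a plain callable means the training loop never has to import anything from the learner side.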

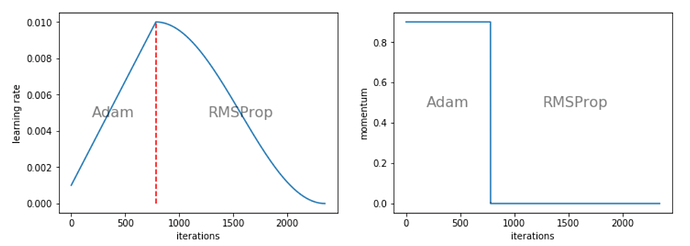

A suggestion: create a plot_all method that plots everything that’s changing (and for stuff like opt_fn it could just print a list or something, or even put them as overlays on the chart). Would be helpful for debugging.

Anyway, if anyone thinks that sounds interesting and wants to give it a go, let us know in this thread.