I get the following error when I try to use the neural-network tabular learner (tabular_learner):

RuntimeError: Found dtype Char but expected Float

It happens with both lr_find and fit_one_cycle. A minimal reproduction of the error is below:

import pandas as pd
from fastai.tabular.all import *

data = {
    'user_id': {0: 32, 1: 100, 2: 122, 3: 156, 4: 152, 5: 166, 6: 155, 7: 308, 8: 330, 9: 523},
    'other_user_id': {0: 60, 1: 60, 2: 60, 3: 60, 4: 60, 5: 60, 6: 60, 7: 60, 8: 60, 9: 60},
    'type': {0: 0, 1: 1, 2: 0, 3: 1, 4: 0, 5: 1, 6: 0, 7: 0, 8: 0, 9: 0}
}
df = pd.DataFrame(data)

dep_var = "type"
cont,cat = cont_cat_split(df, max_card=7000, dep_var=dep_var)
splits = [[0, 1, 3, 4, 5, 6, 7], [2, 8, 9]]

procs = [Categorify, FillMissing, Normalize]
to = TabularPandas(df, procs, cat, cont, splits=splits, y_names=dep_var)

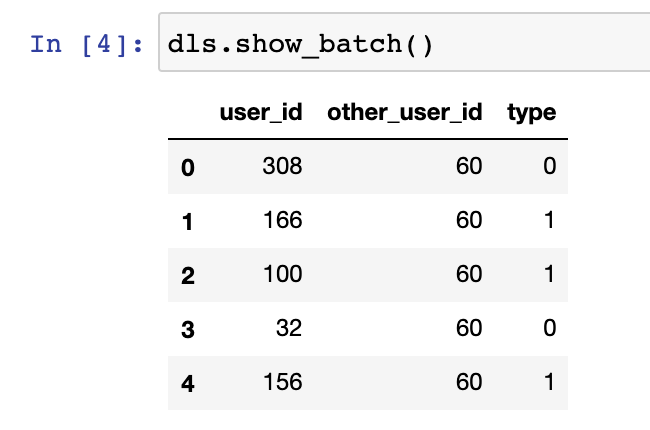

dls = to.dataloaders(5)

learn = tabular_learner(dls, y_range=(-0.2,1.2), layers=[500,250],
                        n_out=1, loss_func=F.mse_loss)

learn.lr_find() # or `learn.fit_one_cycle(5, 1e-2)`

And the error it throws:

---------------------------------------------------------------------------
RuntimeError                              Traceback (most recent call last)
<ipython-input-3-1d9d22177d5b> in <module>
     21                         n_out=1, loss_func=F.mse_loss)
     22
---> 23 learn.lr_find() # or `learn.fit_one_cycle(5, 1e-2)`

/usr/local/lib/python3.8/site-packages/fastai/callback/schedule.py in lr_find(self, start_lr, end_lr, num_it, stop_div, show_plot, suggestions)
    226     n_epoch = num_it//len(self.dls.train) + 1
    227     cb=LRFinder(start_lr=start_lr, end_lr=end_lr, num_it=num_it, stop_div=stop_div)
--> 228     with self.no_logging(): self.fit(n_epoch, cbs=cb)
    229     if show_plot: self.recorder.plot_lr_find()
    230     if suggestions:

/usr/local/lib/python3.8/site-packages/fastcore/utils.py in _f(*args, **kwargs)
    452         init_args.update(log)
    453         setattr(inst, 'init_args', init_args)
--> 454         return inst if to_return else f(*args, **kwargs)
    455     return _f
    456

/usr/local/lib/python3.8/site-packages/fastai/learner.py in fit(self, n_epoch, lr, wd, cbs, reset_opt)
    202             self.opt.set_hypers(lr=self.lr if lr is None else lr)
    203             self.n_epoch,self.loss = n_epoch,tensor(0.)
--> 204             self._with_events(self._do_fit, 'fit', CancelFitException, self._end_cleanup)
    205
    206     def _end_cleanup(self): self.dl,self.xb,self.yb,self.pred,self.loss = None,(None,),(None,),None,None

/usr/local/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)
    153
    154     def _with_events(self, f, event_type, ex, final=noop):
--> 155         try: self(f'before_{event_type}') ;f()
    156         except ex: self(f'after_cancel_{event_type}')
    157         finally: self(f'after_{event_type}') ;final()

/usr/local/lib/python3.8/site-packages/fastai/learner.py in _do_fit(self)
    192             for epoch in range(self.n_epoch):
    193                 self.epoch=epoch
--> 194                 self._with_events(self._do_epoch, 'epoch', CancelEpochException)
    195
    196     @log_args(but='cbs')

/usr/local/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)
    153
    154     def _with_events(self, f, event_type, ex, final=noop):
--> 155         try: self(f'before_{event_type}') ;f()
    156         except ex: self(f'after_cancel_{event_type}')
    157         finally: self(f'after_{event_type}') ;final()

/usr/local/lib/python3.8/site-packages/fastai/learner.py in _do_epoch(self)
    186
    187     def _do_epoch(self):
--> 188         self._do_epoch_train()
    189         self._do_epoch_validate()
    190

/usr/local/lib/python3.8/site-packages/fastai/learner.py in _do_epoch_train(self)
    178     def _do_epoch_train(self):
    179         self.dl = self.dls.train
--> 180         self._with_events(self.all_batches, 'train', CancelTrainException)
    181
    182     def _do_epoch_validate(self, ds_idx=1, dl=None):

/usr/local/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)
    153
    154     def _with_events(self, f, event_type, ex, final=noop):
--> 155         try: self(f'before_{event_type}') ;f()
    156         except ex: self(f'after_cancel_{event_type}')
    157         finally: self(f'after_{event_type}') ;final()

/usr/local/lib/python3.8/site-packages/fastai/learner.py in all_batches(self)
    159     def all_batches(self):
    160         self.n_iter = len(self.dl)
--> 161         for o in enumerate(self.dl): self.one_batch(*o)
    162
    163     def _do_one_batch(self):

/usr/local/lib/python3.8/site-packages/fastai/learner.py in one_batch(self, i, b)
    174         self.iter = i
    175         self._split(b)
--> 176         self._with_events(self._do_one_batch, 'batch', CancelBatchException)
    177
    178     def _do_epoch_train(self):

/usr/local/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)
    153
    154     def _with_events(self, f, event_type, ex, final=noop):
--> 155         try: self(f'before_{event_type}') ;f()
    156         except ex: self(f'after_cancel_{event_type}')
    157         finally: self(f'after_{event_type}') ;final()

/usr/local/lib/python3.8/site-packages/fastai/learner.py in _do_one_batch(self)
    167         if not self.training: return
    168         self('before_backward')
--> 169         self._backward(); self('after_backward')
    170         self._step(); self('after_step')
    171         self.opt.zero_grad()

/usr/local/lib/python3.8/site-packages/fastai/learner.py in _backward(self)
    150
    151     def _step(self): self.opt.step()
--> 152     def _backward(self): self.loss.backward()
    153
    154     def _with_events(self, f, event_type, ex, final=noop):

/usr/local/lib/python3.8/site-packages/torch/tensor.py in backward(self, gradient, retain_graph, create_graph)
    183                 products. Defaults to ``False``.
    184         """
--> 185         torch.autograd.backward(self, gradient, retain_graph, create_graph)
    186
    187     def register_hook(self, hook):

/usr/local/lib/python3.8/site-packages/torch/autograd/__init__.py in backward(tensors, grad_tensors, retain_graph, create_graph, grad_variables)
    123         retain_graph = create_graph
    124
--> 125     Variable._execution_engine.run_backward(
    126         tensors, grad_tensors, retain_graph, create_graph,
    127         allow_unreachable=True) # allow_unreachable flag

RuntimeError: Found dtype Char but expected Float

Exception raised from compute_types at ../aten/src/ATen/native/TensorIterator.cpp:183 (most recent call first):

frame #0: c10::Error::Error(c10::SourceLocation, std::__1::basic_string<char, std::__1::char_traits<char>, std::__1::allocator<char> >) + 169 (0x12ce2f7f9 in libc10.dylib)

frame #1: at::TensorIterator::compute_types(at::TensorIteratorConfig const&) + 3842 (0x11f772372 in libtorch_cpu.dylib)

frame #2: at::TensorIterator::build(at::TensorIteratorConfig&) + 618 (0x11f77b57a in libtorch_cpu.dylib)

frame #3: at::TensorIterator::TensorIterator(at::TensorIteratorConfig&) + 223 (0x11f77b25f in libtorch_cpu.dylib)

frame #4: at::native::mse_loss_backward_out(at::Tensor&, at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) + 410 (0x11f5c6fda in libtorch_cpu.dylib)

frame #5: at::CPUType::mse_loss_backward_out_grad_input(at::Tensor&, at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) + 9 (0x11f9e5049 in libtorch_cpu.dylib)

frame #6: at::mse_loss_backward_out(at::Tensor&, at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) + 157 (0x11fa984ad in libtorch_cpu.dylib)

frame #7: at::native::mse_loss_backward(at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) + 118 (0x11f5c6d56 in libtorch_cpu.dylib)

frame #8: at::CPUType::mse_loss_backward(at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) + 14 (0x11f9e505e in libtorch_cpu.dylib)

frame #9: c10::impl::wrap_kernel_functor_unboxed_<c10::impl::detail::WrapFunctionIntoRuntimeFunctor_<at::Tensor (*)(at::Tensor const&, at::Tensor const&, at::Tensor const&, long long), at::Tensor, c10::guts::typelist::typelist<at::Tensor const&, at::Tensor const&, at::Tensor const&, long long> >, at::Tensor (at::Tensor const&, at::Tensor const&, at::Tensor const&, long long)>::call(c10::OperatorKernel*, at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) + 27 (0x11f3968db in libtorch_cpu.dylib)

frame #10: at::Tensor c10::Dispatcher::call<at::Tensor, at::Tensor const&, at::Tensor const&, at::Tensor const&, long long>(c10::TypedOperatorHandle<at::Tensor (at::Tensor const&, at::Tensor const&, at::Tensor const&, long long)> const&, at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) const + 287 (0x11fac719f in libtorch_cpu.dylib)

frame #11: at::mse_loss_backward(at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) + 157 (0x11fa9857d in libtorch_cpu.dylib)

frame #12: torch::autograd::VariableType::mse_loss_backward(at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) + 900 (0x121a6bba4 in libtorch_cpu.dylib)

frame #13: c10::impl::wrap_kernel_functor_unboxed_<c10::impl::detail::WrapFunctionIntoRuntimeFunctor_<at::Tensor (*)(at::Tensor const&, at::Tensor const&, at::Tensor const&, long long), at::Tensor, c10::guts::typelist::typelist<at::Tensor const&, at::Tensor const&, at::Tensor const&, long long> >, at::Tensor (at::Tensor const&, at::Tensor const&, at::Tensor const&, long long)>::call(c10::OperatorKernel*, at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) + 27 (0x11f3968db in libtorch_cpu.dylib)

frame #14: at::Tensor c10::Dispatcher::call<at::Tensor, at::Tensor const&, at::Tensor const&, at::Tensor const&, long long>(c10::TypedOperatorHandle<at::Tensor (at::Tensor const&, at::Tensor const&, at::Tensor const&, long long)> const&, at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) const + 287 (0x11fac719f in libtorch_cpu.dylib)

frame #15: at::mse_loss_backward(at::Tensor const&, at::Tensor const&, at::Tensor const&, long long) + 157 (0x11fa9857d in libtorch_cpu.dylib)

frame #16: torch::autograd::generated::MseLossBackward::apply(std::__1::vector<at::Tensor, std::__1::allocator<at::Tensor> >&&) + 298 (0x1218f6f7a in libtorch_cpu.dylib)

frame #17: torch::autograd::Node::operator()(std::__1::vector<at::Tensor, std::__1::allocator<at::Tensor> >&&) + 742 (0x1220507e6 in libtorch_cpu.dylib)

frame #18: torch::autograd::Engine::evaluate_function(std::__1::shared_ptr<torch::autograd::GraphTask>&, torch::autograd::Node*, torch::autograd::InputBuffer&, std::__1::shared_ptr<torch::autograd::ReadyQueue> const&) + 1846 (0x1220458a6 in libtorch_cpu.dylib)

frame #19: torch::autograd::Engine::thread_main(std::__1::shared_ptr<torch::autograd::GraphTask> const&) + 764 (0x12204481c in libtorch_cpu.dylib)

frame #20: torch::autograd::Engine::execute_with_graph_task(std::__1::shared_ptr<torch::autograd::GraphTask> const&, std::__1::shared_ptr<torch::autograd::Node>) + 1023 (0x12204e87f in libtorch_cpu.dylib)

frame #21: torch::autograd::python::PythonEngine::execute_with_graph_task(std::__1::shared_ptr<torch::autograd::GraphTask> const&, std::__1::shared_ptr<torch::autograd::Node>) + 53 (0x11e4b7345 in libtorch_python.dylib)

frame #22: torch::autograd::Engine::execute(std::__1::vector<torch::autograd::Edge, std::__1::allocator<torch::autograd::Edge> > const&, std::__1::vector<at::Tensor, std::__1::allocator<at::Tensor> > const&, bool, bool, std::__1::vector<torch::autograd::Edge, std::__1::allocator<torch::autograd::Edge> > const&) + 662 (0x12204cee6 in libtorch_cpu.dylib)

frame #23: torch::autograd::python::PythonEngine::execute(std::__1::vector<torch::autograd::Edge, std::__1::allocator<torch::autograd::Edge> > const&, std::__1::vector<at::Tensor, std::__1::allocator<at::Tensor> > const&, bool, bool, std::__1::vector<torch::autograd::Edge, std::__1::allocator<torch::autograd::Edge> > const&) + 82 (0x11e4b7142 in libtorch_python.dylib)

frame #24: THPEngine_run_backward(THPEngine*, _object*, _object*) + 2174 (0x11e4b7cee in libtorch_python.dylib)

frame #25: cfunction_call_varargs + 171 (0x10c30e0f6 in Python)

frame #26: _PyObject_MakeTpCall + 274 (0x10c30dbe5 in Python)

frame #27: call_function + 804 (0x10c3ae572 in Python)

frame #28: _PyEval_EvalFrameDefault + 30479 (0x10c3ab11f in Python)

frame #29: _PyEval_EvalCodeWithName + 1958 (0x10c3aeed7 in Python)

frame #30: _PyFunction_Vectorcall + 228 (0x10c30e590 in Python)

frame #31: call_function + 346 (0x10c3ae3a8 in Python)

frame #32: _PyEval_EvalFrameDefault + 30081 (0x10c3aaf91 in Python)

frame #33: _PyEval_EvalCodeWithName + 1958 (0x10c3aeed7 in Python)

frame #34: _PyFunction_Vectorcall + 228 (0x10c30e590 in Python)

frame #35: call_function + 346 (0x10c3ae3a8 in Python)

frame #36: _PyEval_EvalFrameDefault + 30053 (0x10c3aaf75 in Python)

frame #37: function_code_fastcall + 106 (0x10c30e42a in Python)

frame #38: call_function + 346 (0x10c3ae3a8 in Python)

frame #39: _PyEval_EvalFrameDefault + 30053 (0x10c3aaf75 in Python)

frame #40: function_code_fastcall + 106 (0x10c30e42a in Python)

frame #41: method_vectorcall + 135 (0x10c3104db in Python)

frame #42: call_function + 346 (0x10c3ae3a8 in Python)

frame #43: _PyEval_EvalFrameDefault + 30273 (0x10c3ab051 in Python)

frame #44: _PyEval_EvalCodeWithName + 1958 (0x10c3aeed7 in Python)

frame #45: _PyFunction_Vectorcall + 228 (0x10c30e590 in Python)

frame #46: call_function + 346 (0x10c3ae3a8 in Python)

frame #47: _PyEval_EvalFrameDefault + 30053 (0x10c3aaf75 in Python)

frame #48: function_code_fastcall + 106 (0x10c30e42a in Python)

frame #49: method_vectorcall + 372 (0x10c3105c8 in Python)

frame #50: PyVectorcall_Call + 108 (0x10c30de71 in Python)

frame #51: _PyEval_EvalFrameDefault + 31000 (0x10c3ab328 in Python)

frame #52: function_code_fastcall + 106 (0x10c30e42a in Python)

frame #53: method_vectorcall + 135 (0x10c3104db in Python)

frame #54: call_function + 346 (0x10c3ae3a8 in Python)

frame #55: _PyEval_EvalFrameDefault + 30273 (0x10c3ab051 in Python)

frame #56: _PyEval_EvalCodeWithName + 1958 (0x10c3aeed7 in Python)

frame #57: _PyFunction_Vectorcall + 228 (0x10c30e590 in Python)

frame #58: call_function + 346 (0x10c3ae3a8 in Python)

frame #59: _PyEval_EvalFrameDefault + 30053 (0x10c3aaf75 in Python)

frame #60: function_code_fastcall + 106 (0x10c30e42a in Python)

frame #61: call_function + 346 (0x10c3ae3a8 in Python)

frame #62: _PyEval_EvalFrameDefault + 30053 (0x10c3aaf75 in Python)

frame #63: function_code_fastcall + 106 (0x10c30e42a in Python)
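
One possible reading of the trace, offered as a guess rather than a confirmed diagnosis: frames #4 to #16 all sit in `mse_loss_backward`, and `Char` is PyTorch's name for the 8-bit signed integer dtype (`torch.int8`). That would fit the integer `type` column reaching the loss as an int8 target while the model output is float. The sketch below uses only pandas to show the dtypes involved and a possible workaround, casting the dependent variable to float32 before building `TabularPandas`. The `pd.to_numeric(..., downcast="integer")` call is only a stand-in for whatever step shrinks the column; that fastai does this internally is an assumption here.

```python
import pandas as pd

# Same dependent variable values as in the reproduction above.
df = pd.DataFrame({"type": [0, 1, 0, 1, 0, 1, 0, 0, 0, 0]})

# Stand-in (assumption) for a memory-saving step: downcast to the smallest
# signed integer type that fits the values, which is int8 for 0/1 data.
shrunk = pd.to_numeric(df["type"], downcast="integer")
print(shrunk.dtype)

# Possible workaround: make the target float32 up front, so a regression
# loss such as MSE would receive a float target instead of an integer one.
df["type"] = df["type"].astype("float32")
print(df["type"].dtype)
```

If the guess is right, applying the same cast to `df` in the reproduction before the `TabularPandas` call should hand the loss a float target. fastai also provides a `RegressionBlock` that can be passed as `y_block=RegressionBlock()` to `TabularPandas`; whether that resolves this particular error is not verified here.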