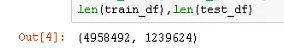

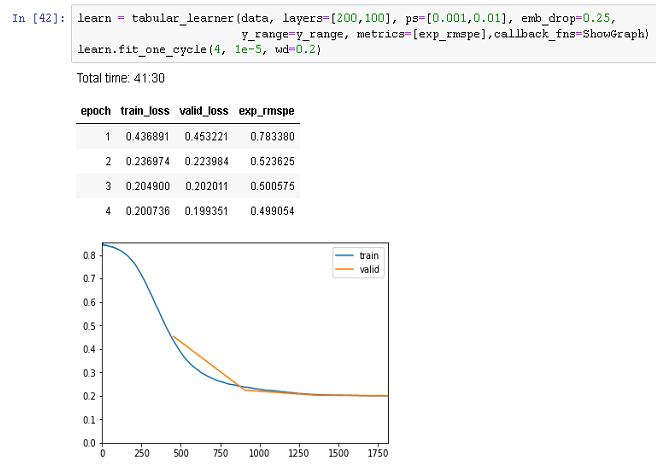

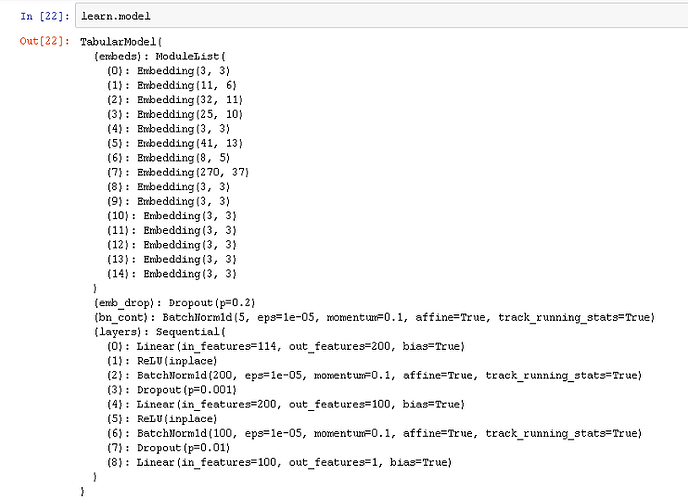

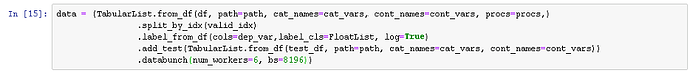

After many experiments varying embedding dropout against the learning rate, I have arrived at the pattern shown above. At one point during training, the validation loss breaks away from the training loss at a sharp angle. What I would like to know is whether there is a way (and which parameters to adjust) to bend the validation curve closer to the training curve. (The dropout in the model is actually 0.25.)
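For readers unfamiliar with the term, here is a minimal sketch of what embedding dropout usually means. It assumes the AWD-LSTM-style variant, in which whole rows of the embedding matrix (entire word vectors) are dropped rather than individual activations. The function names `embedding_dropout` and `lookup` are hypothetical and illustrative; this is not the API of any particular library, and it is not necessarily the exact implementation used in the experiments above.

```python
import random

def embedding_dropout(weights, p, rng=None):
    """Hypothetical helper: return a copy of an embedding matrix (a list of
    rows, one row per word) in which each row is zeroed with probability p.
    Surviving rows are scaled by 1 / (1 - p) so the expected value of every
    weight is unchanged."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    rng = rng or random.Random()
    scale = 1.0 / (1.0 - p)
    out = []
    for row in weights:
        if rng.random() < p:
            out.append([0.0] * len(row))  # the whole word vector is dropped
        else:
            out.append([w * scale for w in row])
    return out

def lookup(weights, token_ids):
    """Hypothetical helper: gather the embedding row for each token id."""
    return [weights[i] for i in token_ids]

# Usage: a toy 4-word vocabulary with 3-dimensional embeddings.
W = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0], [1.0, 1.0, 1.0]]
W_train = embedding_dropout(W, 0.25, random.Random(0))  # training: rows dropped
vectors = lookup(W_train, [0, 2, 0])  # both occurrences of word 0 share one mask
W_eval = embedding_dropout(W, 0.0)    # evaluation: dropout disabled
```

Because the mask is applied to the matrix before the lookup, every occurrence of a dropped word in a batch disappears together. This differs from ordinary dropout on the embedding outputs, which would mask each position independently. At evaluation time dropout is switched off, which is one reason training and validation losses can behave differently.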