Hi! Recently researchers from Google published a paper about new activation function they call Swish.

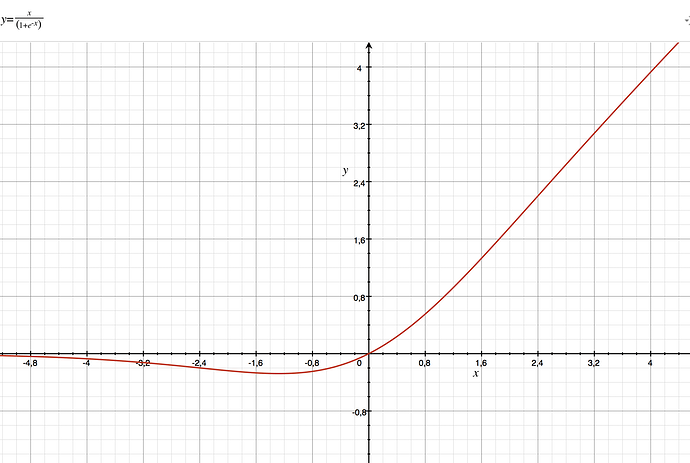

The formula is simply sigmoid(x) * x. The paper shows that it matches or outperforms ReLU activation function in nearly every experiment. Paper.

Question is - how it will affect performance? ‘relu’ is much simpler in implementation, and although ‘swish’ is also simple, but it still require exponent function

It also looks like inverted low pass filter with resonance. http://media.soundonsound.com/sos/oct99/images/synth13_14.gif

Ha! Yeah, looks like 6db slope, too

I only skimmed the paper but it seems they never tested it without batch norm, correct?

Here’s the quote from the paper

The success of Swish implies that the gradient preserving

property of ReLU (i.e., having a derivative of 1 when x > 0) may no longer be a distinct advantage

in modern architectures. In fact, we show in the experimental section that we can train deeper Swish

networks than ReLU networks when using BatchNorm (Ioffe & Szegedy, 2015).