Hello all,

This is my first post. Thanks for this amazing course and community!

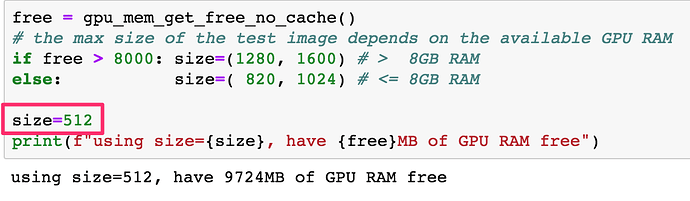

I’m trying to set up the “SuperRes” example from the course with my own image crappifier.

The idea is to train a model that can turn a pencil sketch into a realistic photo.

So something like this would become an actual cat image:

I found a small code snippet that does this kind of image -> pencil effect and ran it on the images.
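For anyone unfamiliar with the effect: a common way to get a pencil look is a "color dodge" blend of the grayscale image with a blurred copy of its inverse. The block below is only a minimal, dependency-free sketch of that general technique, not necessarily the snippet used here. The function names `box_blur` and `pencil_sketch` are hypothetical, and the image is assumed to be a plain 2D list of grayscale values from 0 to 255 (in practice an image library would do the blur).

```python
def box_blur(img, radius=1):
    """Blur a 2D grayscale image (list of rows, values 0-255) with a box filter."""
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Average over the neighbourhood, clamped at the image borders.
            ys = range(max(0, y - radius), min(h, y + radius + 1))
            xs = range(max(0, x - radius), min(w, x + radius + 1))
            vals = [img[j][i] for j in ys for i in xs]
            out[y][x] = sum(vals) // len(vals)
    return out


def pencil_sketch(gray, radius=1):
    """Color-dodge a grayscale image with the blurred copy of its inverse."""
    inverted = [[255 - v for v in row] for row in gray]
    blurred = box_blur(inverted, radius)
    sketch = []
    for g_row, b_row in zip(gray, blurred):
        row = []
        for g, b in zip(g_row, b_row):
            # Color dodge: base * 255 / (255 - blend), capped at 255.
            denom = 255 - b
            row.append(255 if denom <= 0 else min(255, g * 255 // denom))
        sketch.append(row)
    return sketch
```

With this blend, flat regions come out white and only pixels next to an intensity change stay dark, which is what gives the line-drawing look.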

The training seems to have worked really well, and the images generated during training are surprisingly accurate:

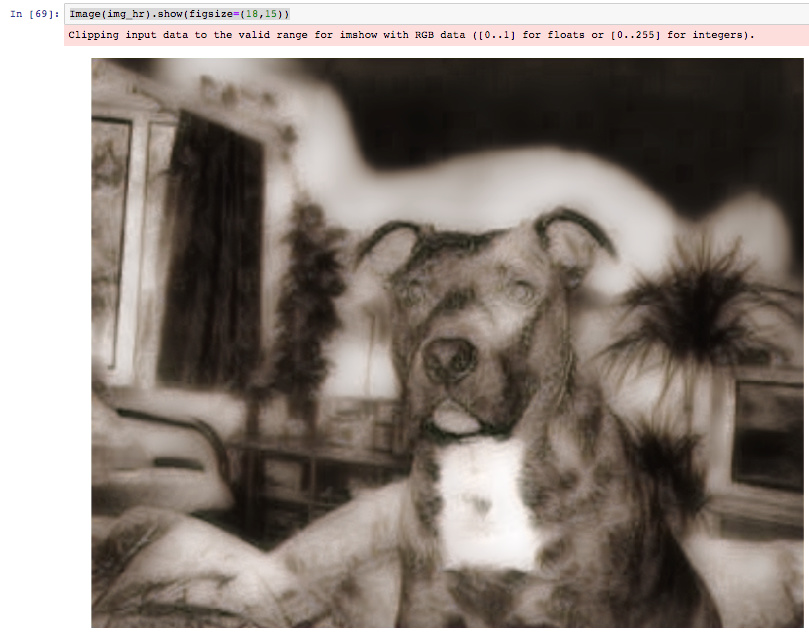

However, when I test the results with the same code from the example notebook, the output comes out distorted.

My assumption is that the model's settings are not being propagated, but I couldn’t find a solution.

Would appreciate your help.

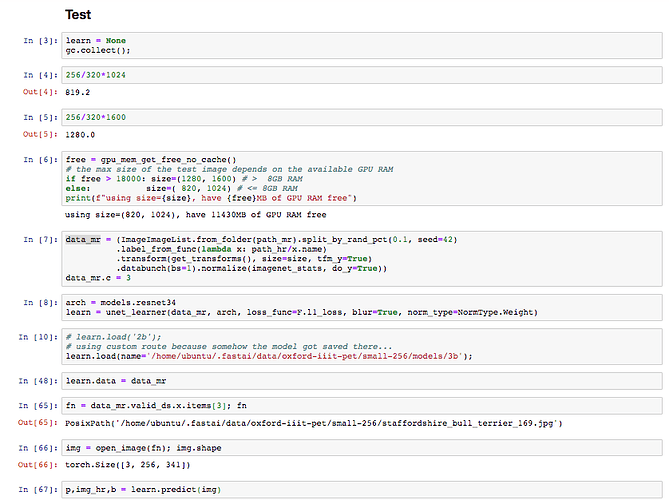

Same code as in the example notebook.

The same dog that looked nice during training:

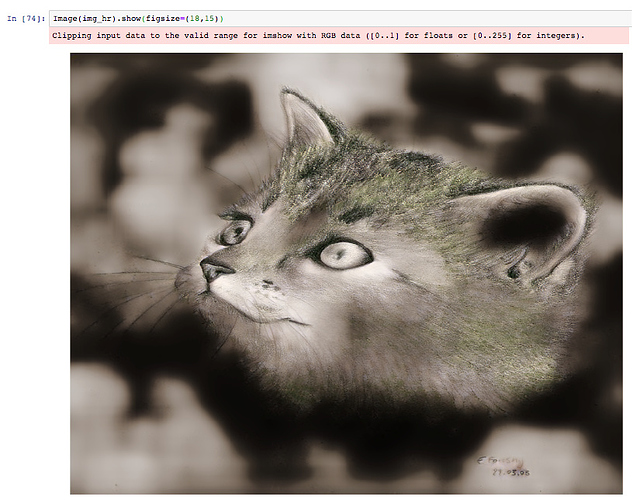

When trying on an out-of-dataset image, it also looks bad: