Are you guys messing with your machines or doing this in a container?

Btw, the official NVIDIA CUDA repository already has the nvidia-headless-410 package.

Has anybody tried those yet? In particular, can you run nvidia-settings on those to configure things like coolbits without needing to temporarily set up a real X server, run it to configure the card, and then shut it down?

Thanks.

This looks interesting: Lambda Stack: an AI software stack that's always up-to-date

What is Lambda Stack?

Lambda Stack provides an easy way to install popular Machine Learning frameworks. With Lambda Stack, you can use apt / aptitude to install TensorFlow, Keras, PyTorch, Caffe, Caffe 2, Theano, CUDA, cuDNN, and NVIDIA GPU drivers. If a new version of any framework is released, Lambda Stack manages the upgrade. You’ll never run into issues with your NVIDIA drivers again.

Lambda Stack is both a system wide package, a Dockerfile, and a Docker image.

No need to compromise. Lambda Stack is not only a system wide installation of all of your favorite frameworks and drivers but also a convenient “everything included” deep learning Docker image. Now you’ll have your team up and running with GPU-accelerated Docker images in minutes instead of weeks. To learn more about how to set up Lambda Stack GPU Dockerfiles check out our tutorial:

https://lambdalabs.com/blog/set-up-a-tensorflow-gpu-docker-container-using-lambda-stack-dockerfile/

…

Hi, I also have a working setup, which seems to differ from the methods shown here, so for completeness here it is (I just posted this as an answer in another thread, then thought it might be worth a topic of its own, only to find one existed already ;-) ). This uses no runfiles or compilation and works with everything “out of the box” (apt packages etc.), so it may be easier for Linux-inexperienced folks.

Tested with nvidia-driver-418 :

This one seems to work really well for me too…! No activity on my NVIDIA GPU for display, PyTorch variables are able to access it though, and I’m able to track usage using nvidia-smi. Thank you so much!

I tried the headless driver, but it straight up refused to detect the NVIDIA GPU, giving the same error: nvidia-smi can’t communicate with the GPU, please install the drivers again (paraphrasing). So I switched back to @marcmuc’s solution.

Hi @vikky2904,

Try this

Bare Metal

sudo apt install -y --no-install-recommends nvidia-headless-410 nvidia-utils-410

or

sudo apt install -y --no-install-recommends nvidia-headless-418 nvidia-utils-418

Containers

sudo apt install -y --no-install-recommends nvidia-headless-no-dkms-410 nvidia-utils-410

or

sudo apt install -y --no-install-recommends nvidia-headless-no-dkms-418 nvidia-utils-418
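If you script machine setup, the naming pattern in the commands above can be captured in a small helper. This is only a sketch: `headless_packages` and `apt_command` are made-up names, and it assumes the package names keep following the pattern shown in this post (headless plus utils, with the "no-dkms" variant for containers). It only builds the command; it does not run anything.

```python
def headless_packages(version, container=False):
    """Return the apt package names for a driver branch such as 410 or 418.

    Assumption: names follow the pattern from the post above, with the
    "no-dkms" headless variant being the one used inside containers.
    """
    version = str(version)
    if not version.isdigit():
        raise ValueError(f"driver branch should be numeric, got {version!r}")
    if container:
        headless = f"nvidia-headless-no-dkms-{version}"
    else:
        headless = f"nvidia-headless-{version}"
    return [headless, f"nvidia-utils-{version}"]


def apt_command(version, container=False):
    """Build the install command as an argument list (nothing is executed)."""
    return ["sudo", "apt", "install", "-y", "--no-install-recommends",
            *headless_packages(version, container)]


if __name__ == "__main__":
    print(" ".join(apt_command(418)))
    print(" ".join(apt_command(410, container=True)))
```

The returned list can be passed to `subprocess.run` if you want the script to perform the install itself.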

Hi @stas

I tried that before, but the problem is that nvidia-settings and nvidia-xconfig both depend on an X server.

But anybody who has an external cooling system can easily just use the headless driver, nvidia-smi, and the toolkit.

Thank you for the feedback, @willismar. It appears that moving to nvidia-headless is an almost pointless waste of time, then, if one doesn’t really gain anything. Let’s hope it’s just the beginning and they will eventually come around to fully supporting NVIDIA configuration without requiring an X server running on the card.

Just for the record, even the DGX Station I use at work has an X server (with Xfce) running on one of the Teslas, which shouldn’t be the case, since it somewhat hinders that specific card, making it a bit slower than the other three.

I just read the whole thread, and it is quite interesting. It seems you guys did a lot of research, and I think you can help me with my personal setup.

I have a PC with Ubuntu 18.04 and two 1080 Ti cards, while the display is driven by a tiny Radeon 4550 in an x4 slot.

Now, I was wondering if I can gain manual control of the fans, but setting the coolbits seems to be a tricky job, since 18.04 does not even have an /etc/X11/xorg.conf, and if you use nvidia-xconfig to set the coolbits, it creates such file doing a mess (you have to ssh and remove it).

Gaining such control would be really useful for me, since one of my cards regularly hits 89C during long unfrozen training sessions, while its fan scarcely exceeds 65%…

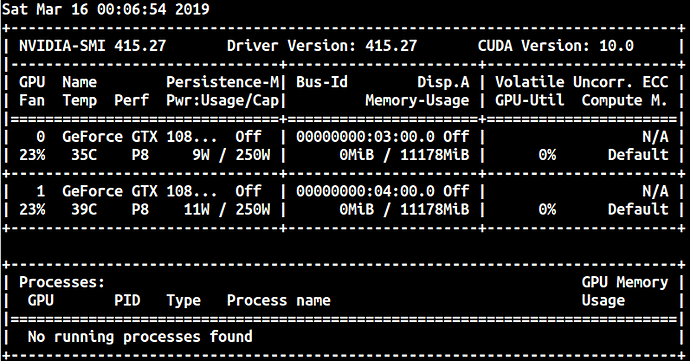

Speaking of temperatures, and since you mentioned power states, etc., look at this:

One of my cards always uses more power than the other (it is the one running hot under load), even at total idle, despite the two cards being identical (FE). Is there any possible explanation for such behaviour?

Note also that both of them are in P8 at idle, while P0 would be desirable.
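For comparing two cards like this over time, it can help to log temperature, fan speed, power draw, and power state per GPU instead of eyeballing the nvidia-smi table. The sketch below assumes nvidia-smi is on the PATH and that the driver reports the query fields listed in `QUERY_FIELDS`; the function names are made up for illustration. Values are kept as strings on purpose, because nvidia-smi can report a field as unavailable instead of returning a number.

```python
import csv
import io
import subprocess

# Fields requested from nvidia-smi; the order defines the column order.
QUERY_FIELDS = ["index", "name", "temperature.gpu", "fan.speed",
                "power.draw", "pstate"]


def parse_gpu_query(text):
    """Parse `--format=csv,noheader,nounits` output into one dict per GPU."""
    rows = []
    for record in csv.reader(io.StringIO(text), skipinitialspace=True):
        if not record:  # skip blank lines
            continue
        values = [value.strip() for value in record]
        rows.append(dict(zip(QUERY_FIELDS, values)))
    return rows


def query_gpus():
    """Run nvidia-smi and return the parsed rows (needs a working driver)."""
    cmd = ["nvidia-smi",
           "--query-gpu=" + ",".join(QUERY_FIELDS),
           "--format=csv,noheader,nounits"]
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return parse_gpu_query(result.stdout)


if __name__ == "__main__":
    for gpu in query_gpus():
        print(gpu)
```

Calling `query_gpus()` in a loop with a sleep, once at idle and once under load, would give a per-card log you can post here for comparison.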

Thanks a lot. I used the ‘this guide’ link in the first post at the top of the topic, and now I have all of this installed, along with an updated video card.

Some of the responses I got were not quite the same as those shown in the ‘this guide’ link; I will add them here in due time. Thanks again, this sort of guidance is priceless for the amount of time it saves.

I reinstalled my system after I messed it up by using prime-select intel to select the onboard graphics card: https://askubuntu.com/questions/980875/isolate-integrated-intel-igpu-from-nvidia-gpu

Switching then back to the GPU resulted in no picture on the monitor.

Then I reinstalled Ubuntu and tried to install CUDA following something similar to this guide for TF: https://www.pugetsystems.com/labs/hpc/Install-TensorFlow-with-GPU-Support-the-Easy-Way-on-Ubuntu-18-04-without-installing-CUDA-1170/

(Also to avoid using the GPU for the system monitor) But I only got CUDA false.

Finally, I reinstalled the entire system with Ubuntu and CUDA in the traditional way (reinstall ubuntu, reinstall cuda, reinstall pytorch/fastai): https://docs.nvidia.com/cuda/cuda-installation-guide-linux/index.html

And now I have cuda.

Is this the recommended way for the current PyTorch/fastai version?

A working anaconda package would make life really a lot easier…
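When PyTorch reports that CUDA is not available, it can help to separate "this PyTorch build has no CUDA support" from "the build has CUDA support but cannot reach the driver". The following is a minimal sketch of such a check; `cuda_report` is a made-up helper name, and the script only reads what PyTorch itself reports.

```python
def cuda_report():
    """Summarise what PyTorch can see; returns early if torch is missing."""
    try:
        import torch
    except ImportError:
        return {"torch_installed": False}

    available = torch.cuda.is_available()
    report = {
        "torch_installed": True,
        "torch_version": torch.__version__,
        # None here means a CPU-only PyTorch build.
        "built_with_cuda": torch.version.cuda,
        "cuda_available": available,
        "devices": [],
    }
    if available:
        report["devices"] = [torch.cuda.get_device_name(i)
                             for i in range(torch.cuda.device_count())]
    return report


if __name__ == "__main__":
    for key, value in cuda_report().items():
        print(f"{key}: {value}")
```

If `built_with_cuda` is set but `cuda_available` is False, the problem is more likely on the driver side than in the PyTorch install.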

Now, I was wondering if I can gain manual control of the fans, but setting the coolbits seems to be a tricky job, since 18.04 does not even have an /etc/X11/xorg.conf, and if you use nvidia-xconfig to set the coolbits, it creates such file doing a mess (you have to ssh and remove it).

I haven’t tried it, but check out:

Controlling GPU fan speed on a headless linux computer requires spoofing a display:

I found this while looking for a way to automatically switch Titan X to P12 when in idle, mine doesn’t go below P8, so it wastes power. But I didn’t find anything.
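For reference, once coolbits is enabled and an X display is attached to the card (real or spoofed), the fan is usually set through nvidia-settings attribute assignments. The sketch below only builds that command. Treat the details as assumptions: the attribute names `GPUFanControlState` and `GPUTargetFanSpeed` are what I believe recent drivers use (older ones may name the speed attribute differently), `:0` is a guess at the display, and `fan_speed_command` is a made-up helper name.

```python
def fan_speed_command(gpu_index, fan_index, percent, display=":0"):
    """Build an nvidia-settings call that sets a manual fan speed.

    Assumptions: coolbits already allows manual fan control, an X server
    is running on `display`, and the driver exposes the two attributes
    named below. Nothing is executed here.
    """
    if not 0 <= percent <= 100:
        raise ValueError("fan speed must be a percentage between 0 and 100")
    return [
        "nvidia-settings",
        "-c", display,                                        # control display
        "-a", f"[gpu:{gpu_index}]/GPUFanControlState=1",      # manual mode
        "-a", f"[fan:{fan_index}]/GPUTargetFanSpeed={percent}",
    ]


if __name__ == "__main__":
    print(" ".join(fan_speed_command(0, 0, 80)))
```

This does not remove the X server requirement discussed earlier in the thread; it only shows the shape of the command once that requirement is met.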

This worked for me too, with the minor tweak that the two blacklist files didn’t exist by default, so I had to create them and fill them with the contents you show in your post.

@marcmuc Thank you so much for your recipe with the out-of-the-box nvidia-settings-gui and the blacklist files. It has led me out of the nvidia valley of pain.

Many thanks!

Another confirmation that this is working on my machine too.

As someone else pointed out, those two files did not already exist, so I just created them.

When you have time, would you please explain a little why this works?

I recently went through the process of setting up my GPU and found this thread very helpful. For those who may also stumble upon this thread in the future, it seems NVIDIA has a suggested way of doing this. In the FAQ section here https://docs.nvidia.com/cuda/cuda-installation-guide-linux/index.html#faq if you follow the instructions under “How can I tell X to ignore a GPU for compute-only use?” you’ll see NVIDIA recommends creating/editing the /etc/X11/xorg.conf file.

I added the following to my /etc/X11/xorg.conf and it has fixed the issue. I’m running ubuntu 18.04 with intel cpu/igpu

Section "Device"
    Identifier "intel"
    Driver "intel"
    BusId "PCI:0:2:0"
EndSection
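One thing to watch when adapting the section above to another machine: lspci prints PCI addresses in hexadecimal (for example `0a:00.0`), while the xorg.conf bus ID is written in decimal. A small sketch of the conversion follows. The helper names are made up, and the PCI domain prefix, if present, is simply dropped, which assumes a single-domain system.

```python
import re

# Matches lspci-style addresses such as "00:02.0" or "0000:0a:00.0".
_LSPCI_ADDR = re.compile(
    r"^(?:(?P<domain>[0-9a-fA-F]{4}):)?"
    r"(?P<bus>[0-9a-fA-F]{2}):(?P<dev>[0-9a-fA-F]{2})\.(?P<fn>[0-7])$"
)


def lspci_to_xorg_busid(address):
    """Convert a hexadecimal lspci address to a decimal xorg.conf bus ID."""
    match = _LSPCI_ADDR.match(address.strip())
    if match is None:
        raise ValueError(f"not an lspci-style address: {address!r}")
    bus = int(match["bus"], 16)
    dev = int(match["dev"], 16)
    fn = int(match["fn"], 16)
    return f"PCI:{bus}:{dev}:{fn}"  # the domain, if any, is ignored


def device_section(identifier, driver, bus_id):
    """Render a Device section in the same layout as the one quoted above."""
    return "\n".join([
        'Section "Device"',
        f'    Identifier "{identifier}"',
        f'    Driver "{driver}"',
        f'    BusId "{bus_id}"',
        "EndSection",
    ])


if __name__ == "__main__":
    print(device_section("intel", "intel", lspci_to_xorg_busid("00:02.0")))
```

For the integrated Intel GPU at `00:02.0` this reproduces the section from the post; for a device on a bus above 9 the hexadecimal and decimal forms differ, which is where the mistake usually happens.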