I have trained a model to tell tennis balls from cricket balls (Part 1, Lecture 2). Now if I show it a picture of, let’s say, a dog, I would like it to tell me “I don’t know” instead of picking the “tallest amongst the midgets”.

You could use sigmoid with a threshold instead of softmax.

(see https://github.com/fastai/course-v3/blob/master/nbs/dl1/lesson3-planet.ipynb)
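To make the suggestion a bit more concrete, here is a minimal sketch in plain Python (not the fastai code from the notebook). It assumes the model outputs one raw activation (logit) per class; the class names, the `predict_with_threshold` helper and the 0.5 cut-off are all just illustrative choices. Because each class gets its own sigmoid score, nothing forces the scores to add up to 1, so every class can fall below the threshold at once.

```python
import math

# Assumed class names for illustration only.
CLASSES = ["tennis_ball", "cricket_ball"]

def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def predict_with_threshold(logits, classes=CLASSES, thresh=0.5):
    """Score each class independently; abstain when no score reaches `thresh`."""
    scores = {c: sigmoid(z) for c, z in zip(classes, logits)}
    picked = [c for c, s in scores.items() if s >= thresh]
    return (picked if picked else "I don't know"), scores

# Hypothetical logits for a ball picture and for an unrelated picture.
print(predict_with_threshold([3.0, -2.0])[0])
print(predict_with_threshold([-3.0, -2.5])[0])
```

Raising `thresh` makes the model abstain more often; the right value is something you would tune on a validation set.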

Thanks… just started off with Lesson 3… So I guess I asked the question too early!

Experts, is anyone willing to lend a hand here? Even after going through Lesson 3 (which covers multi-label classification), I can’t find a way to come back to Lesson 2 and my predictions above and make the system predict only if it’s really confident.

Someone suggested sigmoid with a threshold; however, I’m not sure how to change the Lesson 2 code to do that.

I was hoping the predictions would give scores, and they don’t!

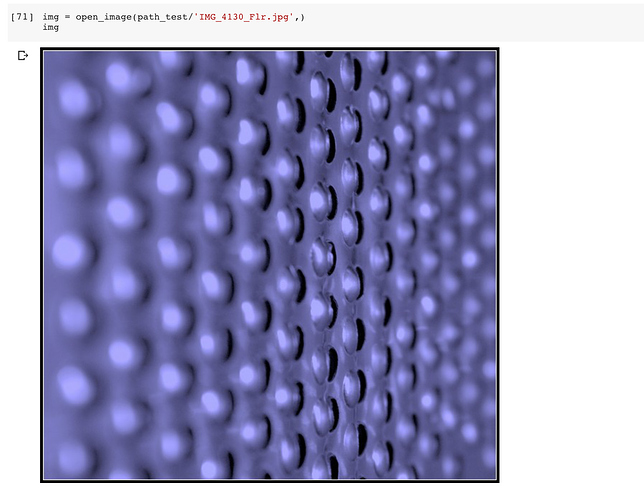

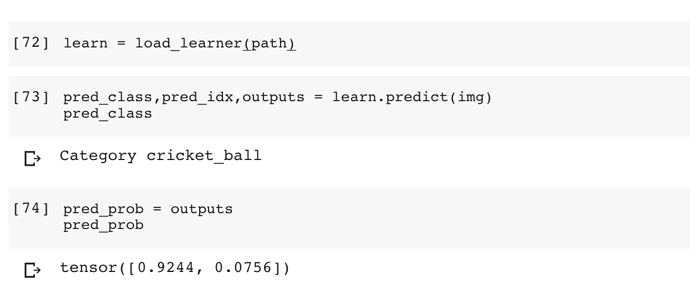

This was the image I tested,

and the prediction is pretty confident (and wrong).

Is it possible that my training set is too small?

I was thinking the same, and I believe that even if you include all the tennis and cricket balls in the world in your training set, your model will still give a prediction. It was designed to give an answer anyway.

But maybe there’s a way to modify your network to include the “I’m not sure” class and use a threshold. That would be a multiclass problem - Tennis ball, cricket ball and I’m not sure.

I’m new to fastai, so you should take a look at the documentation of the activation functions to understand how they convert the activations from previous layers. Note that softmax and sigmoid do not apply a threshold themselves; they only turn activations into scores between 0 and 1. The cut-off (often 0.5) is applied to those scores afterwards, so you could choose your own value there.

Will give it a try… if you find a way, let me know

I think the problem is that you don’t have any examples like this in your training set. So you only learn what tennis balls and cricket balls are, given that all you are looking at is images of these two objects. What you could do is include random images (maybe from ImageNet or something similar) that do not contain either of the two balls and give them the labels 0,0.
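As a rough illustration of what "labels 0,0" means, here is a small sketch in plain Python. The file names and the `multi_hot` helper are made up for the example; the point is only that each image gets one 0/1 target per class, and a background image simply has an empty label list.

```python
# Assumed class names for illustration only.
CLASSES = ["tennis_ball", "cricket_ball"]

def multi_hot(labels, classes=CLASSES):
    """Turn a list of label names into one 0/1 target per class."""
    return [1.0 if c in labels else 0.0 for c in classes]

# Hypothetical training samples: (file name, labels present in the image).
samples = [
    ("tennis_01.jpg", ["tennis_ball"]),
    ("cricket_01.jpg", ["cricket_ball"]),
    ("dog_01.jpg", []),  # background image: neither ball is present
]

targets = {name: multi_hot(labels) for name, labels in samples}
for name, target in targets.items():
    print(name, target)
```

With targets like these the problem becomes multi-label, so it pairs naturally with a sigmoid output per class rather than a softmax over both.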

thanks… will try that

@jeremy: while going through lesson 10, I think I found the answer, but don’t have the skills currently to implement it.

I think the reason for the issue is that softmax exaggerates the differences between activations and always makes the probabilities sum to 1, which skews the results. The way to handle it would be to make the last layer a “binomial” (sigmoid) one instead of softmax and then run an if check: if one category is above 60% and all others are below 40%, show that result; otherwise give multiple values; and if all are really low, say “I don’t know”?

If the approach is correct, can someone guide me on how to implement it (way back in the Lesson 2 classification notebook)?
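The if check described above could look roughly like this in plain Python. This is only a sketch of the decision rule, not of the model change: it assumes you already have one independent (sigmoid-style) probability per class, and the `decide` helper with its 0.6 and 0.4 cut-offs just mirrors the numbers in the post.

```python
def decide(probs, hi=0.6, lo=0.4):
    """Apply the rule from the post to a dict of class -> probability.

    - one class above `hi` and every other class below `lo`: return that class
    - otherwise, if any class is at or above `lo`: return all such candidates
    - otherwise: abstain
    """
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    top_name, top_p = ranked[0]
    others = [p for _, p in ranked[1:]]

    if top_p > hi and all(p < lo for p in others):
        return top_name

    # Ambiguous or only weakly confident: list every plausible class.
    candidates = [name for name, p in ranked if p >= lo]
    if candidates:
        return candidates

    return "I don't know"

# Hypothetical probabilities for three different pictures.
print(decide({"tennis_ball": 0.9, "cricket_ball": 0.1}))
print(decide({"tennis_ball": 0.7, "cricket_ball": 0.55}))
print(decide({"tennis_ball": 0.2, "cricket_ball": 0.1}))
```

Note that a single class sitting between the two cut-offs comes back as a one-item list, which you can read as "maybe this, but not confidently".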


I wrote about the concepts of open-set recognition and open-world recognition in a similar thread here: open-set thread

I have a category I called “nothing” in my spectrogram classifications; see the demo here: https://manatee-chat-demo.appspot.com/ The two other categories of interest are “calls” and “mastication”.

It is basically the category for stuff (signals in my case) I am not interested in, something “other” than the stuff I am interested in. After nearly a year of stumbling and bumbling around, I figured out that I just need this category to be the largest in my training set. It is pretty good now: 95% on previously unseen spectrograms. Curiously, I also found that if you upload some random image there, like a cat or some graph, it will classify it as “nothing”, which is exactly what I need.

Hi kodzaks hope you are having a delightful day!

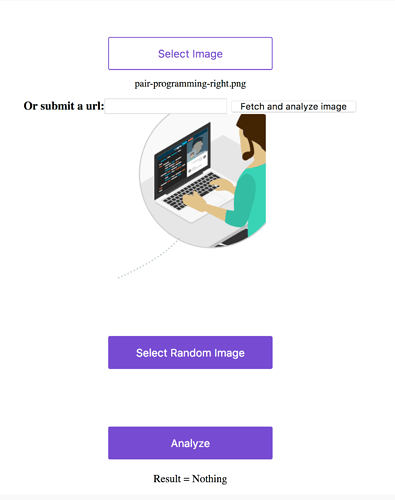

This sounds very interesting, I visited your website and put in some random images (see image below)

and indeed it classifies them as Nothing.

Well done, a fantastic example of perseverance! I will try it with my next model.

Cheers mrfabulous1

Thank you! It is still a work in progress, but I am getting there.

Hi saeedfb hope you are having a tremendous day!

It would be great if you could use the threads below, as there are more people trying to solve this problem. Someone may have an idea that can help you. You could also be the one to help solve the problem.

Also, in the above thread kodzaks seems to be having some success by using an empty class.

Cheers mrfabulous1

Hi muellerzr hope you are having a glorious day!

The two links below are fundamentally describing the same thing. What is the process or etiquette for combining them into one thread, if there is one?

This particular subject intrigues me, and I would like posts on it to go to a central thread that everyone could use.

[Still Open] How to I tell my model to be honest and say “I don’t know”

cheers mrfabulous1

@mrfabulous1 I’d make a big thread just with a link to all of them (also I cover this in my study group coming up too), along with the general method each describes and maybe some ‘hot’ keywords for them to come up easily with!

Cool I’ll be on that course!

Cheers mrfabulous1

Thanks @mrfabulous1! I did some research on the net. It looks like it is possible to get the output of the neural net as a probability distribution rather than a fixed number (category). The method is called Bayesian deep learning: you place an uncertainty distribution over your model parameters and run the model several times. If the output probability from the model is low, it can then generate an output saying that it doesn’t know.

It would be great if the experts here could elaborate more on the Bayesian techniques and how they could be integrated, perhaps with a short course/notebook, etc.
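For anyone who wants a feel for the "run the model several times" idea, below is a toy sketch in plain Python. It uses Monte Carlo dropout, which is one common approximation and is my assumption here (the post does not name a specific technique). The tiny logistic "model", its weights, and the `min_conf` and `max_std` cut-offs are all invented for illustration; a real version would keep dropout active at inference time in the actual network.

```python
import math
import random

def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def stochastic_forward(features, weights, bias, drop_p, rng):
    """One pass of a toy logistic model with dropout applied to its inputs."""
    total = bias
    for x, w in zip(features, weights):
        if rng.random() >= drop_p:           # this input survives dropout
            total += w * x / (1.0 - drop_p)  # inverted-dropout rescaling
    return sigmoid(total)

def mc_predict(features, weights, bias, n_passes=100, drop_p=0.5, seed=0):
    """Run many stochastic passes; return mean and std of the probability."""
    rng = random.Random(seed)
    samples = [stochastic_forward(features, weights, bias, drop_p, rng)
               for _ in range(n_passes)]
    mean = sum(samples) / n_passes
    var = sum((s - mean) ** 2 for s in samples) / n_passes
    return mean, math.sqrt(var)

def predict_or_abstain(mean, std, min_conf=0.8, max_std=0.15):
    """Answer only when the passes agree and the mean is far from 0.5."""
    if max(mean, 1.0 - mean) >= min_conf and std <= max_std:
        return "class_1" if mean >= 0.5 else "class_0"
    return "I don't know"

# Hypothetical features and weights.
mean, std = mc_predict([1.2, -0.4, 2.0], [0.8, 0.5, 1.1], bias=-0.5)
print(round(mean, 3), round(std, 3), predict_or_abstain(mean, std))
```

The spread across passes (`std`) is what the single softmax or sigmoid score does not give you: a confident-looking mean with a large spread is a hint that the input is unlike the training data.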

There’s a few examples of this specifically right here

I’m playing around with both at the moment. I’ve tried applying a BCEWithLogitsLoss approach (turning single-label into multi-label) with good results, and I plan on playing with this next and perhaps combining both.
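For readers unfamiliar with that loss, here is a small plain-Python sketch of what binary cross-entropy on logits computes, averaged over classes. It is only meant to show the arithmetic; in practice you would use PyTorch's `torch.nn.BCEWithLogitsLoss` rather than this hypothetical `bce_with_logits` helper. The logits and the 0,0 target for a "neither ball" image are made-up values.

```python
import math

def bce_with_logits(logits, targets):
    """Mean binary cross-entropy over classes, computed from raw logits.

    Uses the stable form max(z, 0) - z*y + log(1 + exp(-|z|)),
    which equals -[y*log(sigmoid(z)) + (1-y)*log(1 - sigmoid(z))].
    """
    losses = [
        max(z, 0.0) - z * y + math.log1p(math.exp(-abs(z)))
        for z, y in zip(logits, targets)
    ]
    return sum(losses) / len(losses)

# A "neither ball" image has target [0, 0].
neither = [0.0, 0.0]
loss_good = bce_with_logits([-4.0, -4.0], neither)  # model says "no" to both
loss_bad = bce_with_logits([4.0, -4.0], neither)    # model insists on tennis ball
print(loss_good < loss_bad)
```

Because each class is scored on its own, the loss rewards the model for pushing both outputs down on images that contain neither ball, which softmax with cross-entropy cannot express.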

It would be great if you could share the results of both approaches if you find time. It would be useful for many.