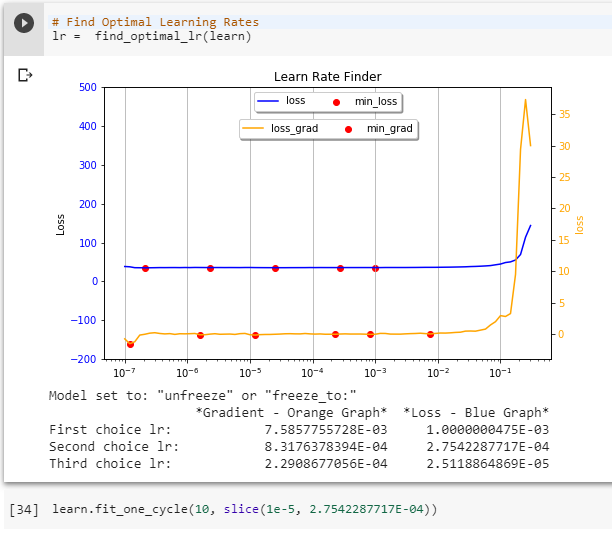

Thank you for pointing out the errors, Allan. I believe they are leftovers from my debugging frenzy.

In the mean time, I discovered another bug of my own:

Line 105: if is_unfreeze(learn):. The correct statement should be: if is_unfreeze(learner):

As it is, the method works because learn is a valid variable used elsewhere in the notebook, but if you lift the find_optimal_lr method for use in another notebook, it will fail.

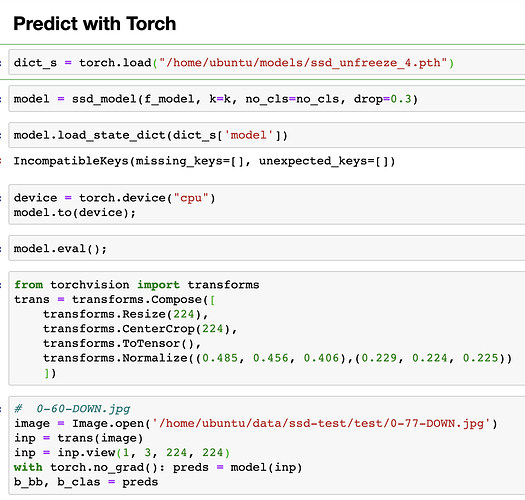

If you are interested in object detection, you’d know that SSD is fast and relatively accurate. However, it is not very good detecting small objects in images. I have been playing with the model and found that by adding a couple of extra conv2 layers to the layers that detect small objects, I can improve the model’s small object detection power. I call them “booster layers” .Try it. Replace the ssd_model method with this one:

class ssd_model(nn.Module):

def __init__(self, arch=models.resnet34, k=9 , drop=0.4, no_cls=21):

super().__init__()

self.k = k

self.body = create_body(arch)

self.drop = nn.Dropout(0.4) # it was 0.25

self.std_conv_0 = conv2_std_layer(num_features_model(self.body), 256, drop=drop,stride=1)

# Dimension-reducing layers

self.std_conv_1 = conv2_std_layer(256, 256, drop=drop, stride=2) # 4 by 4 layer

self.std_conv_1_1 = conv_layer(256, 256, stride=1) # Booster layer

self.std_conv_2 = conv2_std_layer(256, 256, drop=drop, stride=2) # 2 by 2 layer

self.std_conv_1_2 = conv_layer(256, 256, stride=1) # Booster layer

self.std_conv_3 = conv2_std_layer(256, 256, drop=drop, stride=2) # 1 by 1 layer

# SSD layers

self.ssd_conv_1 = conv2_ssd_layer(256, k=self.k, no_cls=no_cls)

self.ssd_conv_2 = conv2_ssd_layer(256, k=self.k, no_cls=no_cls)

self.ssd_conv_3 = conv2_ssd_layer(256, k=self.k, no_cls=no_cls)

def forward(self, xb):

xb = self.drop(F.relu(self.body(xb)))

xb = self.std_conv_0(xb)

xb = self.std_conv_1(xb)

xb = self.std_conv_1_1(xb)

bb1, cls1 = self.ssd_conv_1(xb) # 4 x 4

xb = self.std_conv_2(xb)

xb = self.std_conv_1_2(xb)

bb2, cls2 = self.ssd_conv_2(xb) # 2 x 2

xb = self.std_conv_3(xb)

bb3, cls3 = self.ssd_conv_3(xb) # 1 x 1

return [torch.cat([bb1, bb2, bb3], dim=1),

torch.cat([cls1, cls2, cls3], dim=1)]