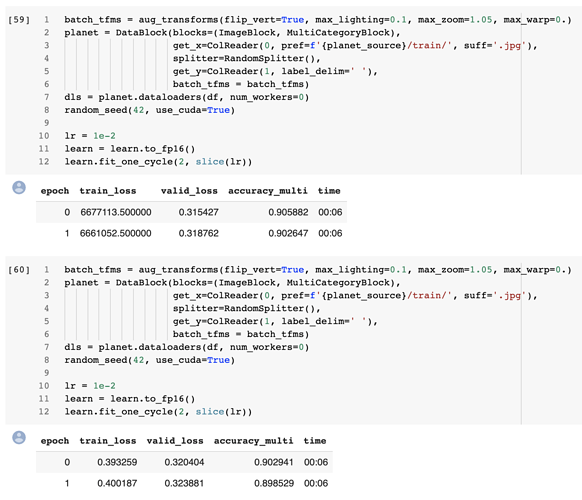

I would like to be confident of measures between different settings and models. However, even when initializing all the seeds I know about, training loss varies between identical runs.

def random_seed(seed_value, use_cuda): #gleaned from multiple forum posts

np.random.seed(seed_value) # cpu vars

torch.manual_seed(seed_value) # cpu vars

random.seed(seed_value) # Python

if use_cuda: torch.cuda.manual_seed_all(seed_value) # gpu

random_seed(42,True)

data = ImageDataBunch.from_csv(csv_labels=LABELS, suffix='.tif', path=TRAIN, ds_tfms=None, bs=BATCH_SIZE, size=96).normalize(imagenet_stats)

data.show_batch(rows=2, figsize=(96,96))

learn = create_cnn(data, arch, metrics=error_rate) #resnet34

lr = 1e-2

learn.fit_one_cycle(1, max_lr=lr)

The training losses for three runs are: .168973, .169944, .167258

Images displayed by show_batch appear to be in the same order and look identical (to the eye).

So what’s going on? It seems that if all the seeds are initialized, the results should be equal. A 1% variation over a single epoch is enough to affect my confidence in comparing various settings.

Could there be randomness in the GPU calculations? Or something related to CPU cores?

Thanks for any insight and advice.

fastai 1.0.30

Nvidia 1070