cwerner

November 12, 2018, 12:47pm

1

Hi.

I’m trying to implement Grad-CAM as nicely shown by @henripal and @MicPie here: https://forums.fast.ai/t/visualizing-intermediate-layers-a-la-zeiler-and-fergus/28140/25?u=cwerner

@henripal is using the learner to access an image batch:

next(iter(learn.data.train_dl))

In my case I don’t have the training data anymore. I load the model and run inference on a single image (so far with predict()) like so:

MODEL = 'stage-2.pth'

path = Path("/tmp")

data = ImageDataBunch.single_from_classes(path, labels, tfms=get_transforms(max_warp=0.0), size=299).normalize(imagenet_stats)

learn = create_cnn(data, models.resnet50)

learn.model.load_state_dict(

torch.load("models/%s" % MODEL, map_location="cpu")

)

I set up the hooks as suggested in the post, but I can’t make it work with my setup… How do I go from my image file to a tensor that fits the model (ResNet-50, 299px)?

In the example, out = learn.model(img_tensor) is used …

I somehow need to go from my image to a tensor in the right format… Any kind soul know where I should look?

henripal

November 12, 2018, 1:29pm

2

Can you share a minimum working example NB?

cwerner

November 12, 2018, 1:41pm

3

Yeah.

I’ll clean up my mess a bit and link it…

cwerner

November 12, 2018, 1:54pm

4

Here it is…

I’m not sure about the image loading (I used a hack, but I don’t think it is the right way to do it).

inference_gradcam-Copy1.ipynb

Notebook preview: "Guitar Classifier - Inference and cam" (rough draft)

The model and test image are at this Dropbox link: https://www.dropbox.com/sh/wy5uhhxmxghxci0/AADbPnM5-6PcJ1GvRlI6nbJOa?dl=0

henripal

November 12, 2018, 3:47pm

5

Here’s what I did to make it work:

First, I made sure the tensor transformation is the correct one by simply adding your image to the dataset:

learn.data.valid_ds.set_item(img)

tensor_img = list(learn.dl())[0][0]

out = learn.model(tensor_img)
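For orientation, the tensor that comes out of the data loader can be approximated by hand. The following is a minimal sketch in plain NumPy, assuming the image has already been resized to 299 x 299 and that the only remaining steps are scaling to [0, 1], normalizing with the ImageNet channel statistics, and moving channels first. The helper name `to_model_input` and the random stand-in image are hypothetical and not part of fastai.

```python
import numpy as np

# ImageNet channel statistics (the usual values behind imagenet_stats)
IMAGENET_MEAN = np.array([0.485, 0.456, 0.406], dtype=np.float32)
IMAGENET_STD = np.array([0.229, 0.224, 0.225], dtype=np.float32)

def to_model_input(img_hwc_uint8):
    """Turn an H x W x 3 uint8 RGB image into a 1 x 3 x H x W float batch."""
    x = img_hwc_uint8.astype(np.float32) / 255.0   # scale to [0, 1]
    x = (x - IMAGENET_MEAN) / IMAGENET_STD          # per-channel normalization
    x = np.transpose(x, (2, 0, 1))                  # HWC -> CHW
    return x[None]                                  # add the batch dimension

# Stand-in for an image already resized to 299 x 299 (hypothetical data)
rng = np.random.default_rng(0)
fake_img = rng.integers(0, 256, size=(299, 299, 3), dtype=np.uint8)
batch = to_model_input(fake_img)
print(batch.shape, batch.dtype)
```

Going through the dataset, as in the snippet above, has the advantage that the same transforms used for validation are applied automatically.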

I’m not super sure, but I think the adaptive pooling is messing with my hardcoded dimensions, so I corrected the reshapes as follows:

_, n, w, h = gradients.shape

fmaps = fmap_hook.stored.cpu().numpy().reshape(n, w, h) # reshape activations
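Once gradients and activations have the same (channels, height, width) shape, the Grad-CAM map itself is a short computation: average each channel's gradient over the spatial positions, use those averages as weights for the feature maps, and pass the weighted sum through a ReLU. Here is a minimal NumPy sketch with random stand-in arrays; the shapes and the names `stored_grad` and `stored_fmap` are assumptions, not values taken from the notebook. PyTorch lays activations out as (batch, channels, height, width), so height comes before width, which makes no difference for square maps.

```python
import numpy as np

def grad_cam(fmaps, gradients):
    """fmaps, gradients: arrays of shape (channels, height, width)."""
    weights = gradients.mean(axis=(1, 2))                # one weight per channel
    cam = (weights[:, None, None] * fmaps).sum(axis=0)   # weighted sum of feature maps
    return np.maximum(cam, 0)                            # ReLU keeps positive evidence only

# Random stand-ins for what the hooks would have stored (hypothetical shapes)
rng = np.random.default_rng(0)
stored_grad = rng.normal(size=(1, 2048, 10, 10))
stored_fmap = rng.normal(size=(1, 2048, 10, 10))

# Read the dimensions from the array instead of hardcoding them
_, n, h, w = stored_grad.shape
cam = grad_cam(stored_fmap.reshape(n, h, w), stored_grad.reshape(n, h, w))
print(cam.shape)
```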

Here’s the full working example:

guitar_gradcam.ipynb

cwerner

November 12, 2018, 5:00pm

6

Oh wow. Super cool. Thanks for that…

Another silly question, if you don’t mind: I’m trying to get the original image back so I can overlay it with the Grad-CAM result…

However, I’m not sure if I calculate it correctly from the tensor:

t = np.transpose(tensor_img.squeeze(), (1, 2, 0))

print(t.min(), t.max())

plt.imshow((t - t.min())/t.max())

The colors seem to be off. Not sure if there is actually a transformation, or if I’m not de-normalizing correctly. Is there a more elegant solution for this?

henripal

November 12, 2018, 5:03pm

7

Your max is different after doing t - t.min() I think - you probably have some pixels with >1.0 values
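A tiny numeric example makes the problem visible. It uses three made-up values standing in for normalized pixels: after subtracting the minimum, the largest value equals the full range, so dividing by the old maximum leaves values above 1, while dividing by the range does not.

```python
import numpy as np

t = np.array([-2.0, 0.0, 2.0])   # made-up stand-in for normalized pixel values

shifted = t - t.min()                   # values 0, 2, 4: the maximum is now 4, not 2
wrong = shifted / t.max()               # divides by the old maximum (2)
right = shifted / (t.max() - t.min())   # divides by the actual range (4)

print(wrong.max())   # larger than 1, so imshow clips the colors
print(right.min(), right.max())
```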

cwerner

November 12, 2018, 5:26pm

8

Sure thing. This is correct:

t = (t - t.min()) / (t.max() - t.min())

Thanks again for helping out
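Min-max rescaling makes the tensor displayable, but it stretches each image to its own range, which can still shift the colors slightly. Because the data was normalized with imagenet_stats, another option is to invert that normalization. The following is a minimal NumPy sketch, assuming a (3, H, W) array and no color transforms other than the normalization; the helper name `denormalize` is hypothetical.

```python
import numpy as np

IMAGENET_MEAN = np.array([0.485, 0.456, 0.406], dtype=np.float32)
IMAGENET_STD = np.array([0.229, 0.224, 0.225], dtype=np.float32)

def denormalize(chw):
    """Undo per-channel normalization; returns an H x W x 3 array in [0, 1]."""
    hwc = np.transpose(chw, (1, 2, 0))                 # CHW -> HWC for plotting
    return np.clip(hwc * IMAGENET_STD + IMAGENET_MEAN, 0.0, 1.0)

# Round trip on synthetic data: normalize, then de-normalize
rng = np.random.default_rng(0)
original = rng.random((299, 299, 3), dtype=np.float32)
normalized = np.transpose((original - IMAGENET_MEAN) / IMAGENET_STD, (2, 0, 1))
restored = denormalize(normalized)
print(np.allclose(restored, original, atol=1e-5))
```

With a PyTorch tensor, converting it first with `tensor_img.squeeze().cpu().numpy()` would give an array this function accepts.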

cwerner

November 12, 2018, 9:18pm

9

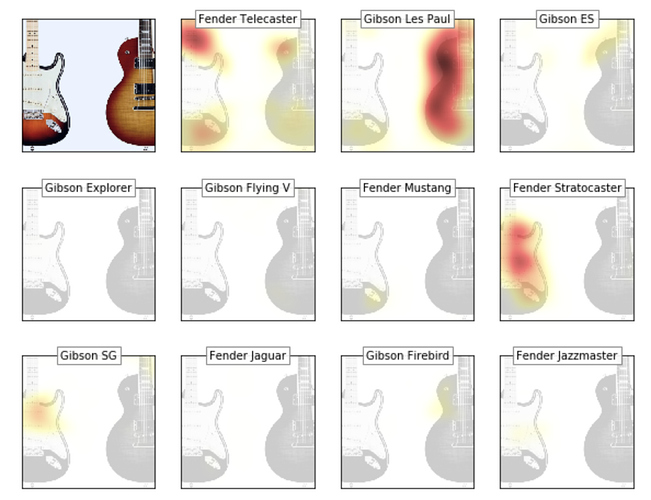

As a follow-up, here is the updated version:

inference_and_gradcam.ipynb

Notebook preview: "Guitar Classifier - Inference and cam" (rough draft, based on https://github.com/henripal/maps/blob/master/nbs/big_resnet50-interpret-gradcam.ipynb)

Unfortunately, I am stuck again. In the second gist I try to get Grad-CAMs for all 11 classes…

However, the CAMs I get look wrong. I guess something is up with the way I store the backprop results?
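The thread leaves this question open. As a minimal sketch of one way to compute a Grad-CAM per class, assuming plain PyTorch and a tiny stand-in network (hypothetical, not the ResNet-50 from the notebook): run a fresh forward pass and call backward on one class score at a time, so the stored gradient always belongs to the class being visualized. Whether stale or mixed-up gradients are the actual cause of the bad CAMs here is an assumption.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Tiny stand-in network (hypothetical); the notebook uses a fastai ResNet-50
features = nn.Sequential(nn.Conv2d(3, 8, 3, padding=1), nn.ReLU())
head = nn.Sequential(nn.AdaptiveAvgPool2d(1), nn.Flatten(), nn.Linear(8, 11))
model = nn.Sequential(features, head).eval()

stored = {}

def save_fmap(module, inputs, output):
    # Keep the activations and attach a tensor hook that records their gradient
    stored["fmap"] = output
    output.register_hook(lambda grad: stored.__setitem__("grad", grad))

handle = features.register_forward_hook(save_fmap)

x = torch.randn(1, 3, 16, 16)        # stand-in for the preprocessed image
cams = []
for cls in range(11):
    out = model(x)                   # fresh forward pass, fresh graph
    model.zero_grad()                # clear accumulated parameter gradients
    out[0, cls].backward()           # backprop the score of this class only
    grad = stored["grad"][0]         # (channels, height, width)
    fmap = stored["fmap"][0].detach()
    weights = grad.mean(dim=(1, 2))  # one weight per channel
    cams.append(torch.relu((weights[:, None, None] * fmap).sum(dim=0)))

handle.remove()
cams = torch.stack(cams)
print(cams.shape)
```

A fresh forward pass per class costs more compute than reusing one graph with `retain_graph=True`, but it keeps each class's stored gradient clearly separated.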

inference_and_gradcam_bad.ipynb
