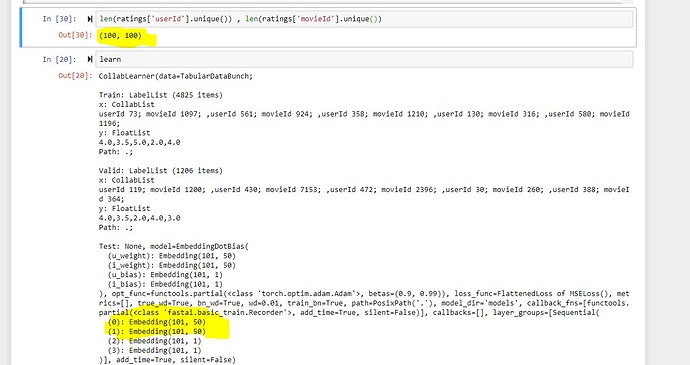

As shown in the highlighted parts of the image, user id and movie id each have 100 distinct elements. The width of the embedding matrix is 50, but its number of rows is 101. Shouldn't it be 100, since there are 100 elements?

Could someone please explain this discrepancy?

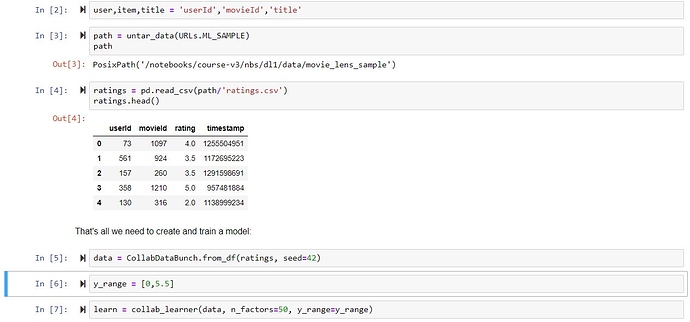

Can you please add the code you used to create the learn object?

Most likely this is due to an additional #na# category, which takes up one extra row in the embedding matrix. It is added in case a value of that variable is ever missing. Hence 101, not 100.
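A minimal pure-Python sketch of where the extra row comes from. It assumes, as fastai's categorical preprocessing appears to do, that #na# is reserved at index 0 of each variable's vocabulary. The helper name `build_vocab` and the generated ids are hypothetical, and the list of lists stands in for an `nn.Embedding` weight of shape `(len(vocab), 50)`; this is an illustration, not fastai's actual code.

```python
import random

def build_vocab(values):
    """Hypothetical helper: reserve index 0 for '#na#', then add each distinct observed value."""
    distinct = sorted({v for v in values if v is not None})
    return ['#na#'] + distinct

# Hypothetical ratings data: 100 distinct user ids, each appearing several times.
user_ids = [i % 100 + 1 for i in range(1000)]
vocab = build_vocab(user_ids)

emb_width = 50
# One row of emb_width small random numbers per vocabulary entry (stand-in for nn.Embedding weights).
random.seed(0)
emb_table = [[random.gauss(0.0, 0.01) for _ in range(emb_width)] for _ in vocab]

print(len(set(user_ids)))                  # 100
print(len(emb_table), len(emb_table[0]))   # 101 50
```

So 100 distinct ids plus the reserved #na# entry give a 101 x 50 table, even though every real id still gets exactly one row of 50 numbers.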

But 100 is the number of distinct elements in the user column or in the movie column, and for each of those 100 elements there will be an “array” of 50 numbers. So where does the #na# column fit in?

So could you please elaborate a little more?

Sure. Let’s look at the adult tabular dataset, as I think it’s a good example of how the embeddings are created and used. In the case of adult, the model’s embedding matrices look like this:

```
(embeds): ModuleList(
  (0): Embedding(10, 6)
  (1): Embedding(17, 8)
  (2): Embedding(8, 5)
  (3): Embedding(16, 8)
  (4): Embedding(7, 5)
  (5): Embedding(6, 4)
  (6): Embedding(3, 3)
)
```

So I would expect my categorical variables to have 10, 17, 8, etc. as their cardinalities (number of unique values). However! If I run `df[cat_names].nunique()` (after re-running the cell that defines `cat_names`, so that it no longer includes my `_na` columns), I get:

```
workclass          9
education         16
marital-status     7
occupation        15
relationship       6
race               5
```

That doesn’t align with our embedding matrices: each one has one more row than its column has unique values! So why? Each embedding matrix reserves one extra row, tagged #na#, for the case where a value of that categorical variable is missing. That is why you are seeing n+1.
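The n+1 relationship can be checked directly against the numbers posted in this thread. This sketch simply hard-codes the `nunique()` counts and the first dimension of embeddings (0) to (5). The seventh embedding, `Embedding(3, 3)`, is left out because it has no entry in the `nunique()` list; it is presumably one of the excluded `_na` indicator columns (two values, True/False, plus #na# would give 3 rows), but that is an assumption.

```python
# Distinct values per column, as reported by df[cat_names].nunique() in the post.
cardinalities = {
    'workclass': 9,
    'education': 16,
    'marital-status': 7,
    'occupation': 15,
    'relationship': 6,
    'race': 5,
}

# Number of rows of embeds (0)..(5) from the model printout in the post.
embedding_rows = [10, 17, 8, 16, 7, 6]

for (name, n), rows in zip(cardinalities.items(), embedding_rows):
    # Each matrix should have exactly one extra row reserved for '#na#'.
    print(f'{name:<15} nunique={n:<3} rows={rows:<3} n+1 == rows: {n + 1 == rows}')
```

Every line reports `True`, which is the "one extra slot per variable" pattern described above.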

So, just to clear it up:

Effectively, a new category is added to my variable that indicates whether the value is empty, and if it is empty, the “array” of numbers belonging to that new category is the one chosen.

So is my interpretation right?

That is correct.
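The behavior confirmed here (an empty value gets its own #na# row, and that row's vector is what the model looks up) can be sketched as below. This is a hypothetical pure-Python illustration, not fastai's code: `encode` and `lookup` are made-up helper names, the tiny `workclass` vocabulary and vector values are invented, and treating values never seen during training the same as missing ones is an assumption.

```python
# Hypothetical vocabulary for one categorical column; index 0 is reserved for '#na#'.
vocab = ['#na#', 'Private', 'Self-emp', 'State-gov']
index_of = {v: i for i, v in enumerate(vocab)}

# Tiny embedding table: one 3-number vector per vocabulary entry (width 3 for readability).
emb_table = [
    [0.00, 0.00, 0.00],    # row 0: '#na#' (these numbers would be learned in a real model)
    [0.10, -0.20, 0.30],   # row 1: 'Private'
    [0.40, 0.50, -0.60],   # row 2: 'Self-emp'
    [-0.70, 0.80, 0.90],   # row 3: 'State-gov'
]

def encode(value):
    """Map a raw value to its row index; None and unseen values are not in index_of, so both fall back to 0."""
    return index_of.get(value, 0)

def lookup(value):
    """Return the embedding vector the model would use for this value."""
    return emb_table[encode(value)]

for raw in ['Private', None, 'Never-worked']:
    print(repr(raw), '->', encode(raw), lookup(raw))
```

An empty value and the unseen `'Never-worked'` both land on row 0, so they share the single #na# vector instead of needing a row of their own.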

Thanks a lot for explaining it to me!