I’ve been working on scaling up my model and have been making steady progress, but I’ve run into something that doesn’t seem right.

In my understanding, the following two training runs should produce equivalent results.

- 1 GPU, Batch Size = 160

- 8 GPUs, Batch Size = 20 (per GPU)

As I understand it, the gradients are accumulated on each GPU and then summed together, so it shouldn’t matter whether the batch is processed on one GPU or spread across 8. (Is that right?)
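The equivalence assumed above can be checked on a toy problem. This is only a minimal sketch in plain Python, using a one-parameter linear regression rather than the actual language model; `mse_grad`, the data, and the starting weight are all made up for illustration. It shows that the gradient of a mean loss over 160 samples matches the average of the gradients from 8 shards of 20 samples. As far as I know, PyTorch's `DistributedDataParallel` averages gradients across processes rather than summing them; a plain sum would come out 8 times larger.

```python
import random


def mse_grad(w, xs, ys):
    """Gradient of the mean squared error of y ~ w * x with respect to w."""
    n = len(xs)
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n


# Toy data (hypothetical): 160 samples of y = 3x plus a little noise.
random.seed(0)
xs = [random.uniform(-1.0, 1.0) for _ in range(160)]
ys = [3.0 * x + random.gauss(0.0, 0.1) for x in xs]
w = 0.5  # arbitrary starting weight

# One worker, batch size 160.
single_grad = mse_grad(w, xs, ys)

# Eight workers, batch size 20 each, gradients combined afterwards.
num_workers, per_worker = 8, 20
shard_grads = [
    mse_grad(
        w,
        xs[i * per_worker:(i + 1) * per_worker],
        ys[i * per_worker:(i + 1) * per_worker],
    )
    for i in range(num_workers)
]
averaged_grad = sum(shard_grads) / num_workers
summed_grad = sum(shard_grads)

print("single :", single_grad)
print("average:", averaged_grad)  # matches single_grad up to rounding
print("sum    :", summed_grad)    # 8 times single_grad up to rounding
```

This only covers the gradient of a single step with equal-sized shards and a mean-reduced loss. It says nothing about dropout, per-GPU statistics, or anything else that could differ between the two setups.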

Unfortunately, I’m getting worse accuracy with distributed training no matter what batch size I use.

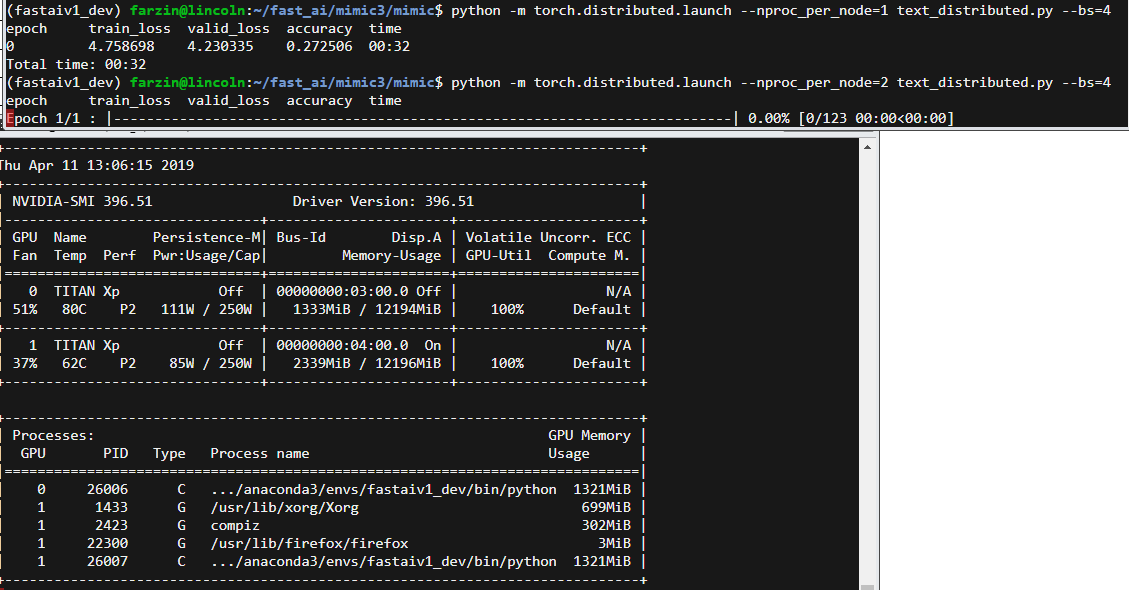

I’ve done several runs on this language model varying (only) the number of GPUs and Batch Size. I’m wondering if maybe there’s a bug (possibly with different Dropouts on each GPU? How would I check that?)

| Number of GPUs | Batch Size | Accuracy after 1 Epoch |
|---|---|---|
| 1 | 64 | 0.604424 |
| 1 | 160 | 0.619184 |
| 8 | 20 | 0.589825 |
| 8 | 40 | 0.589547 |
| 8 | 80 | 0.588944 |
| 8 | 140 | 0.588154 |

It seems weird to me that all of the 8-GPU runs got almost exactly the same accuracy, so varying the batch size didn’t matter at all, and that the 8x20 run wasn’t close to the 1x160 run. I’m trying to figure out what’s going wrong.

I did check that the GPU memory usage during the runs passed a sanity check (reducing batch size also reduced GPU memory in use).

Edit: I suppose one way to check the differing dropout hypothesis is to set a random seed. (Trying that now. Will know whether it helped in ~1 hour.)

Edit 2: Setting random seeds (np, torch, random, torch.cuda) didn’t work. Still got 0.584737 with 8 GPUs. Wondering if torch.cuda seed is per-process or global…
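For anyone wanting to reproduce the seeding step, it would look roughly like the sketch below. The helper names `seed_everything` and `sample_dropout_mask`, the seed value, and the mask size are assumptions for illustration, not the code from the actual training script. The check runs on CPU and only confirms that reseeding reproduces the same dropout mask within one process.

```python
import random

import numpy as np
import torch
import torch.nn.functional as F


def seed_everything(seed: int) -> None:
    """Seed the four RNGs listed above (hypothetical helper name)."""
    random.seed(seed)
    np.random.seed(seed)
    torch.manual_seed(seed)
    # Seeds every visible GPU; silently ignored when CUDA is unavailable.
    torch.cuda.manual_seed_all(seed)


def sample_dropout_mask(seed: int, size: int = 16, p: float = 0.5) -> torch.Tensor:
    """Return a boolean mask of the positions that survive dropout after seeding."""
    seed_everything(seed)
    return F.dropout(torch.ones(size), p=p, training=True) != 0


mask_a = sample_dropout_mask(seed=42)
mask_b = sample_dropout_mask(seed=42)

# Same seed, same process: the two masks should be identical.
print(torch.equal(mask_a, mask_b))
```

In a multi-process run, each process is a separate Python interpreter with its own RNG state, so a helper like this would have to be called inside every process. Printing a mask like `mask_a` from each rank would be one way to see whether the GPUs are dropping the same units.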

Edit 3: I feel pretty confident I’ve ruled out random seeds being an issue. I tried setting them to the current timestamp (in seconds) on every forward pass as well and that didn’t work either. Got 0.583825. Anyone have ideas of what else to try?

Edit 4: Shooting in the dark, but I tried adjusting the drop_mult (dividing it by the number of GPUs). Got 0.596205.