Created a simple Bird Sound Classifier based on Lesson 2.

The data is from: https://datadryad.org/resource/doi:10.5061/dryad.4g8b7/1

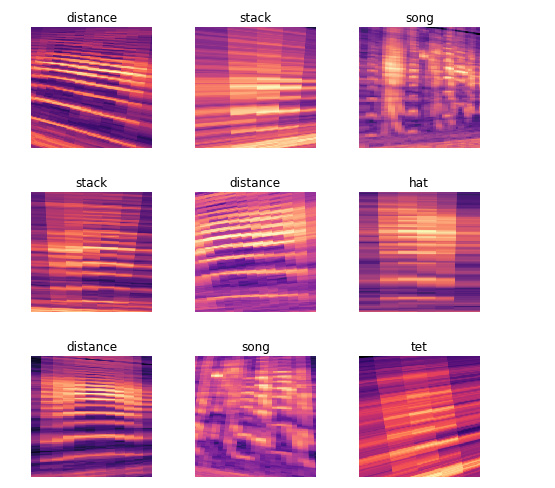

There are 6 types of bird calls: distance,hat,kackle,song,stack,tet.

This model gets around 80% accuracy, which is not bad at all for something that relies on so many different factors.

This is very useful for audio processing, as the audio itself can be converted into images!

This is the entire notebook: https://github.com/vishnubharadwaj00/BirdSoundClassifier