Hi,

I trained a model on street pictures from SF, NYC, Tokyo, and Paris. The error rate is pretty high (32%), but looking at what it gets right and wrong is pretty interesting: it thinks SF’s Chinatown is Tokyo, and it seems to associate Paris with beige.
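For anyone who wants to check this kind of confusion on their own predictions, here is a rough sketch of tallying which city gets mistaken for which. The helper names `error_rate` and `confusion_pairs` are hypothetical, the labels are made up for illustration, and this is not necessarily how the notebook does it.

```python
from collections import Counter

def error_rate(actual, predicted):
    """Fraction of predictions that differ from the true label."""
    wrong = sum(a != p for a, p in zip(actual, predicted))
    return wrong / len(actual)

def confusion_pairs(actual, predicted):
    """Count (actual, predicted) pairs for misclassified samples, most common first."""
    pairs = Counter((a, p) for a, p in zip(actual, predicted) if a != p)
    return pairs.most_common()

# Made-up labels for illustration only, not results from the real model.
actual = ["SF", "SF", "Tokyo", "Paris", "NYC", "SF"]
predicted = ["Tokyo", "Tokyo", "Tokyo", "Paris", "Paris", "SF"]

print(error_rate(actual, predicted))
print(confusion_pairs(actual, predicted))
```

The most frequent pairs point at which classes might need more (or more varied) training images.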

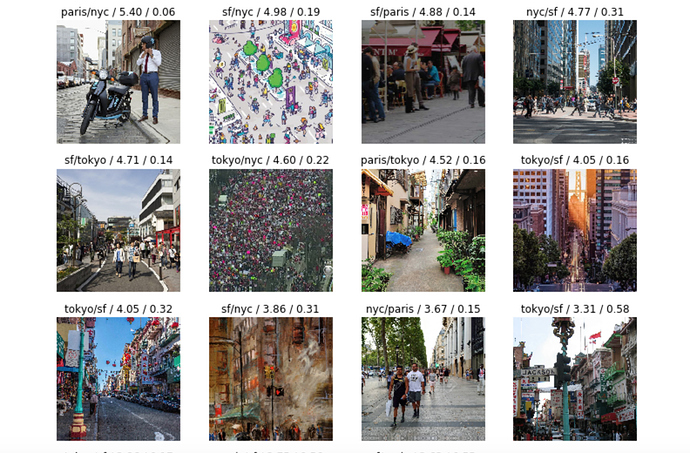

Top loss:

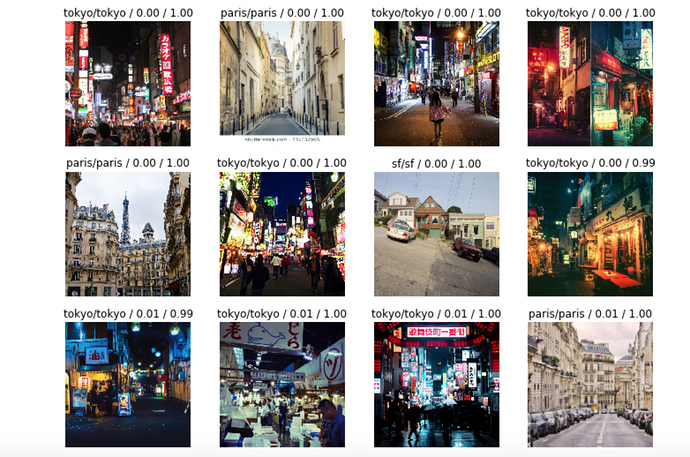
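In case it is useful, a "top loss" ranking can be sketched as sorting samples by their per-sample cross-entropy, so the most confidently wrong predictions come first. This is a minimal illustration with made-up probabilities and hypothetical helper names (`cross_entropy`, `top_losses`), not the code from the notebook.

```python
import math

def cross_entropy(probs, true_idx, eps=1e-12):
    """Negative log of the probability assigned to the true class."""
    return -math.log(max(probs[true_idx], eps))

def top_losses(all_probs, true_idxs, k=3):
    """Return (sample_index, loss) for the k samples with the highest loss."""
    losses = [
        (cross_entropy(p, t), i)
        for i, (p, t) in enumerate(zip(all_probs, true_idxs))
    ]
    losses.sort(reverse=True)
    return [(i, loss) for loss, i in losses[:k]]

# Class order and probabilities are invented for illustration.
classes = ["NYC", "Paris", "SF", "Tokyo"]
all_probs = [
    [0.05, 0.05, 0.10, 0.80],  # true class SF, model is confident it is Tokyo
    [0.10, 0.70, 0.10, 0.10],  # true class Paris, predicted correctly
    [0.40, 0.30, 0.20, 0.10],  # true class NYC, correct but unsure
]
true_idxs = [2, 1, 0]

for idx, loss in top_losses(all_probs, true_idxs, k=2):
    print(idx, classes[true_idxs[idx]], round(loss, 3))
```

A high loss does not always mean a wrong prediction: a correct but low-confidence prediction, like the third sample here, can also rank near the top.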

Top correct:

Notebook here.

Any suggestions for improving it are welcome!