Since I was definitely not intimidated by the amazing SG projects, and definitely not looking for a simple, 1-click setup-and-deploy idea:

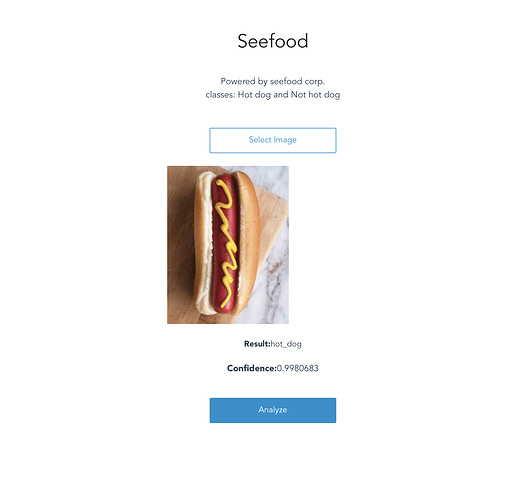

I made a "Not hot dog" app using seeme.ai. Thanks to @zerotosingularity for creating the platform!

Testing:

Ok, that’s a hot dog!

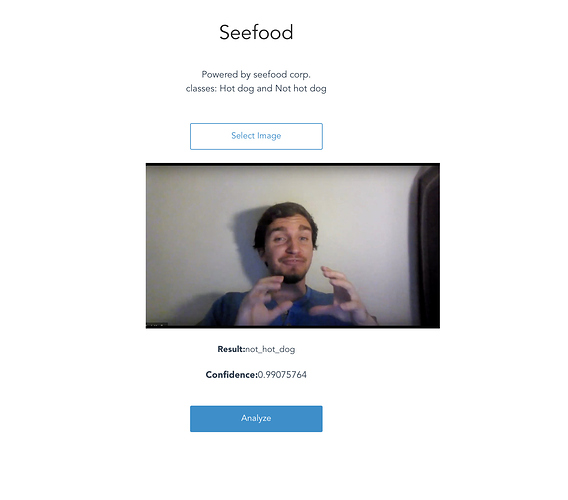

And @muellerzr is not. Hmm.

I think I did my homework well.