Hi

I am trying to learn from the video tutorials (which use fastai 0.7) while using fastai 1.0, since it is already out and I want to learn with the latest library. Obviously this creates various obstacles, as I constantly have to map each v0.7 function in the tutorial to its v1.0 equivalent. That said, for SGD with warm restarts on the last layer (the default), I am getting a result that looks wrong, at least as per my understanding. fit_sgd_warm is taken directly from the latest fastai v1.0 docs.

Code:

    from fastai.callbacks import *

    def fit_sgd_warm(learn, n_cycles, lr, mom, cycle_len, cycle_mult):
        n = len(learn.data.train_dl)
        phases = [TrainingPhase(n * (cycle_len * cycle_mult**i), lr, mom, lr_anneal=annealing_cos) for i in range(n_cycles)]
        sched = GeneralScheduler(learn, phases)
        learn.callbacks.append(sched)
        if cycle_mult != 1:
            total_epochs = int(cycle_len * (1 - (cycle_mult)**n_cycles)/(1-cycle_mult))
        else: total_epochs = n_cycles * cycle_len
        learn.fit(total_epochs)

    fit_sgd_warm(learn_a, 3, 1e-3, 0.9, 1, 1)
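For reference, the epoch arithmetic inside fit_sgd_warm can be checked without fastai. This is only a minimal sketch: the helper name `cycle_schedule` and the value of 100 iterations per epoch are made up for illustration, and it just mirrors the phase lengths and the total_epochs formula from the function above.

```python
def cycle_schedule(n_cycles, cycle_len, cycle_mult, iters_per_epoch):
    """Hypothetical helper: per-cycle iteration counts and total epochs."""
    # Same lengths that fit_sgd_warm passes to each TrainingPhase.
    phase_iters = [iters_per_epoch * cycle_len * cycle_mult**i
                   for i in range(n_cycles)]
    # Sum of the geometric series cycle_len * cycle_mult**i for i < n_cycles.
    if cycle_mult != 1:
        total_epochs = int(cycle_len * (1 - cycle_mult**n_cycles) / (1 - cycle_mult))
    else:
        total_epochs = n_cycles * cycle_len
    return phase_iters, total_epochs

# iters_per_epoch=100 is an assumed value, not taken from my data.
print(cycle_schedule(3, 1, 1, 100))  # ([100, 100, 100], 3)
print(cycle_schedule(3, 1, 2, 100))  # ([100, 200, 400], 7)
```

So with the arguments I pass (n_cycles=3, cycle_len=1, cycle_mult=1) I would expect 3 epochs in total, one per cycle.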

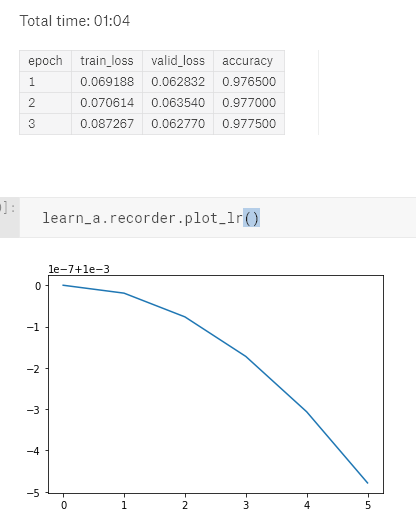

Output:

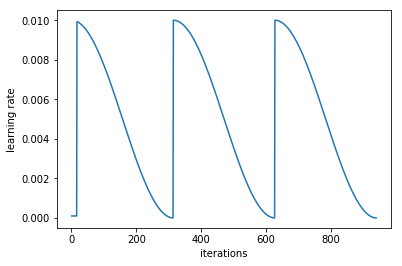

But I should be getting something like below, because n_cycles=3

I expect the above output because, in the tutorial, the section on improving the model using SGD with restarts (snapshot ensemble) shows this as the expected result. Here is the v0.7 notebook of the tutorial for reference.
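To make the expectation concrete, here is a rough sketch of the learning-rate curve I have in mind, written in plain Python rather than with fastai. It assumes the rate is cosine-annealed from lr down to 0 within each cycle and jumps back to lr at the start of the next one; the function name `cosine_restart_lrs` and the 4 iterations per epoch are hypothetical, chosen only to keep the output small.

```python
import math

def cosine_restart_lrs(n_cycles, lr, cycle_len, cycle_mult, iters_per_epoch):
    """Hypothetical sketch: learning rate at every iteration, with restarts."""
    lrs = []
    for i in range(n_cycles):
        length = iters_per_epoch * cycle_len * cycle_mult**i
        for step in range(length):
            pct = step / length  # fraction of the current cycle completed
            # Cosine annealing from lr (pct=0) towards 0 (pct=1), assumed end value.
            lrs.append(lr / 2 * (math.cos(math.pi * pct) + 1))
    return lrs

lrs = cosine_restart_lrs(n_cycles=3, lr=1e-3, cycle_len=1, cycle_mult=1,
                         iters_per_epoch=4)
# Iterations where the rate is back at its maximum, i.e. the warm restarts.
restarts = [i for i, v in enumerate(lrs) if math.isclose(v, 1e-3)]
print(restarts)  # [0, 4, 8]
```

With n_cycles=3 this gives three separate decays, which is the shape I expected to see in the learning-rate plot.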