wildman

(John)

1

Hello,

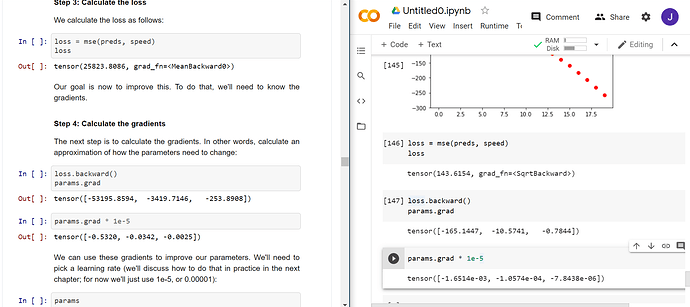

I’m working through the Stochastic Gradient Descent example in Chapter 4. It was working well until I got to calculating the loss and the gradients.

I’ve been through it a few times and I have copied across the code to Colab so I don’t think there are any typos.

My output deviates from the fastbook example - see attached image. Any ideas, please?

Much appreciated.

Hi! Since the parameters are initialized as random numbers…

params = torch.randn(3).requires_grad_()

…, your output is expected to be different than in the book. As long as the loss decreases when you apply apply_step a few times, you’re good

wildman

(John)

3

Great, thanks Johannes  I must have missed that !

I must have missed that !

Cheers.