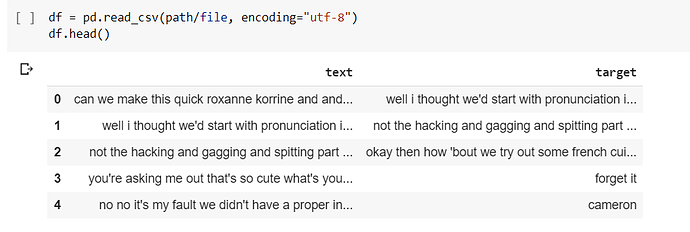

So I’m trying to make a chatbot, essentially using the lesson 8 notebook from the NLP course (here). I haven’t really changed any of the driving code, just the input file. Here is a sample; it’s from the Cornell movie dialogue corpus.

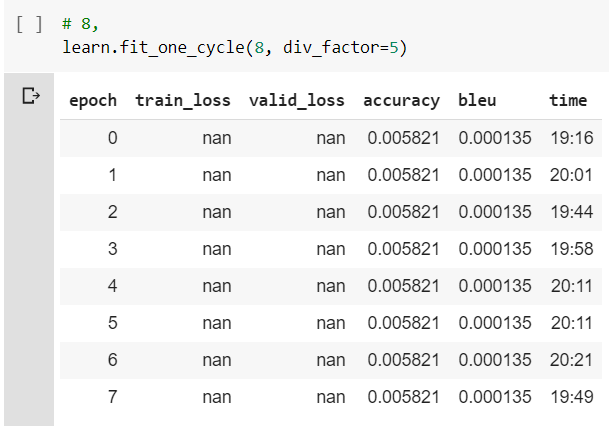

So essentially I just thought I could use the transformer model to train on the corpus, and it would work fine after a bit of training, but while training, it never improved, and also the

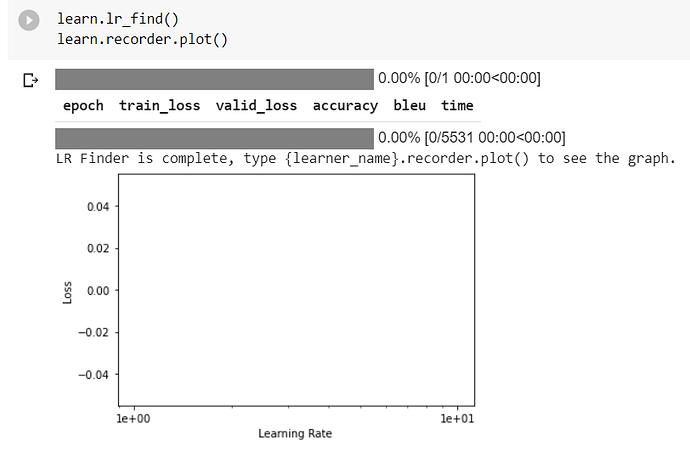

.lr_find() didn’t work so that may be related to the problem.

Also, this is all running on Google Colab with GPU acceleration, using fastai v1.0.61.