Hi guys,

I'm trying to write a simple text generator (like Jeremy did in lesson 4), but using SentencePieceTokenizer. The problem is that when creating the DataLoaders I always get this error:

RuntimeError: Internal: src/trainer_interface.cc(429) [!sentences_.empty()]

(You can find the full stack trace at the end of this post.)

My code is:

from fastai.text.all import *

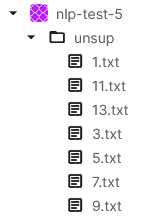

path = '/kaggle/input/nlp-test-5' # contains single folder called 'unsup'

files = partial(get_text_files, folders=['unsup'])

tokenizer = SentencePieceTokenizer(lang='en', special_toks=[])

dls_lm = DataBlock(
    blocks=TextBlock.from_folder('/kaggle/working', tok=tokenizer, is_lm=True),
    get_items=files,
    splitter=RandomSplitter(0.1)
).dataloaders(path, path=path, bs=64, seq_len=80)

Unfortunately, it raises this error:

---------------------------------------------------------------------------

RuntimeError Traceback (most recent call last)

Cell In[8], line 11

6 files = partial(get_text_files, folders=['unsup'])

8 tokenizer = SentencePieceTokenizer(lang='en', special_toks=[])

10 dls_lm = DataBlock(

---> 11 blocks=TextBlock.from_folder('/kaggle/working', tok=tokenizer, is_lm=True),

12 get_items=files,

13 splitter=RandomSplitter(0.1)

14 ).dataloaders(path, path=path, bs=64, seq_len=80)

16 learn = language_model_learner(dls_lm,

17 AWD_LSTM,

18 pretrained=False,

19 drop_mult=0.2,

20 metrics=[accuracy],

21 pretrained_fnames=['model']).to_fp16()

File /opt/conda/lib/python3.10/site-packages/fastai/text/data.py:242, in TextBlock.from_folder(cls, path, vocab, is_lm, seq_len, backwards, min_freq, max_vocab, **kwargs)

238 @classmethod

239 @delegates(Tokenizer.from_folder, keep=True)

240 def from_folder(cls, path, vocab=None, is_lm=False, seq_len=72, backwards=False, min_freq=3, max_vocab=60000, **kwargs):

241 "Build a `TextBlock` from a `path`"

--> 242 return cls(Tokenizer.from_folder(path, **kwargs), vocab=vocab, is_lm=is_lm, seq_len=seq_len,

243 backwards=backwards, min_freq=min_freq, max_vocab=max_vocab)

File /opt/conda/lib/python3.10/site-packages/fastai/text/core.py:281, in Tokenizer.from_folder(cls, path, tok, rules, **kwargs)

279 path = Path(path)

280 if tok is None: tok = WordTokenizer()

--> 281 output_dir = tokenize_folder(path, tok=tok, rules=rules, **kwargs)

282 res = cls(tok, counter=load_pickle(output_dir/fn_counter_pkl),

283 lengths=load_pickle(output_dir/fn_lengths_pkl), rules=rules, mode='folder')

284 res.path,res.output_dir = path,output_dir

File /opt/conda/lib/python3.10/site-packages/fastai/text/core.py:186, in tokenize_folder(path, extensions, folders, output_dir, skip_if_exists, **kwargs)

184 files = get_files(path, extensions=extensions, recurse=True, folders=folders)

185 def _f(i,output_dir): return output_dir/files[i].relative_to(path)

--> 186 return _tokenize_files(_f, files, path, skip_if_exists=skip_if_exists, **kwargs)

File /opt/conda/lib/python3.10/site-packages/fastai/text/core.py:169, in _tokenize_files(func, files, path, output_dir, output_names, n_workers, rules, tok, encoding, skip_if_exists)

166 rules = partial(Path.read_text, encoding=encoding) + L(ifnone(rules, defaults.text_proc_rules.copy()))

168 lengths,counter = {},Counter()

--> 169 for i,tok in parallel_tokenize(files, tok, rules, n_workers=n_workers):

170 out = func(i,output_dir)

171 out.mk_write(' '.join(tok), encoding=encoding)

File /opt/conda/lib/python3.10/site-packages/fastai/text/core.py:150, in parallel_tokenize(items, tok, rules, n_workers, **kwargs)

148 "Calls optional `setup` on `tok` before launching `TokenizeWithRules` using `parallel_gen"

149 if tok is None: tok = WordTokenizer()

--> 150 if hasattr(tok, 'setup'): tok.setup(items, rules)

151 return parallel_gen(TokenizeWithRules, items, tok=tok, rules=rules, n_workers=n_workers, **kwargs)

File /opt/conda/lib/python3.10/site-packages/fastai/text/core.py:369, in SentencePieceTokenizer.setup(self, items, rules)

367 for t in progress_bar(maps(*rules, items), total=len(items), leave=False):

368 f.write(f'{t}\n')

--> 369 sp_model = self.train(raw_text_path)

370 self.tok = SentencePieceProcessor()

371 self.tok.Load(str(sp_model))

File /opt/conda/lib/python3.10/site-packages/fastai/text/core.py:353, in SentencePieceTokenizer.train(self, raw_text_path)

351 vocab_sz = self._get_vocab_sz(raw_text_path) if self.vocab_sz is None else self.vocab_sz

352 spec_tokens = ['\u2581'+s for s in self.special_toks]

--> 353 SentencePieceTrainer.Train(" ".join([

354 f"--input={raw_text_path} --vocab_size={vocab_sz} --model_prefix={self.cache_dir/'spm'}",

355 f"--character_coverage={self.char_coverage} --model_type={self.model_type}",

356 f"--unk_id={len(spec_tokens)} --pad_id=-1 --bos_id=-1 --eos_id=-1 --minloglevel=2",

357 f"--user_defined_symbols={','.join(spec_tokens)} --hard_vocab_limit=false"]))

358 raw_text_path.unlink()

359 return self.cache_dir/'spm.model'

File /opt/conda/lib/python3.10/site-packages/sentencepiece/__init__.py:989, in SentencePieceTrainer.Train(arg, logstream, **kwargs)

986 @staticmethod

987 def Train(arg=None, logstream=None, **kwargs):

988 with _LogStream(ostream=logstream):

--> 989 SentencePieceTrainer._Train(arg=arg, **kwargs)

File /opt/conda/lib/python3.10/site-packages/sentencepiece/__init__.py:945, in SentencePieceTrainer._Train(arg, **kwargs)

943 """Train Sentencepiece model. Accept both kwargs and legacy string arg."""

944 if arg is not None and type(arg) is str:

--> 945 return SentencePieceTrainer._TrainFromString(arg)

947 def _encode(value):

948 """Encode value to CSV.."""

File /opt/conda/lib/python3.10/site-packages/sentencepiece/__init__.py:923, in SentencePieceTrainer._TrainFromString(arg)

921 @staticmethod

922 def _TrainFromString(arg):

--> 923 return _sentencepiece.SentencePieceTrainer__TrainFromString(arg)

RuntimeError: Internal: src/trainer_interface.cc(429) [!sentences_.empty()]

If I remove SentencePieceTokenizer, the DataLoaders are created properly and show_batch() prints tokens.

My input is several txt files, each containing several sentences. Each sentence ends with a period (.) and sits on its own line. Line endings are Unix-style LF.

None of the files is larger than 300 bytes.
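In case it helps with diagnosing: the assertion name in the error, `!sentences_.empty()`, reads as if the SentencePiece trainer received no sentences at all, so one thing worth checking is whether each folder involved actually contains text. Below is a minimal sketch of such a check using only `pathlib`. The helper name `summarize_text_folder` is hypothetical (it is not part of fastai), and it only looks at `.txt` files, which is an assumption about how the input files are named. The two folders are the ones that appear in my code above.

```python
from pathlib import Path


def summarize_text_folder(folder):
    """Return (number of .txt files, number of non-empty lines) found under `folder`.

    Hypothetical helper for a sanity check; not part of fastai or sentencepiece.
    """
    root = Path(folder)
    if not root.is_dir():
        # A missing folder is reported the same way as an empty one.
        return 0, 0
    n_files, n_lines = 0, 0
    # Search recursively, so files inside sub-folders such as 'unsup' are counted.
    for txt in sorted(root.rglob("*.txt")):
        n_files += 1
        text = txt.read_text(encoding="utf-8", errors="replace")
        n_lines += sum(1 for line in text.splitlines() if line.strip())
    return n_files, n_lines


# Folder with the data, and the folder passed to TextBlock.from_folder.
for folder in ["/kaggle/input/nlp-test-5", "/kaggle/working"]:
    print(folder, summarize_text_folder(folder))
```

If either folder reports zero files or zero non-empty lines, that would be consistent with the tokenizer having nothing to train on, though this is only a guess based on the error message.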

Structure of input data:

Can anyone help me with this issue? I tried googling it, but there are only a few links about sentences_.empty() and none of them helped me.